
Open Standards for Data Mesh

Dr. Simon Harrer, Co-Founder & CEO @ Entropy Data · April 21, 2026

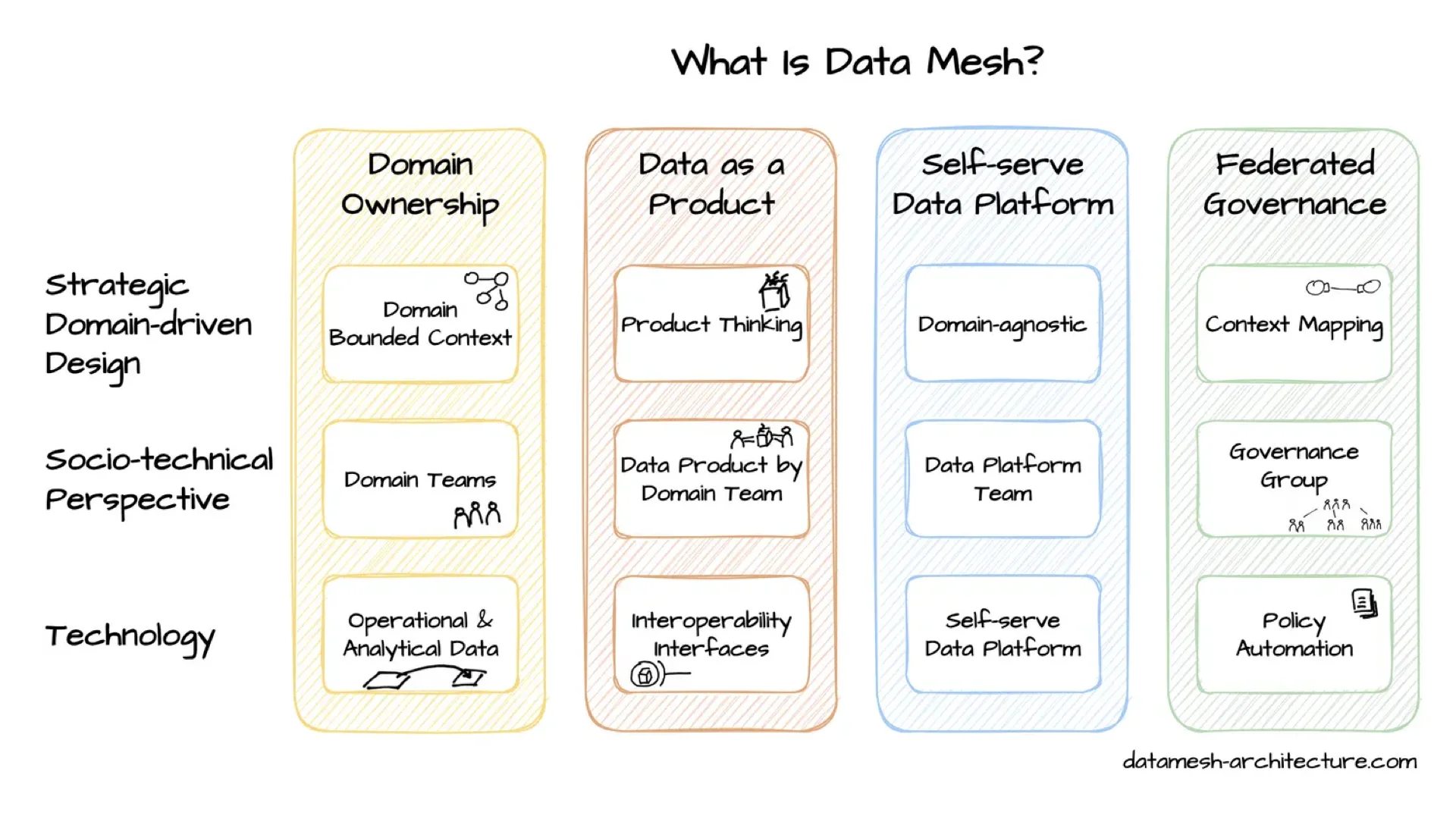

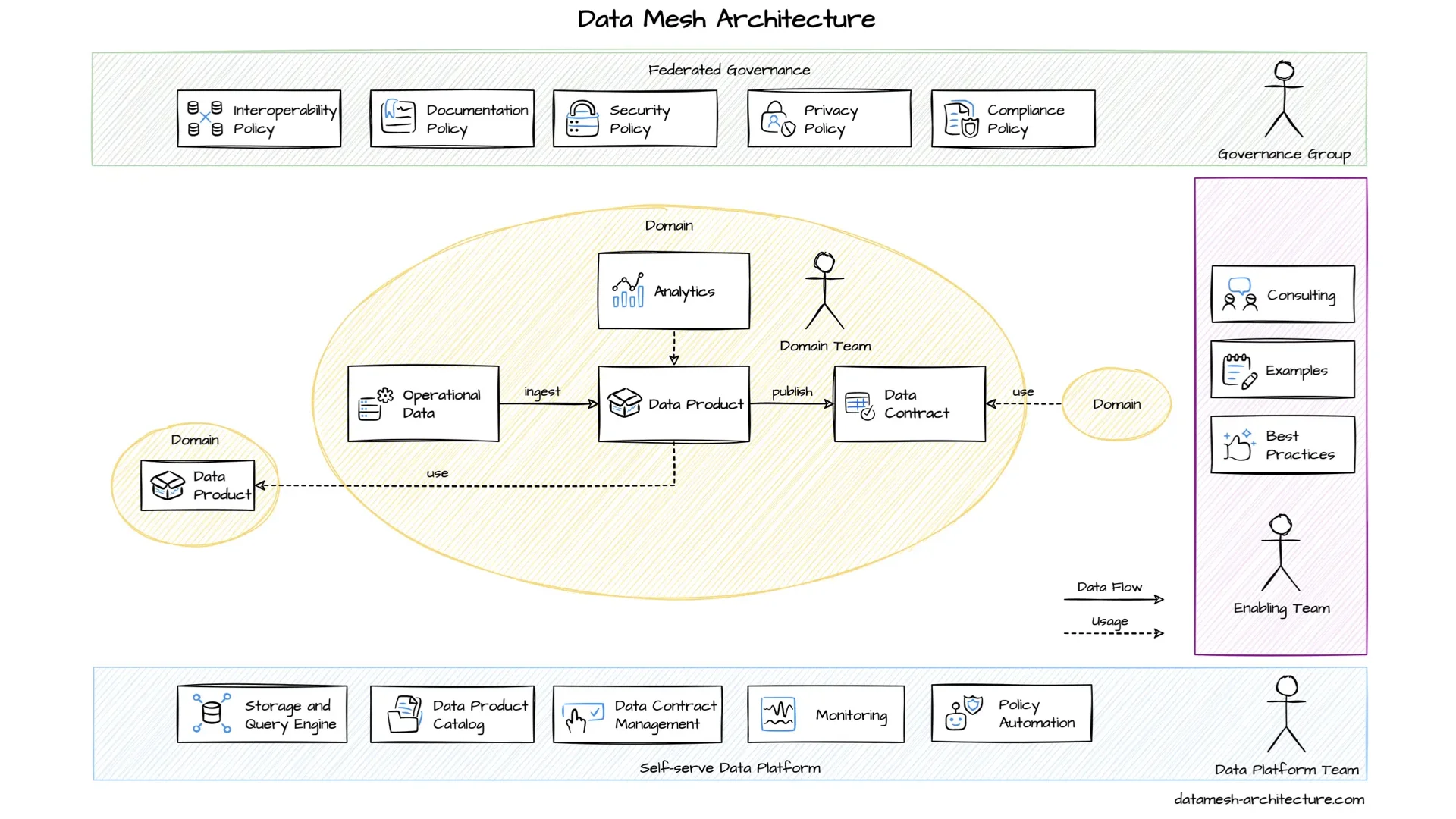

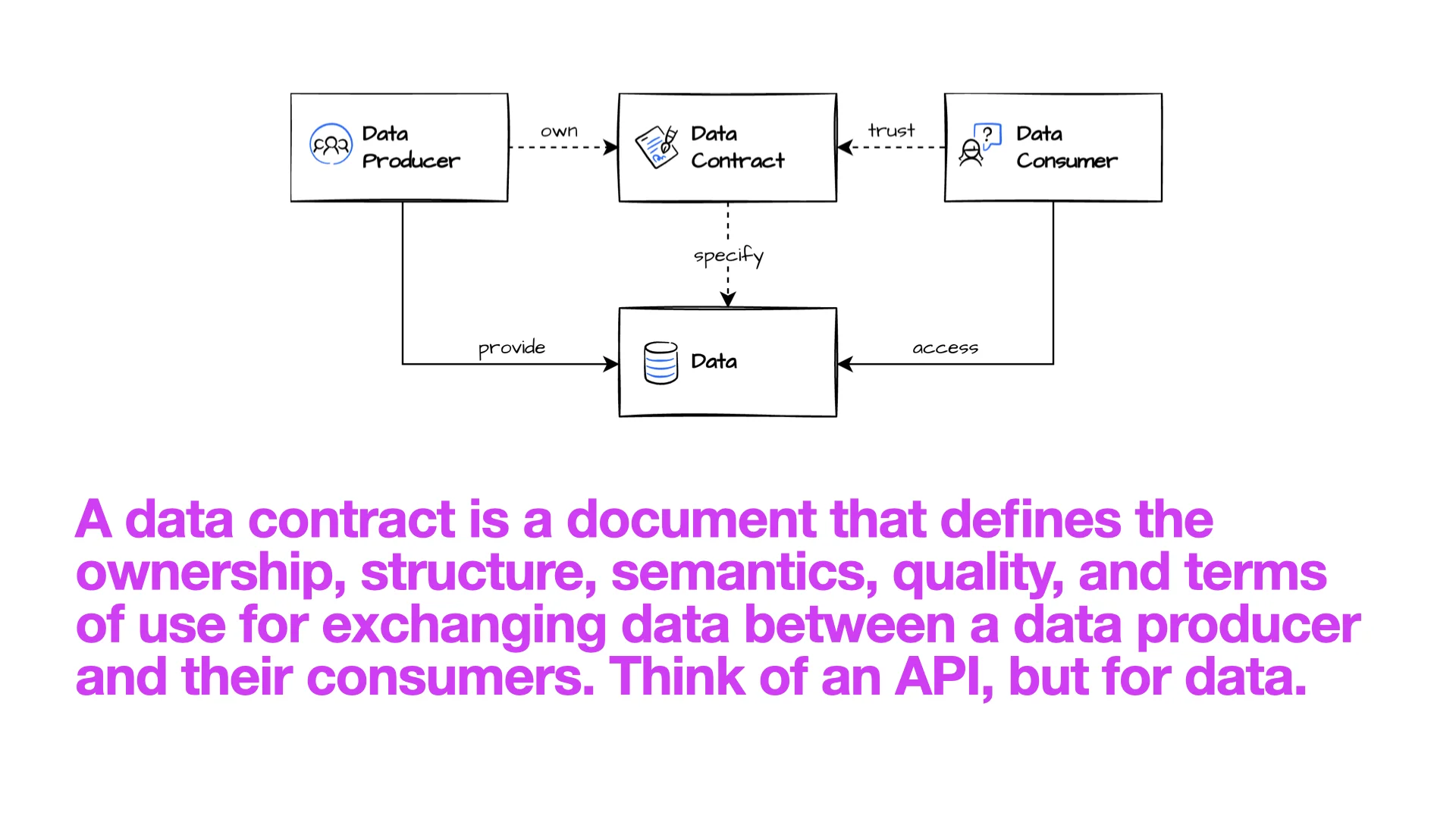

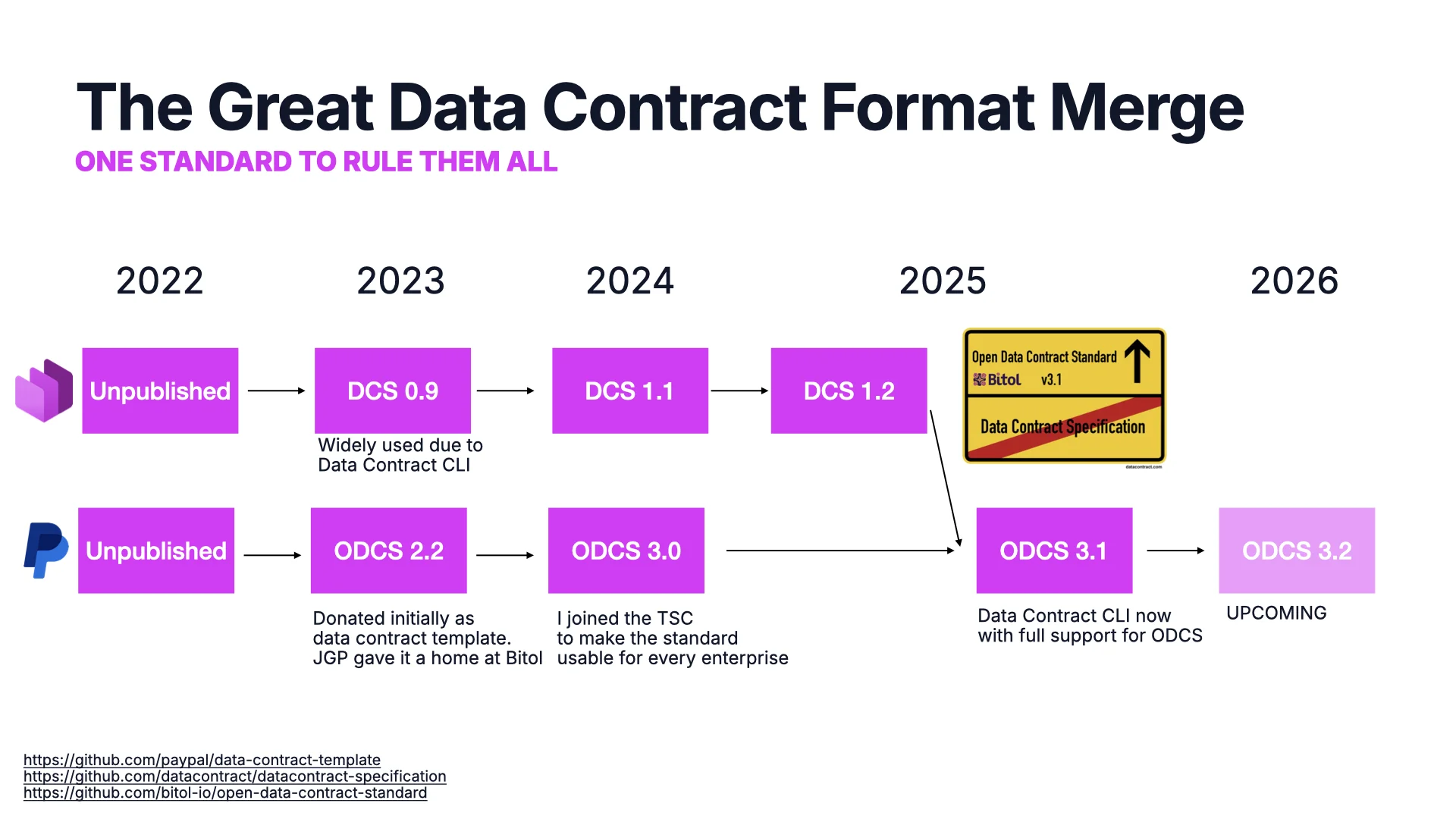

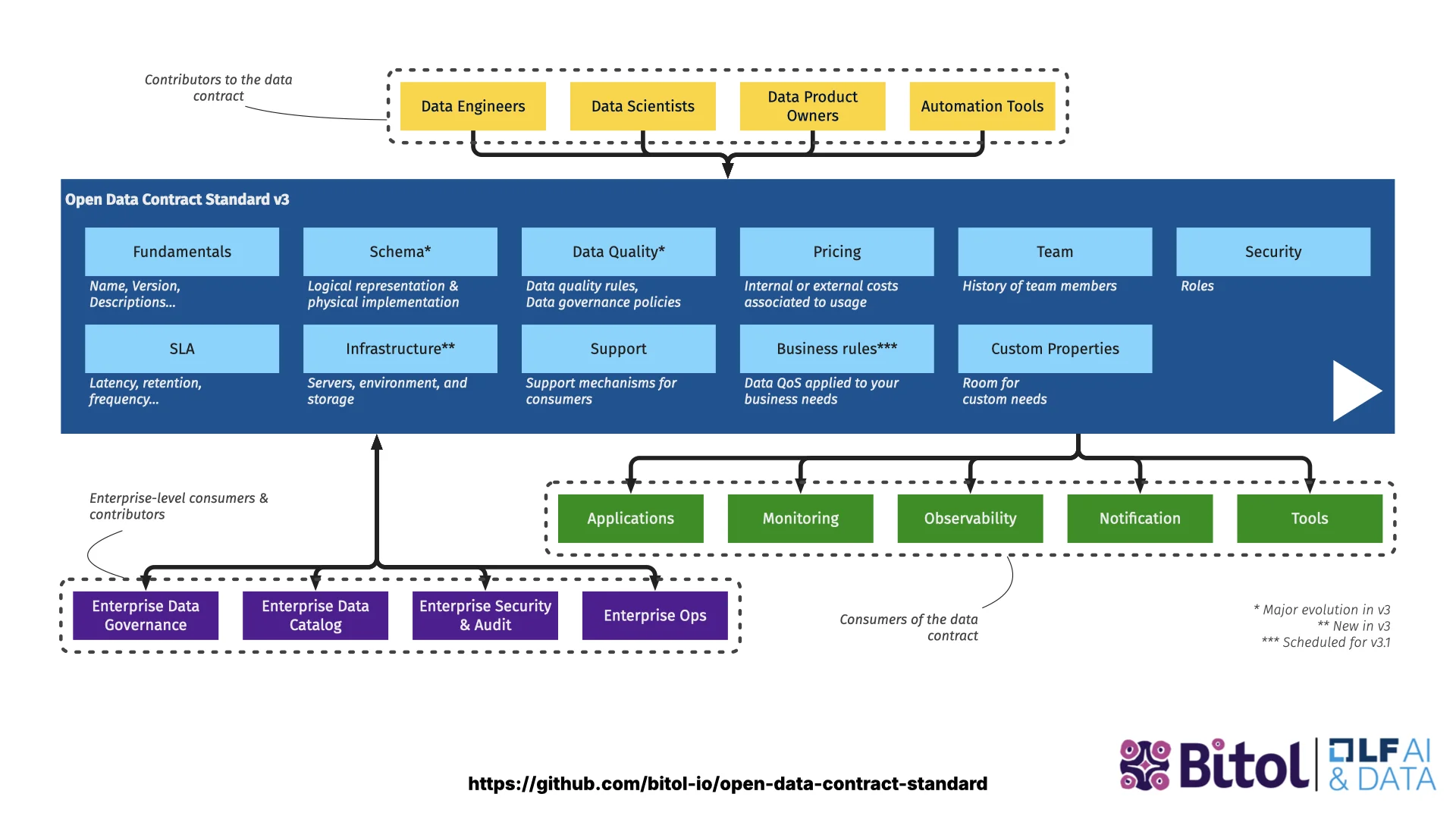

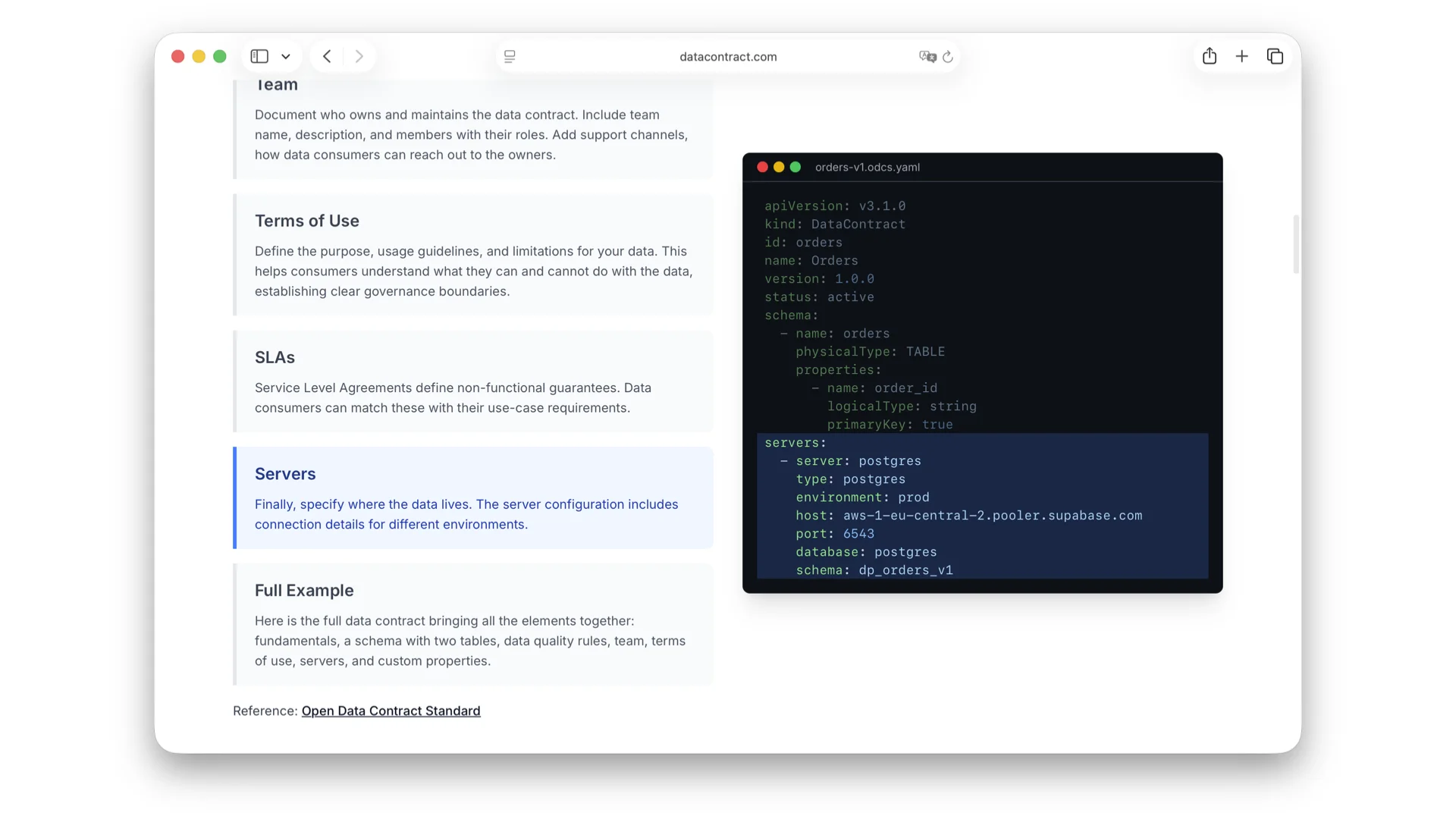

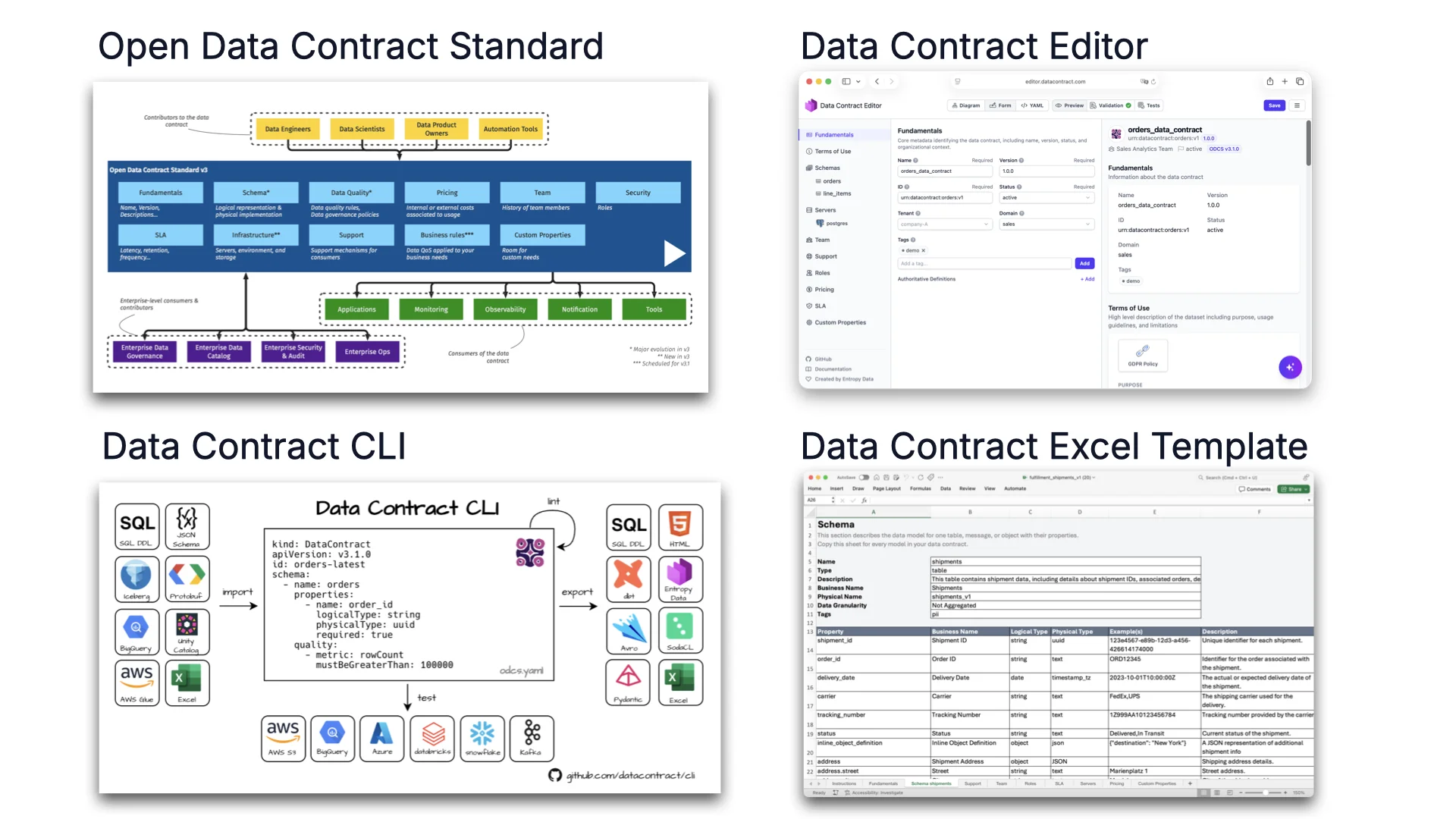

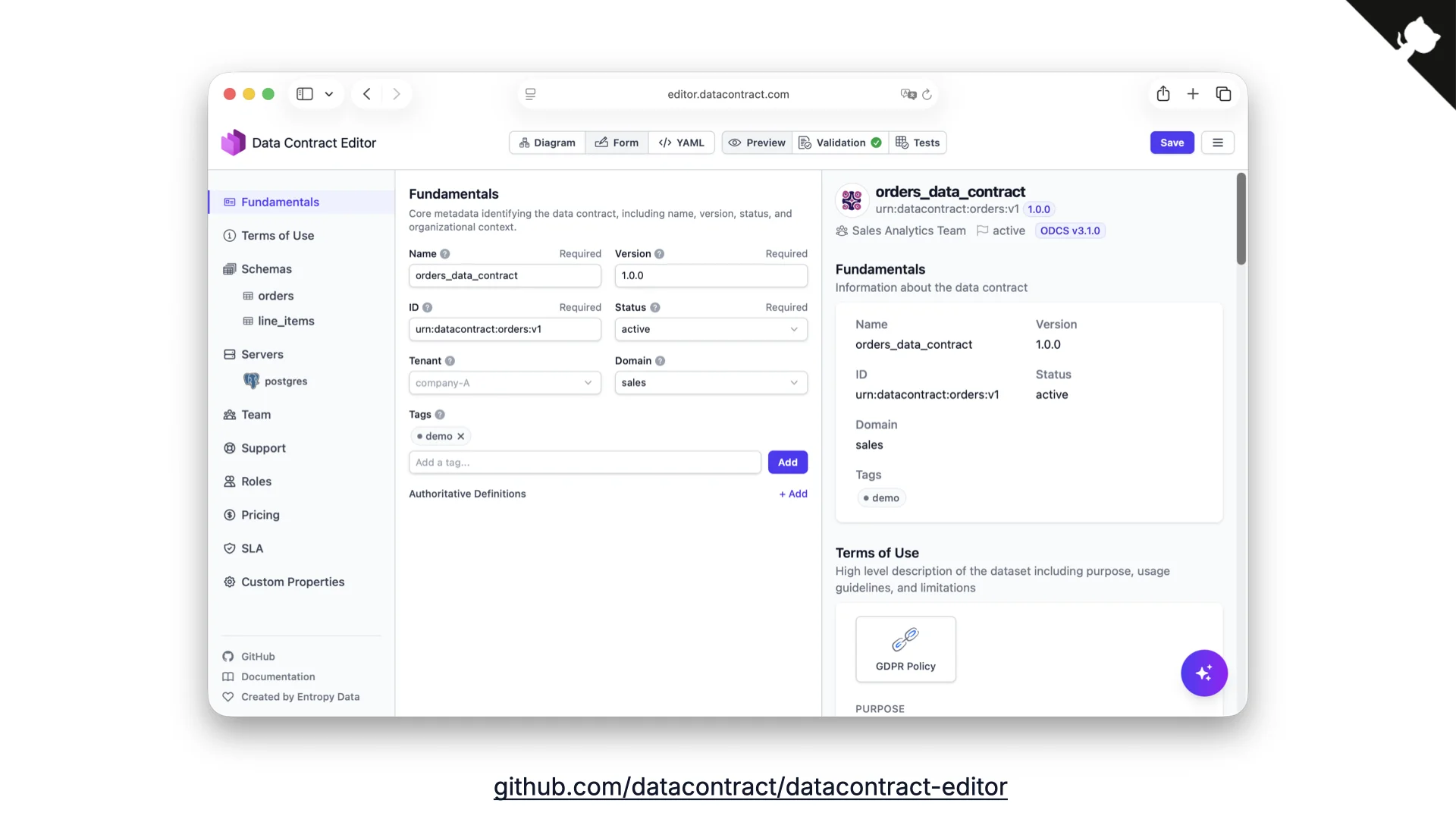

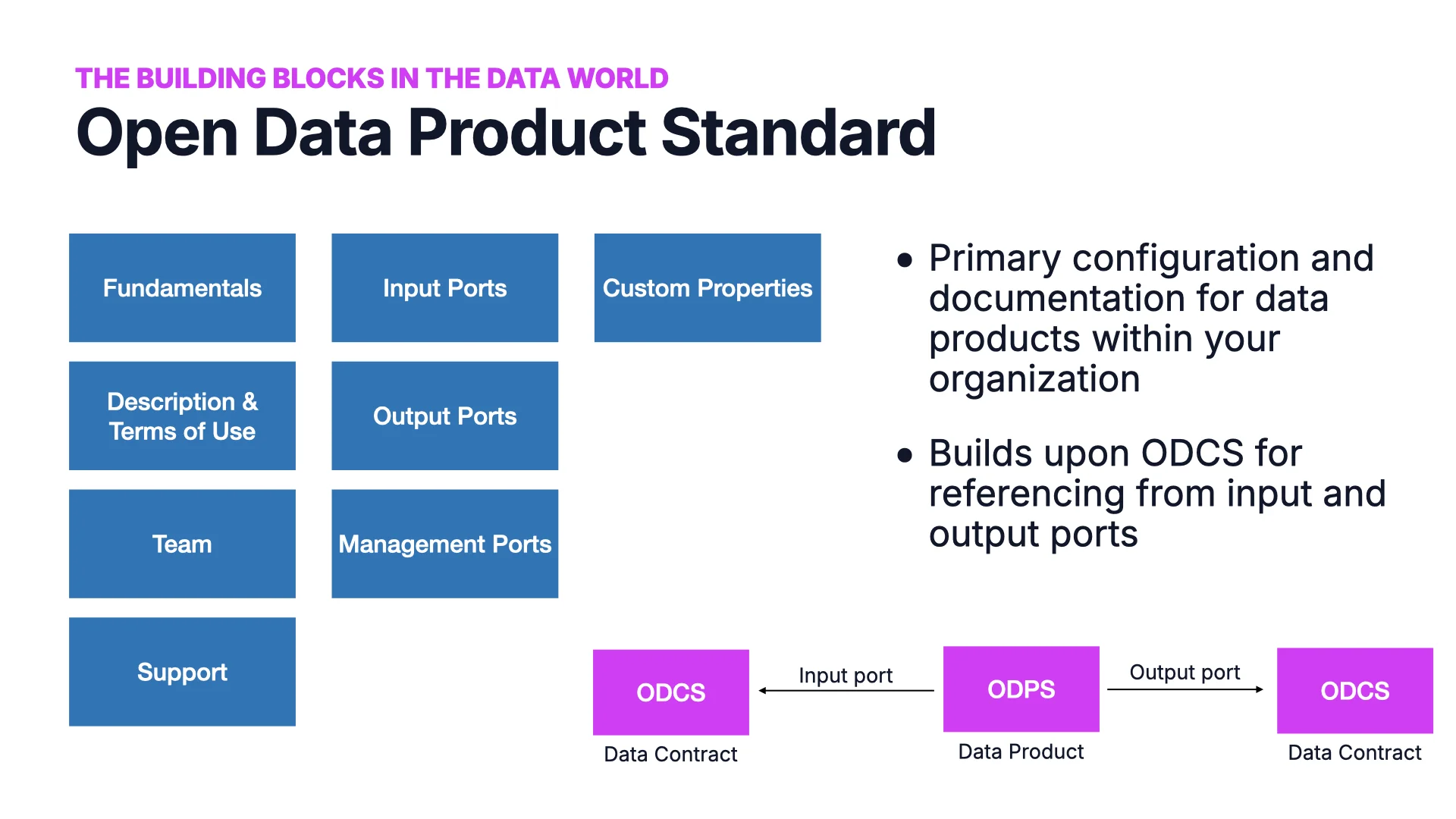

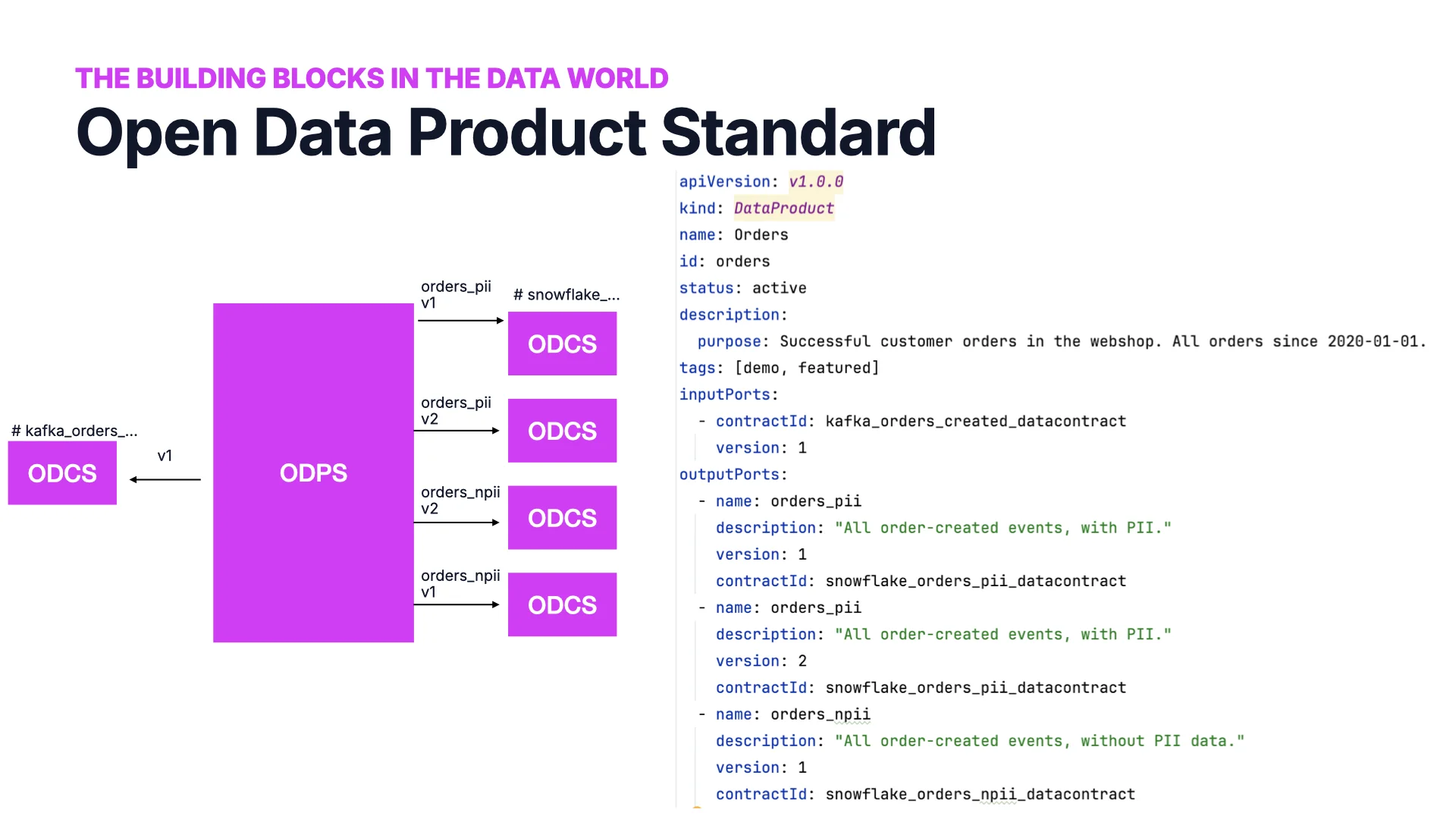

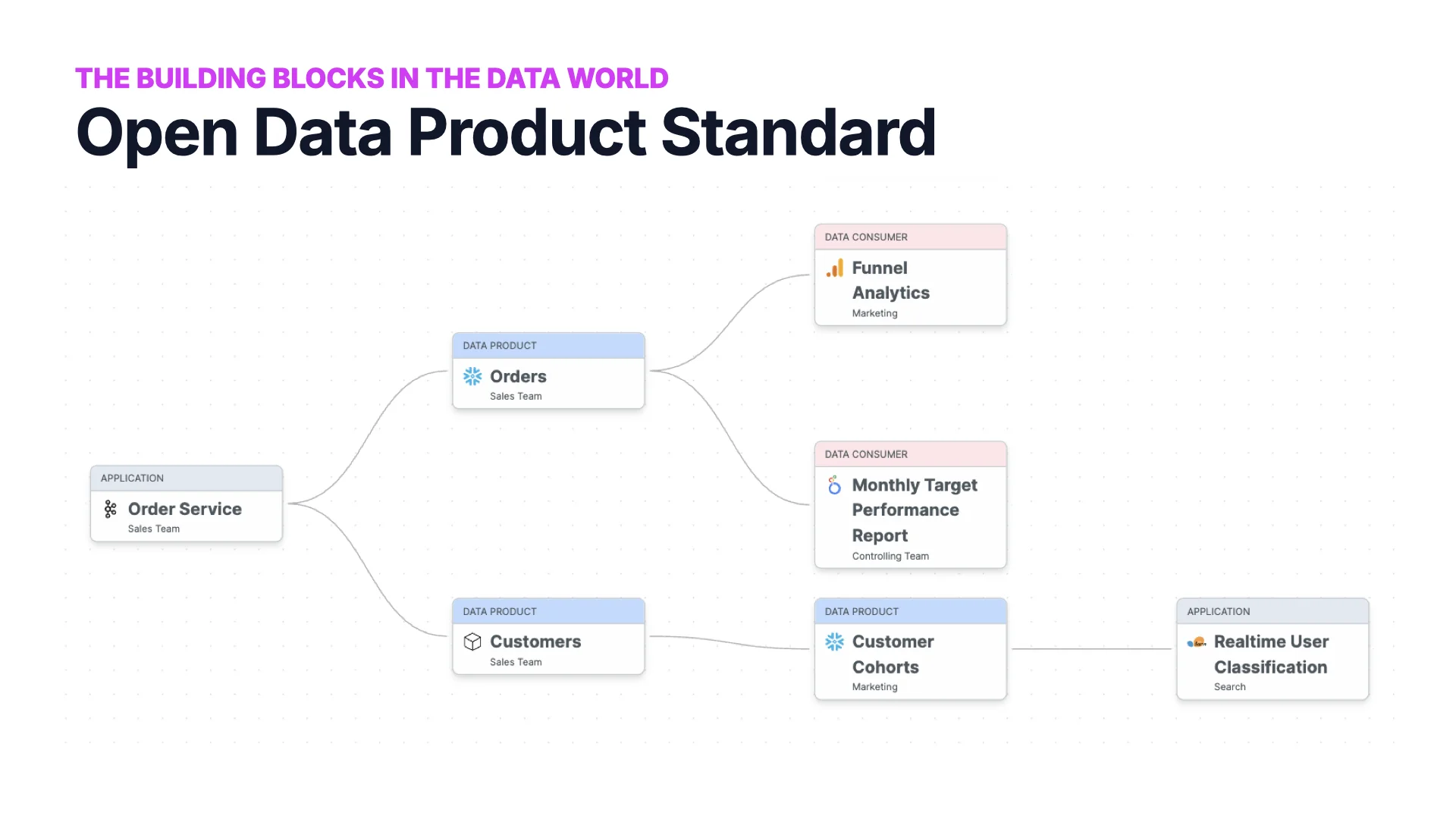

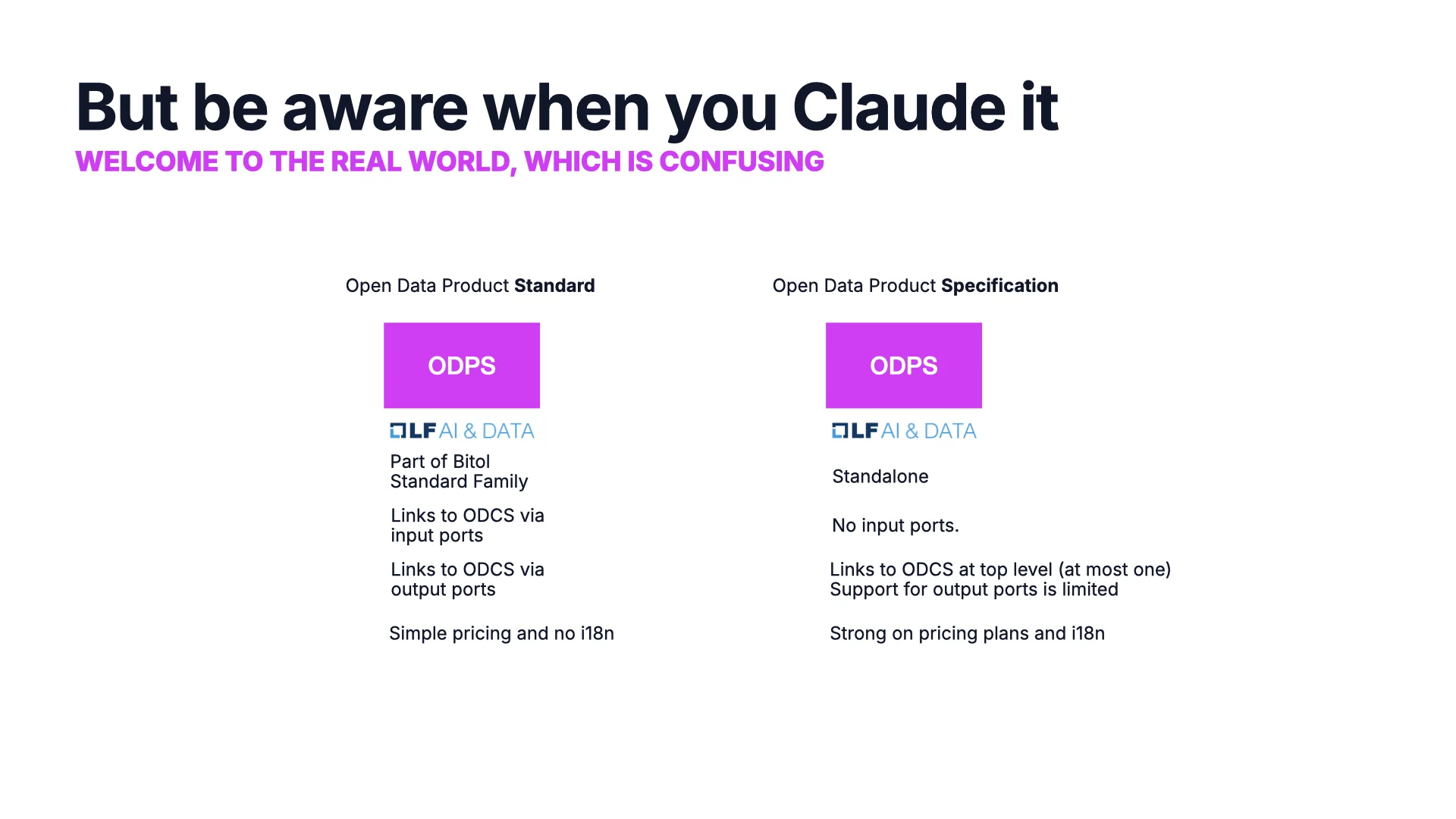

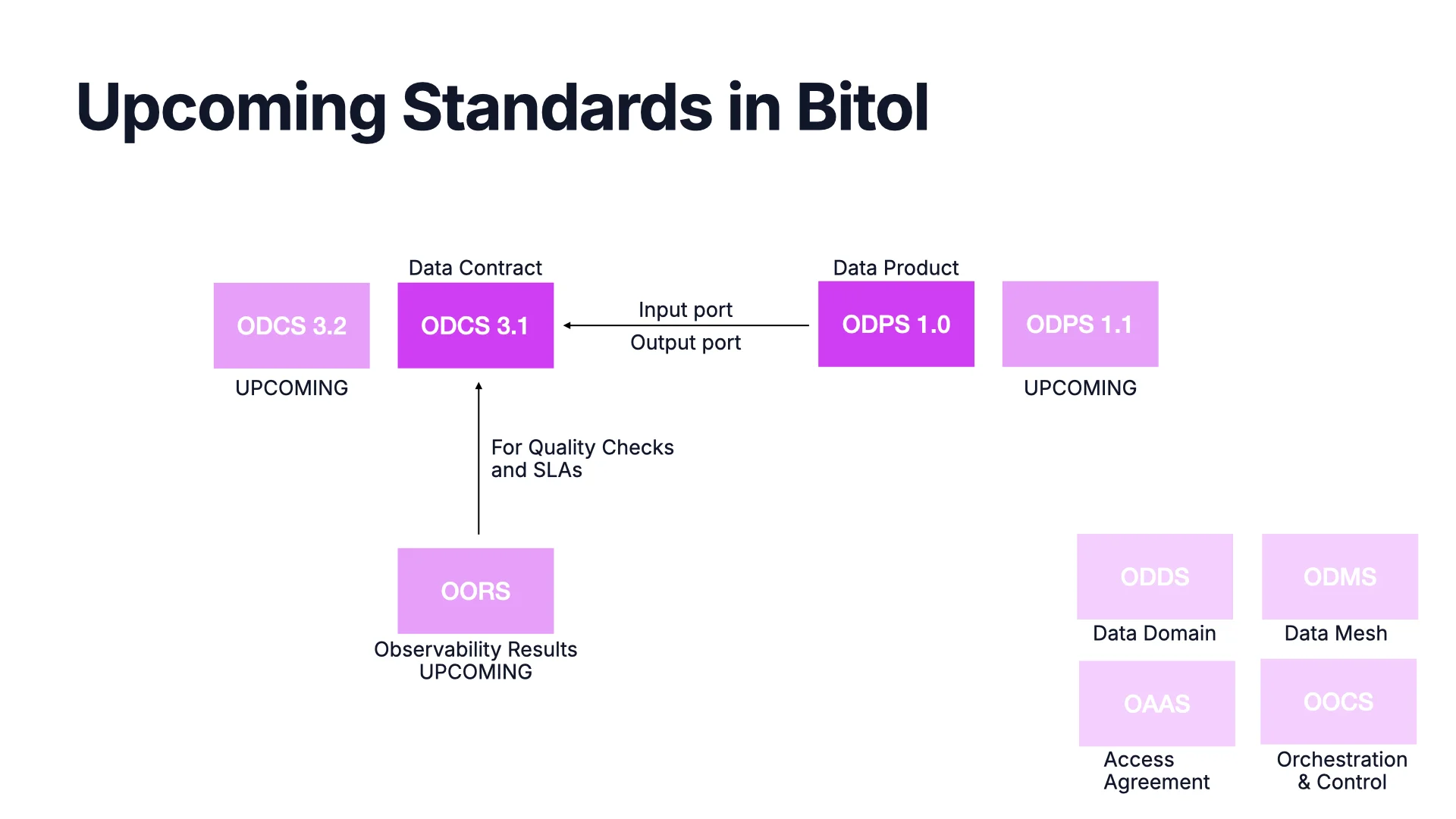

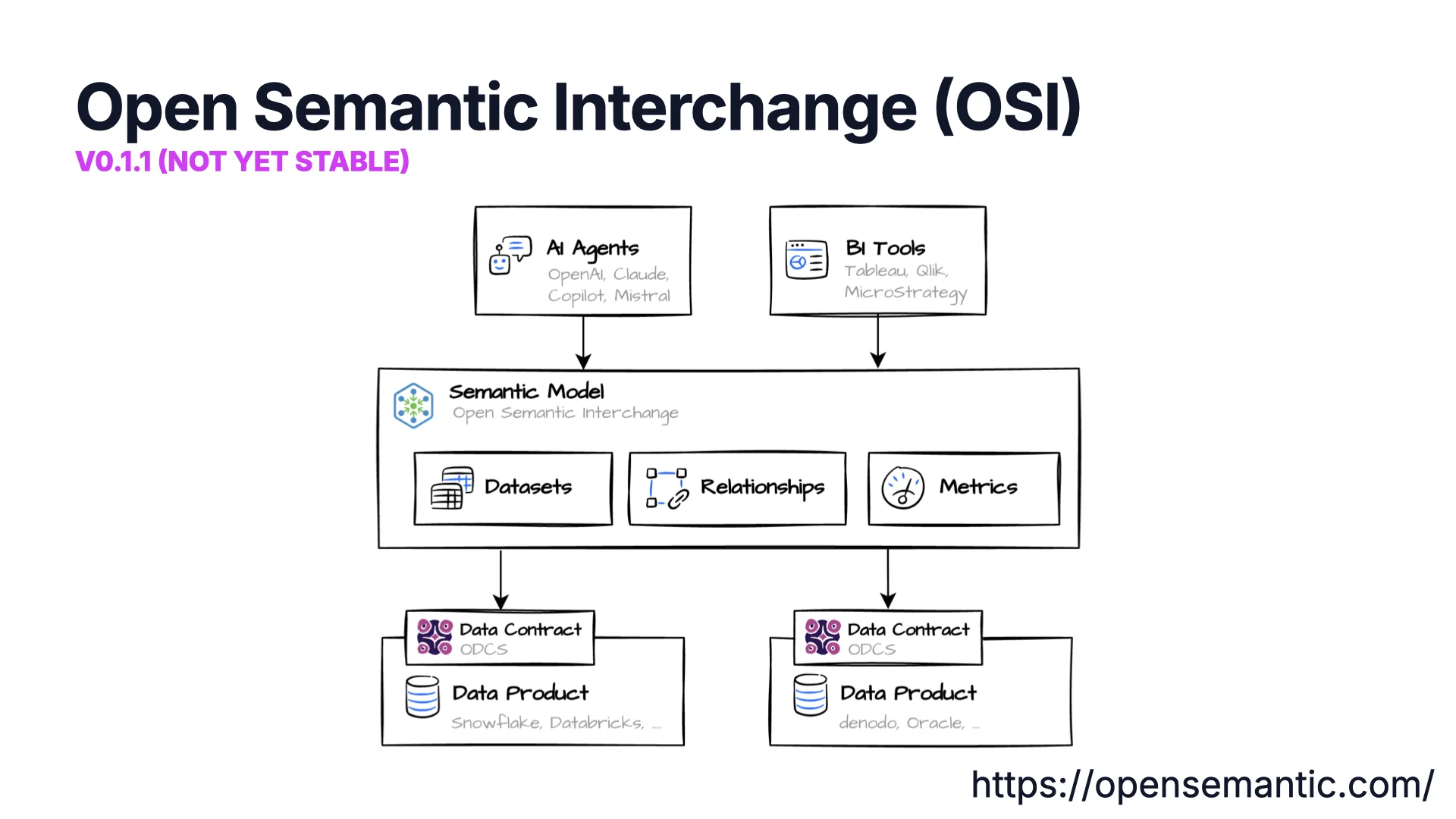

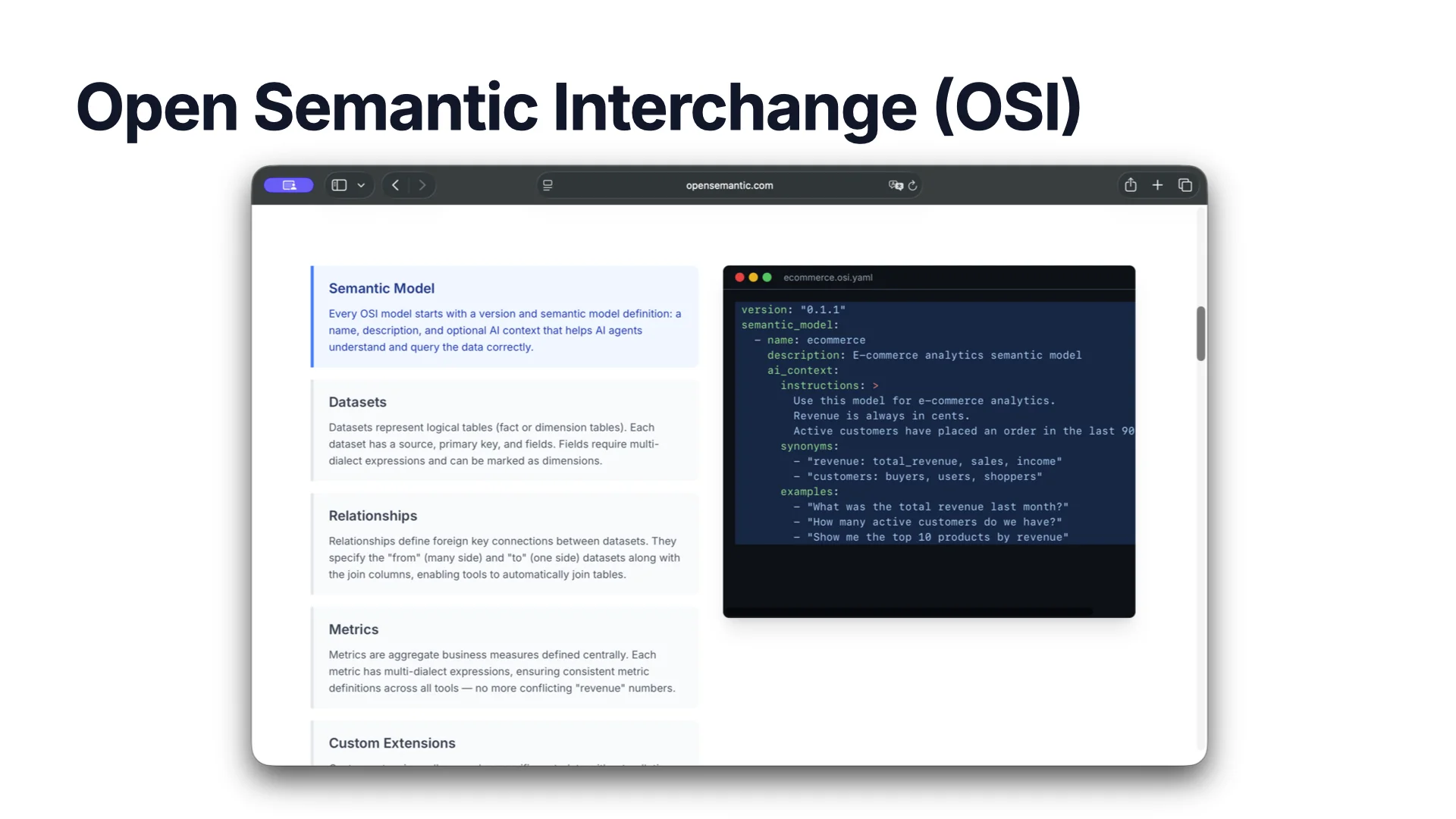

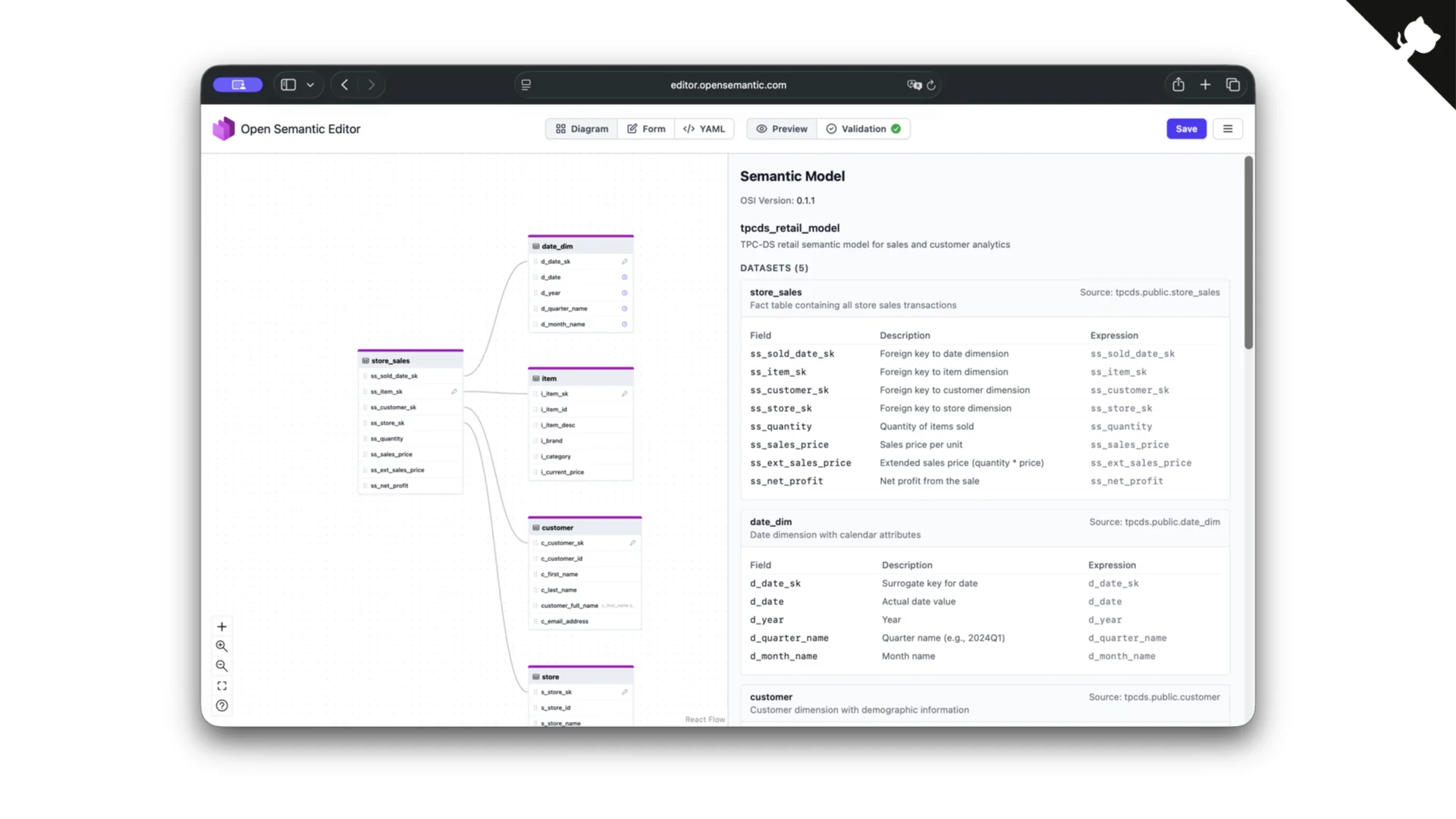

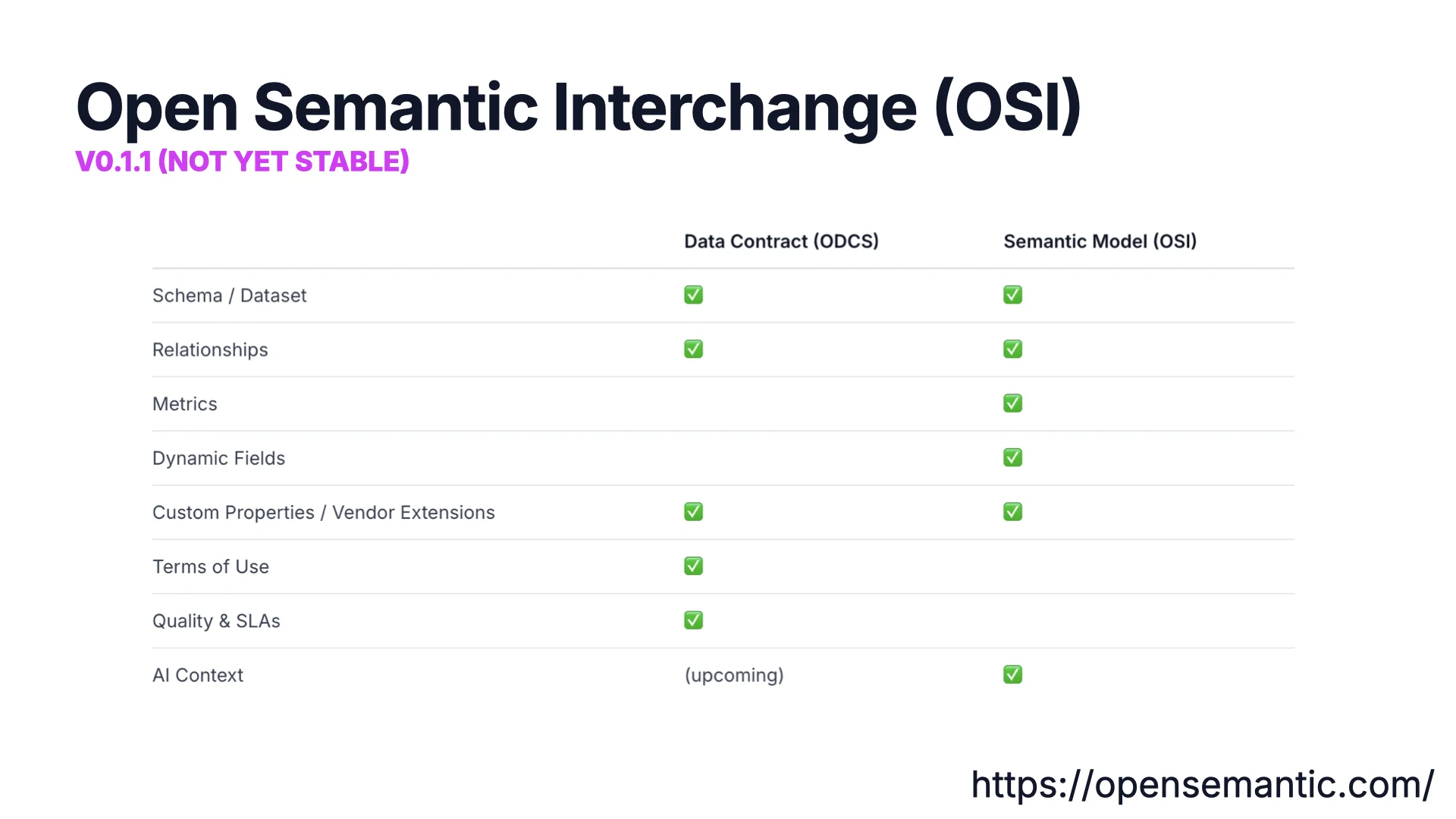

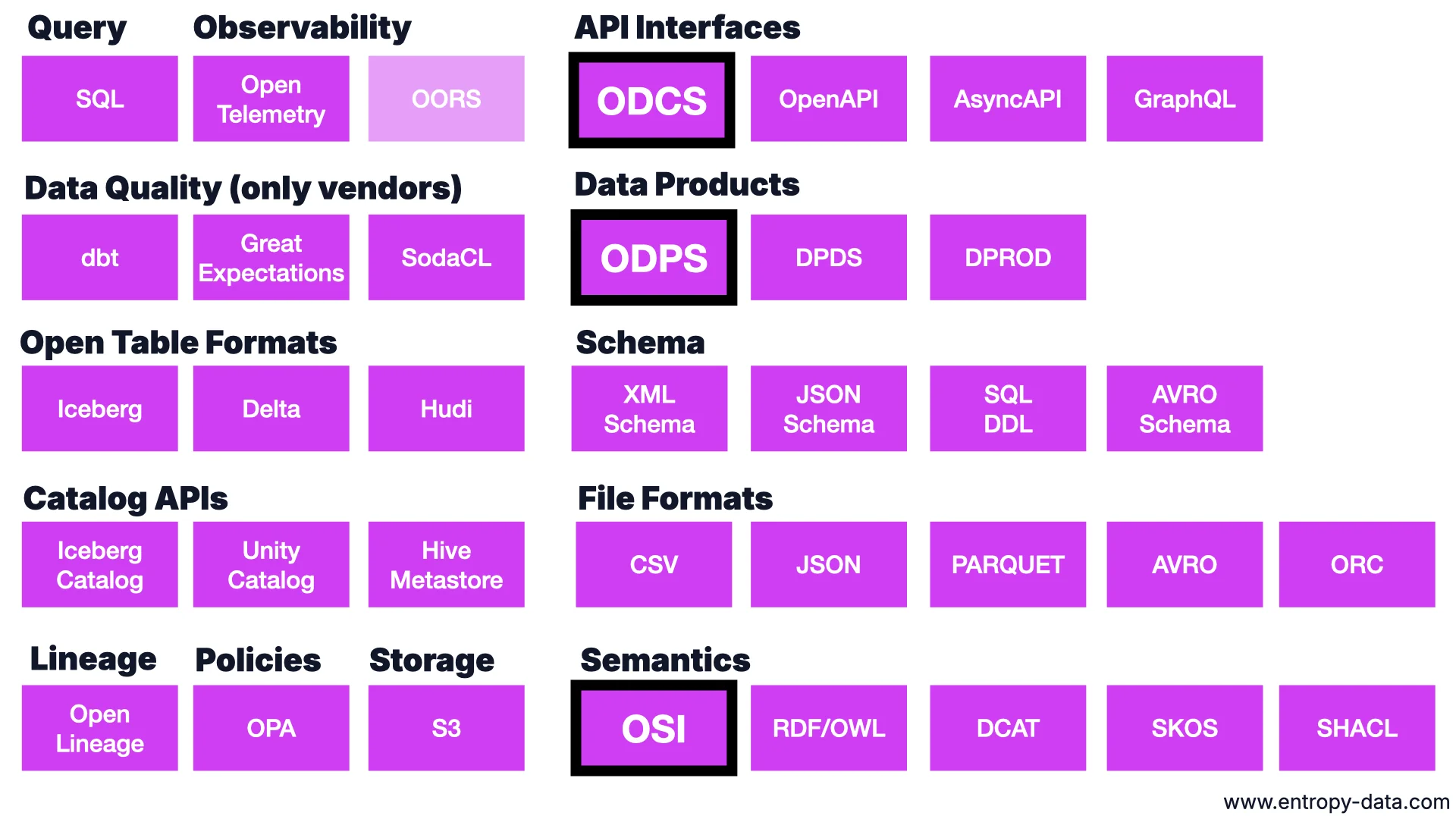

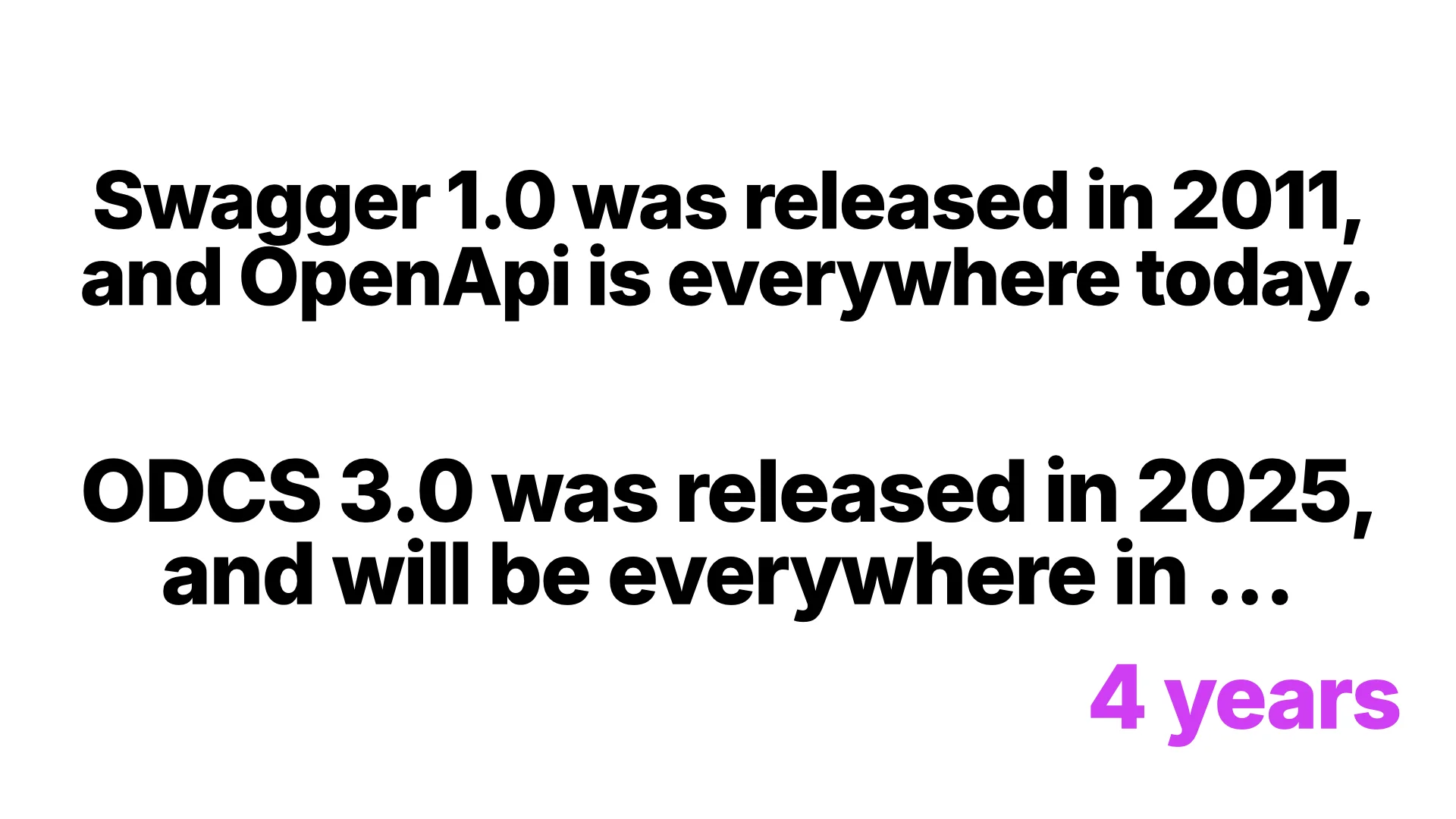

In this talk at Data Mesh Belgium Meetup #7 in Leuven, I walk through the three open standards shaping how we build data mesh today: the Open Data Contract Standard (ODCS), the Open Data Product Standard (ODPS), and the Open Semantic Interchange (OSI). I show how they fit together, where the tooling is going, and why one standard will be everywhere in four years.

Thanks to Tom De Wolf (ACA) and Emma Houben (AE) for the invitation and for hosting the meetup, and to the BITOL community for the open standards we build together.

Q&A

Selected questions from the audience after the talk.

Q: If you have many data contracts coming from the same source, and you have to maintain and modify the same quality checks across all of them, how do you handle that?

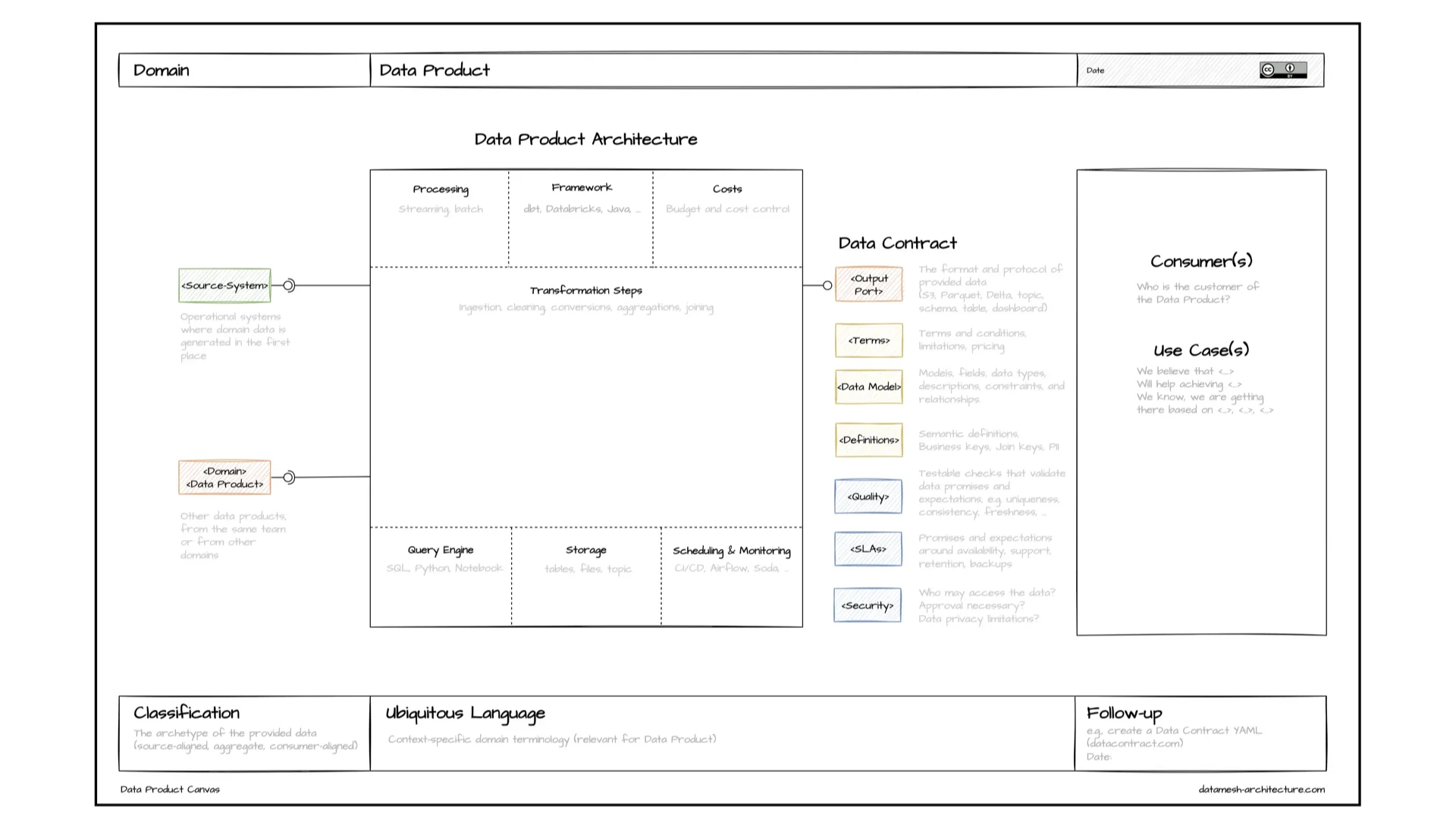

The best approach is to push the quality check upstream -- as close to the source system as possible -- so you catch errors early and do not have to run the same expensive checks repeatedly down the graph. If that is not possible, one answer is AI: with agents like Claude Code it is relatively cheap to keep related checks consistent across contracts, and that is not as cheeky as it sounds. Beyond that, the pattern we typically use is linking from the contract to a semantic model. You define order_id once -- description, type, format ("starts with 053") -- and inherit it everywhere. Bonus: because two contracts point to the same order_id concept, the AI knows those tables can be joined. The columns are the same thing, not merely similar.
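A minimal sketch of the "define once, inherit everywhere" pattern, assuming contracts are simplified Python dicts rather than full ODCS documents. The `semanticRef` key, the `SEMANTIC_MODEL` dict and the helper functions are hypothetical names for illustration, not part of ODCS or OSI. Each column that links to a concept inherits its description, type and pattern, so a single quality rule is checked in every contract, and columns linked to the same concept show up as candidate join keys.

```python
import re

# Hypothetical shared semantic model: each concept is defined exactly once.
SEMANTIC_MODEL = {
    "order_id": {
        "description": "Unique identifier of a customer order.",
        "logicalType": "string",
        "pattern": r"^053",  # "starts with 053", as in the answer above
    },
}

# Simplified contracts (not the full ODCS schema). Columns link to a concept
# through a hypothetical "semanticRef" key.
ORDERS_CONTRACT = {
    "name": "orders",
    "properties": [
        {"name": "order_id", "semanticRef": "order_id"},
        {"name": "amount", "logicalType": "number"},
    ],
}
SHIPMENTS_CONTRACT = {
    "name": "shipments",
    "properties": [
        {"name": "order_ref", "semanticRef": "order_id"},
        {"name": "carrier", "logicalType": "string"},
    ],
}


def resolve(contract: dict) -> dict:
    """Copy the contract; linked columns inherit the concept's fields (local fields win)."""
    resolved = []
    for prop in contract["properties"]:
        concept = SEMANTIC_MODEL.get(prop.get("semanticRef", ""), {})
        resolved.append({**concept, **prop})
    return {**contract, "properties": resolved}


def check(contract: dict, rows: list[dict]) -> list[str]:
    """Apply every inherited pattern to the rows and return violation messages."""
    errors = []
    for prop in resolve(contract)["properties"]:
        pattern = prop.get("pattern")
        if pattern is None:
            continue
        for row in rows:
            value = str(row.get(prop["name"], ""))
            if not re.match(pattern, value):
                errors.append(f"{contract['name']}.{prop['name']}: {value!r}")
    return errors


def join_keys(a: dict, b: dict) -> list[tuple[str, str]]:
    """Column pairs that reference the same concept, i.e. candidate join keys."""
    refs_b = {p["semanticRef"]: p["name"] for p in b["properties"] if "semanticRef" in p}
    return [
        (p["name"], refs_b[p["semanticRef"]])
        for p in a["properties"]
        if p.get("semanticRef") in refs_b
    ]
```

Changing the pattern in `SEMANTIC_MODEL` changes the check for every linked contract at once, which is the maintenance win the answer describes; `join_keys(ORDERS_CONTRACT, SHIPMENTS_CONTRACT)` pairs `order_id` with `order_ref` even though the column names differ.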

Q: How does OSI relate to DCAT?

Today they are not related. DCAT lives in the RDF / semantic-web world as a catalog vocabulary, inspired by libraries and dataset offerings. There is a working group in OSI to add semantics -- concepts and properties, essentially a graph encoded in YAML -- which will bring the two closer, but I don't think they will ever fit perfectly. DCAT composes naturally in RDF with other vocabularies (DPROD from OMG, for example). YAML formats like OSI are stricter and do not compose as freely. The format choice has consequences.

Q: You mentioned automating transformations using the data contract. Can you give an example? My understanding is that the contract does not capture transformation logic.

Correct -- the contract is targeted at the consumer, not the implementation. It captures a few transformation hints (where a column comes from, a short description), but it is deliberately limited. You can always use ODCS's custom properties to encode what you need. What we see working very well in practice: hand Claude Code the input and output contracts plus a skill like "this is how we typically build a Databricks data product" and let it fill in the blanks. Add semantic links pointing to the same concepts across contracts, and the AI figures out the joins as well. For actual runtime lineage details -- how the pipeline really works -- use OpenLineage traces. Those capture column-level lineage; the contract does not.
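To make the split between contract hints and runtime lineage concrete, here is a minimal sketch that compares the two. It assumes the output contract is a simplified dict whose custom properties follow ODCS's list-of-`property`/`value` shape, and uses a hypothetical `sourceColumn` property as the transformation hint (that key is an illustrative convention, not part of the standard). The run event is a trimmed OpenLineage event whose `columnLineage` dataset facet maps each output field to its `inputFields`. The helper names are hypothetical.

```python
# Simplified output contract. "sourceColumn" is a hypothetical custom property.
OUTPUT_CONTRACT = {
    "name": "orders_enriched",
    "properties": [
        {"name": "order_id",
         "customProperties": [{"property": "sourceColumn", "value": "raw.orders.id"}]},
        {"name": "carrier",
         "customProperties": [{"property": "sourceColumn", "value": "raw.shipments.carrier"}]},
    ],
}

# Trimmed OpenLineage run event with a columnLineage facet on the output dataset.
RUN_EVENT = {
    "eventType": "COMPLETE",
    "outputs": [{
        "namespace": "warehouse",
        "name": "orders_enriched",
        "facets": {"columnLineage": {"fields": {
            "order_id": {"inputFields": [
                {"namespace": "warehouse", "name": "raw.orders", "field": "id"}]},
            "carrier": {"inputFields": [
                {"namespace": "warehouse", "name": "raw.orders", "field": "carrier_code"}]},
        }}},
    }],
}


def declared_sources(contract: dict) -> dict[str, str]:
    """Column -> source hint, read from the hypothetical sourceColumn custom property."""
    hints = {}
    for prop in contract["properties"]:
        for custom in prop.get("customProperties", []):
            if custom["property"] == "sourceColumn":
                hints[prop["name"]] = custom["value"]
    return hints


def observed_sources(event: dict, dataset: str) -> dict[str, set[str]]:
    """Column -> set of 'dataset.field' strings taken from the columnLineage facet."""
    observed = {}
    for output in event.get("outputs", []):
        if output["name"] != dataset:
            continue
        fields = output.get("facets", {}).get("columnLineage", {}).get("fields", {})
        for column, lineage in fields.items():
            observed[column] = {f"{i['name']}.{i['field']}" for i in lineage.get("inputFields", [])}
    return observed


def drift(contract: dict, event: dict) -> list[str]:
    """Columns whose contract hint is not among the sources observed at runtime."""
    observed = observed_sources(event, contract["name"])
    return sorted(
        column
        for column, source in declared_sources(contract).items()
        if source not in observed.get(column, set())
    )
```

Here `drift(OUTPUT_CONTRACT, RUN_EVENT)` flags `carrier`, because the contract claims it comes from `raw.shipments` while the lineage trace shows `raw.orders.carrier_code`. The contract stays a consumer-facing description; the trace remains the source of truth for how the pipeline actually ran.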