Talk

Data Contracts: What They Are, Why They Matter, and How to Use Them

Jochen Christ, Co-Founder & CTO @ Entropy Data · March 12, 2026

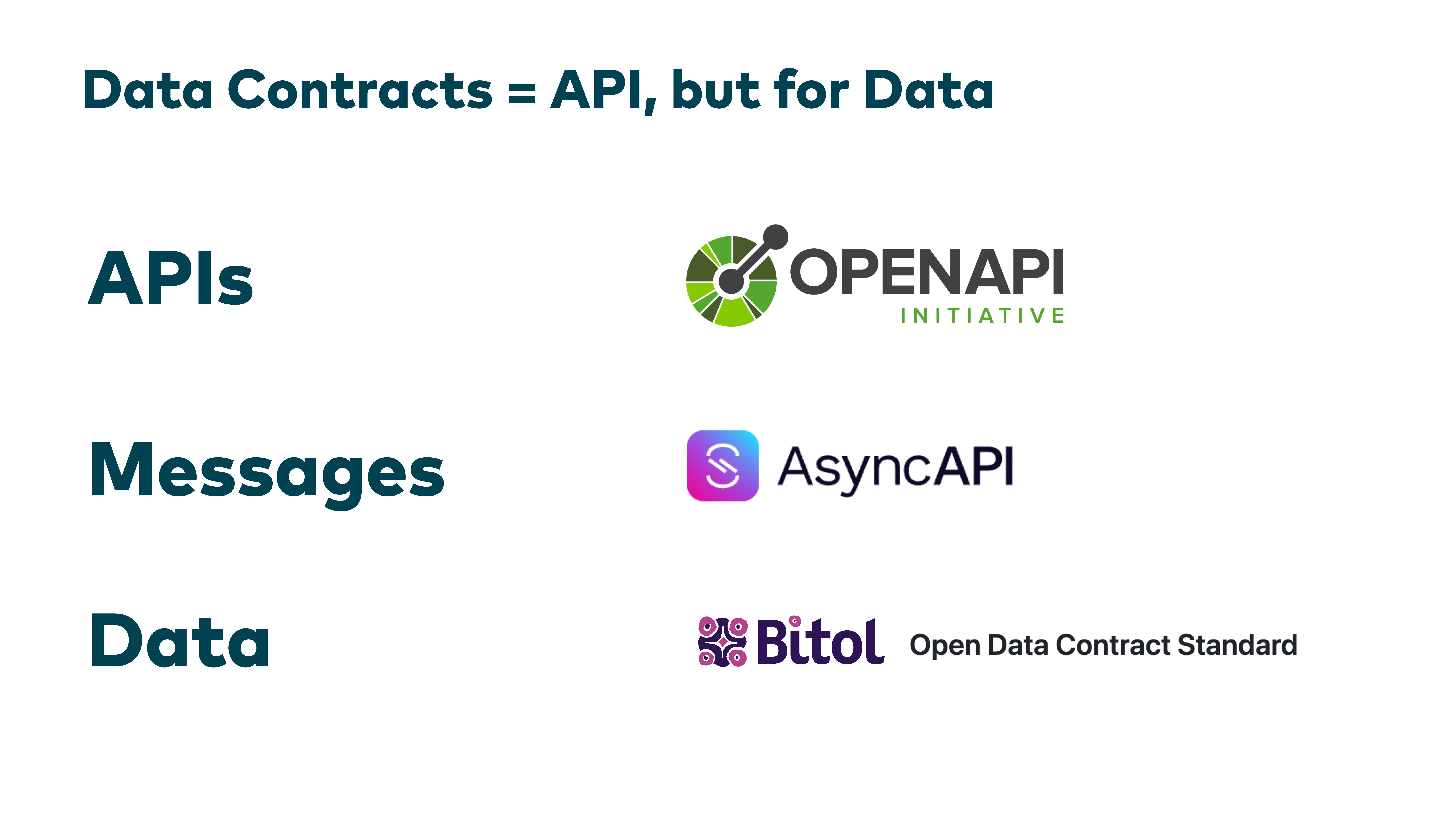

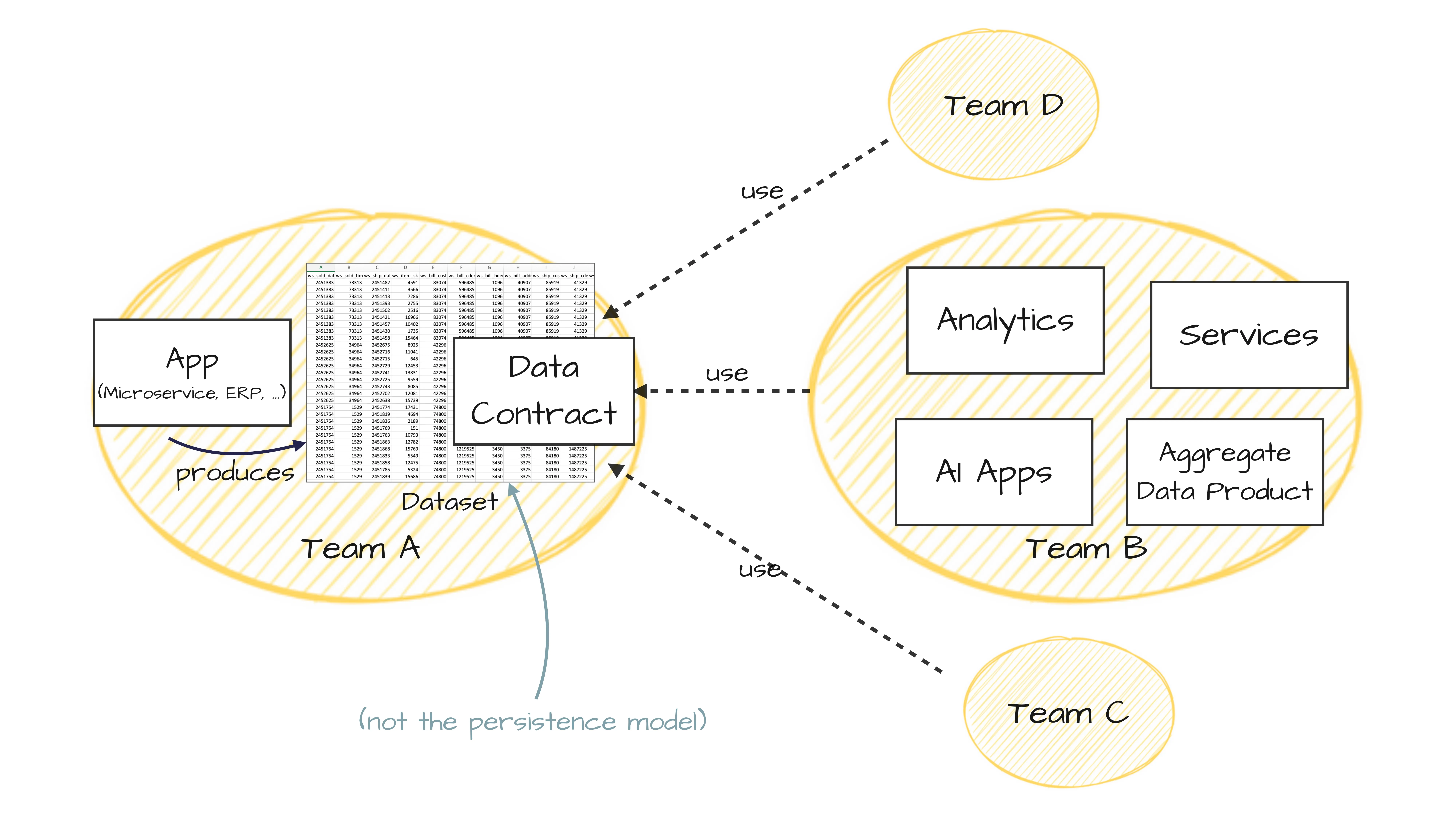

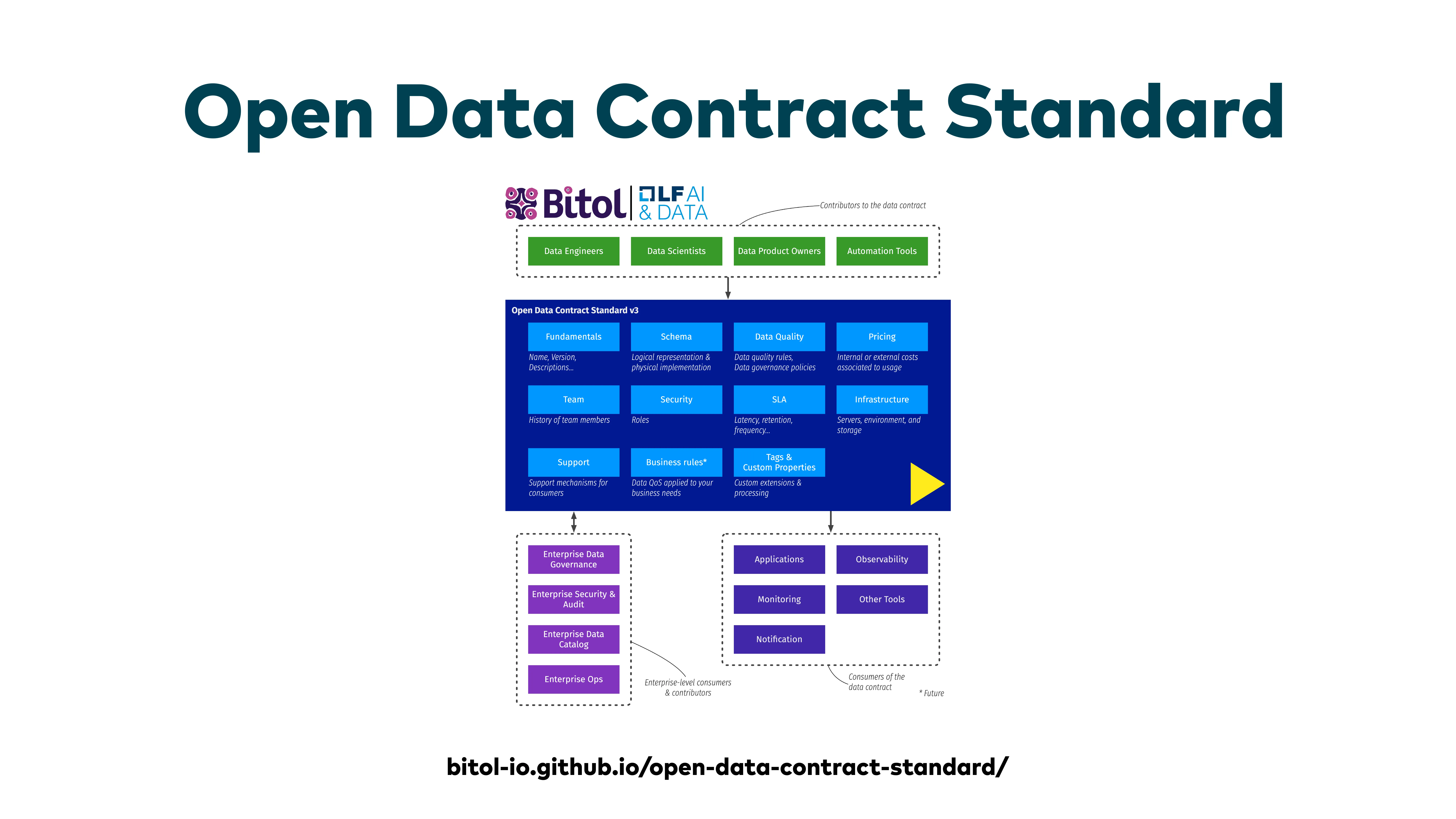

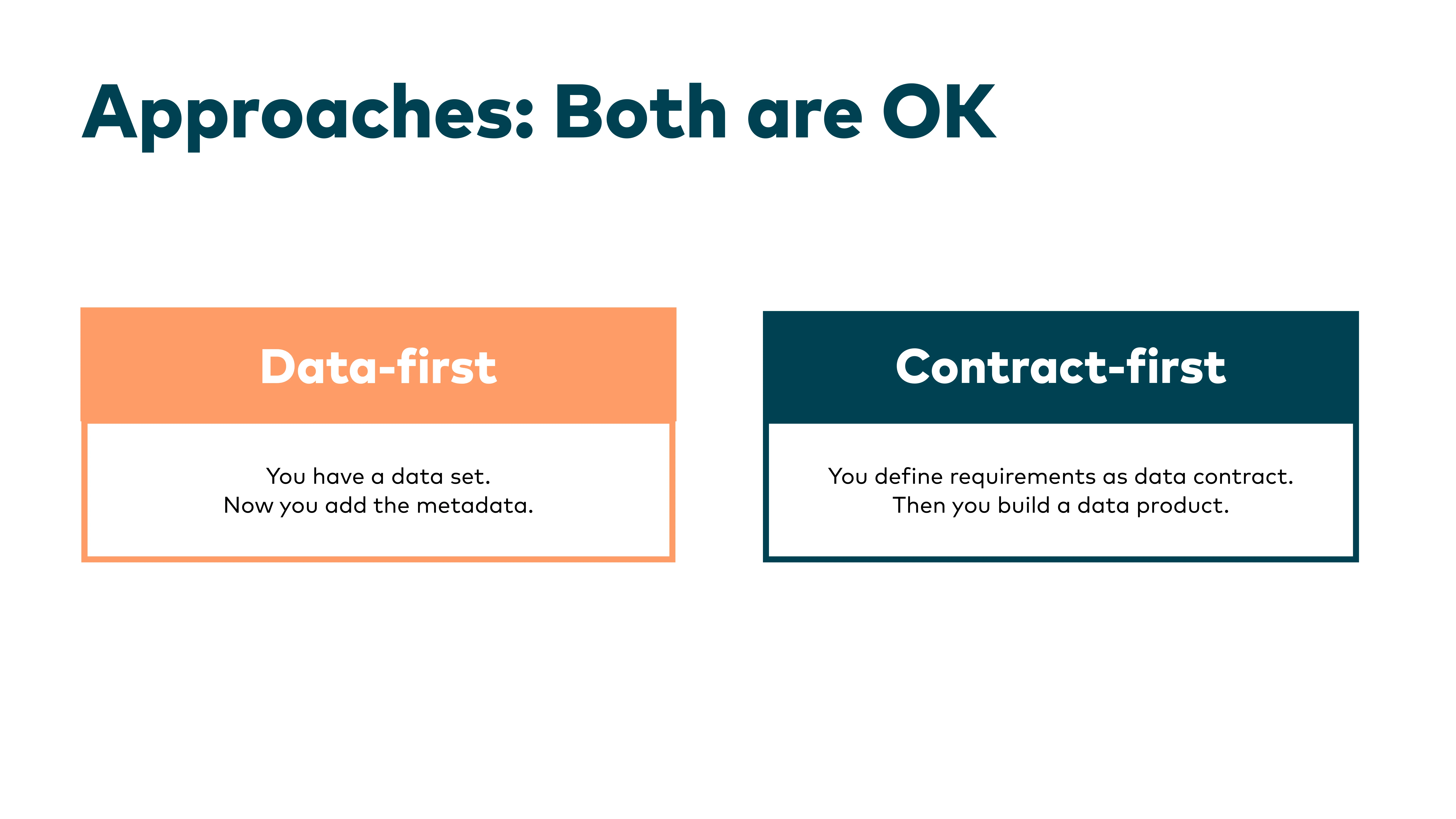

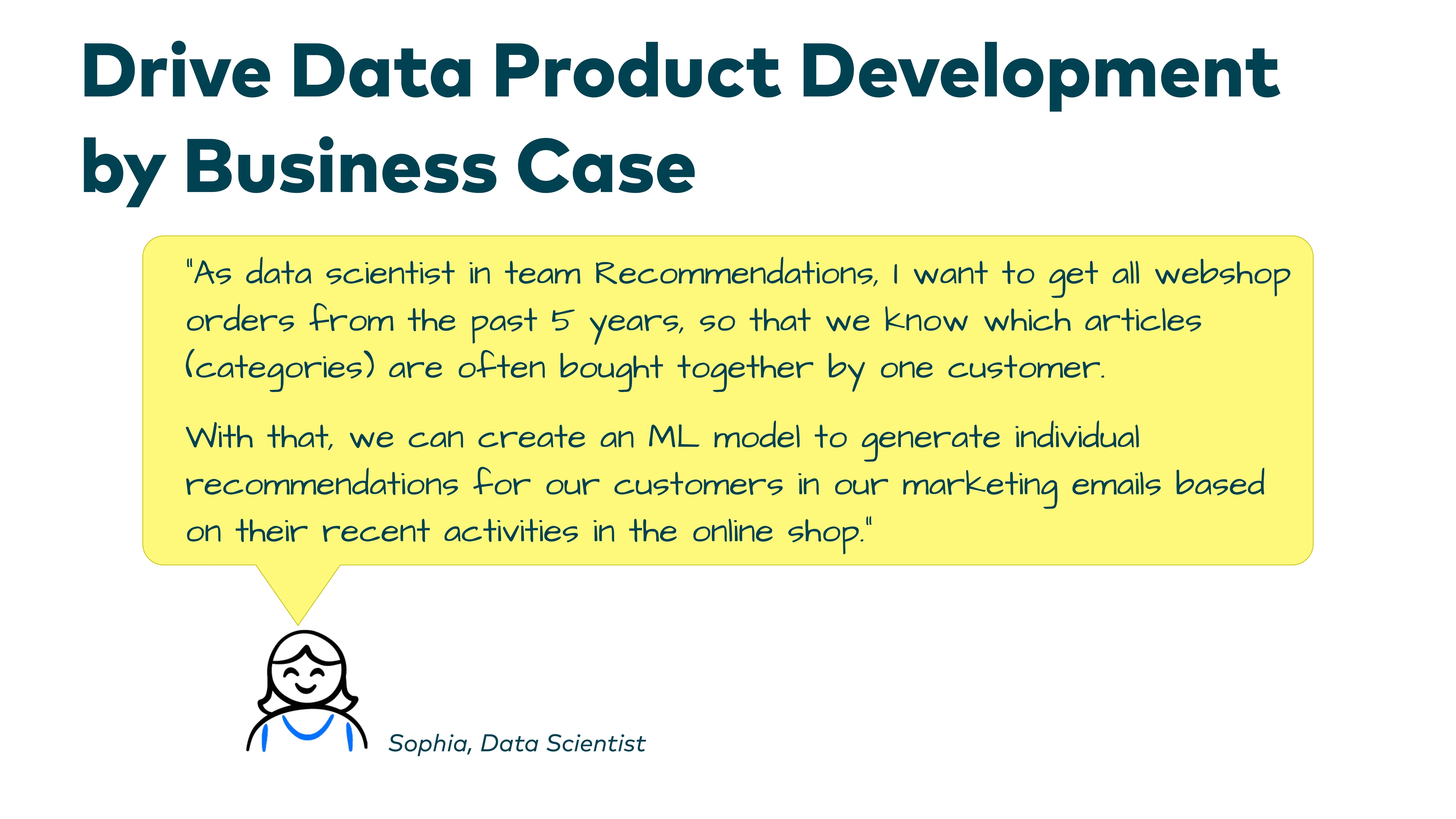

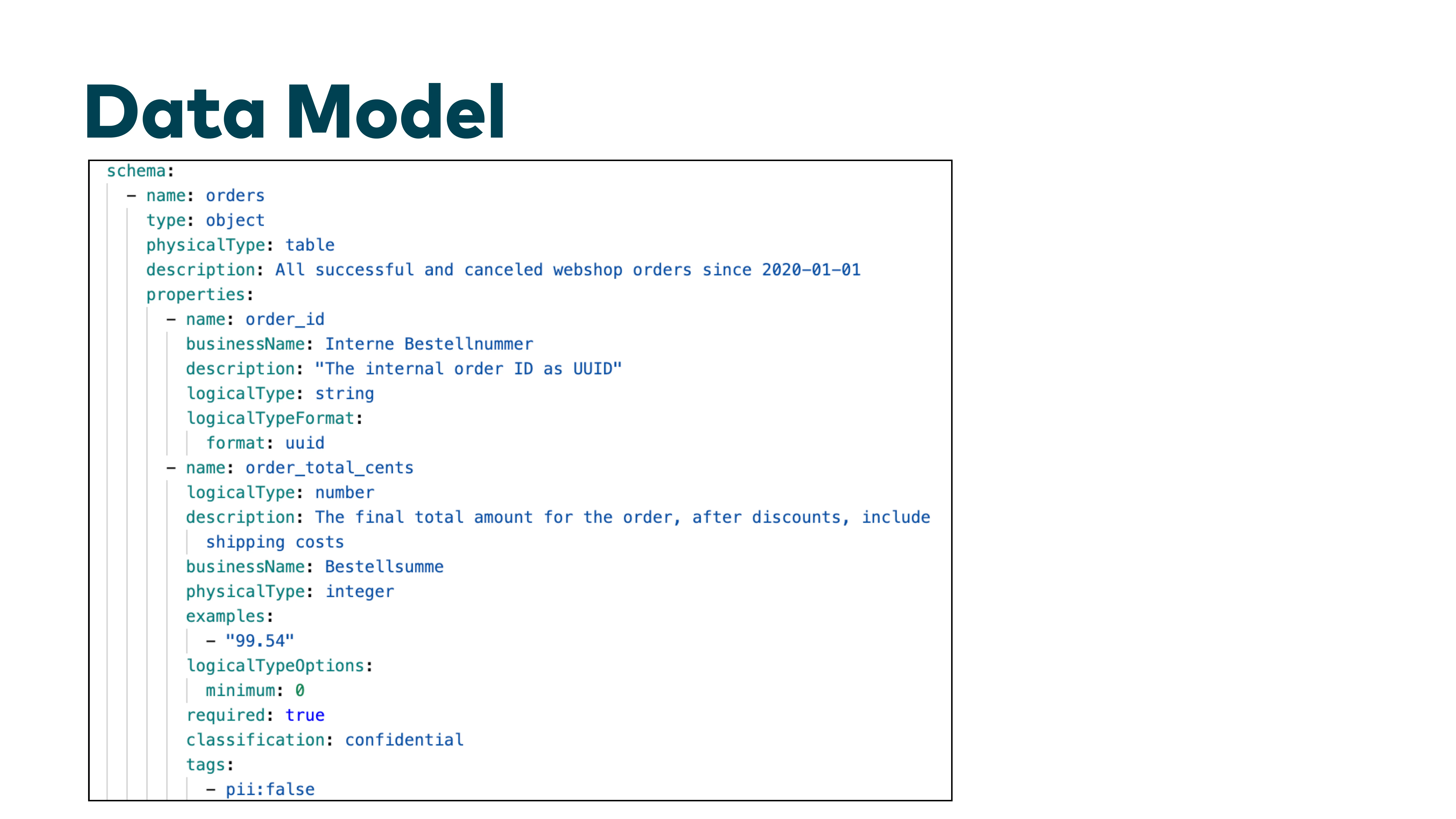

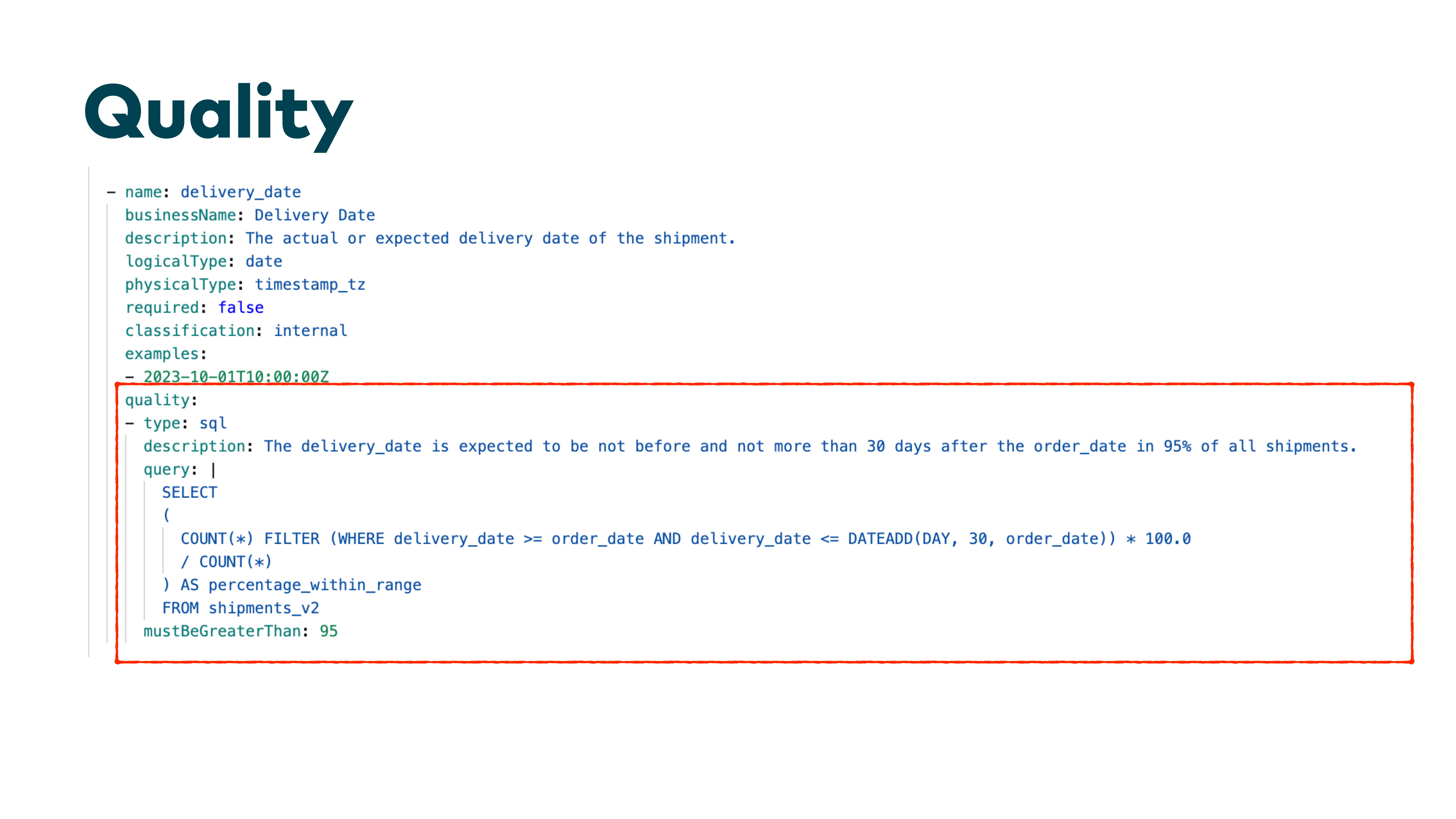

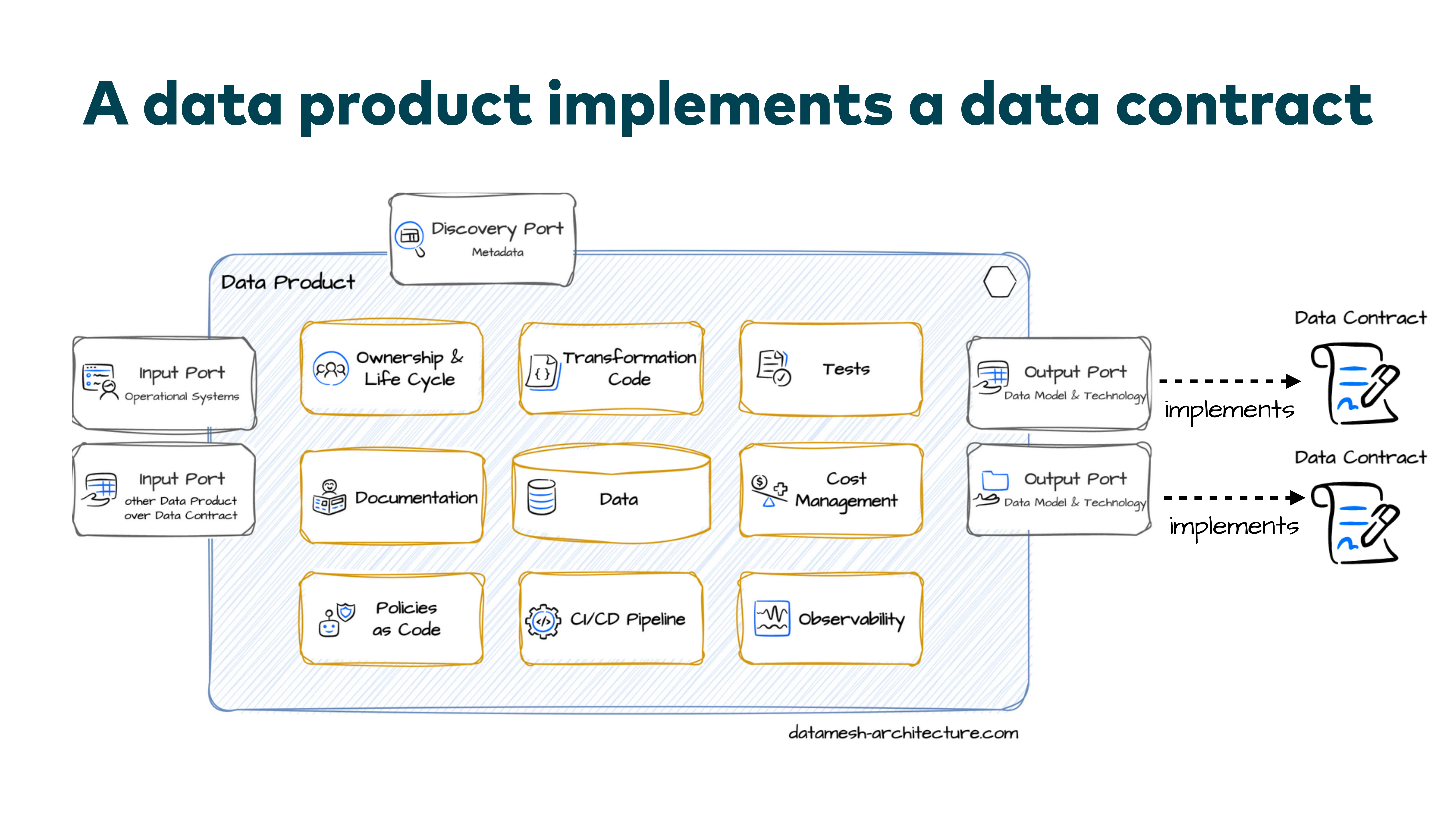

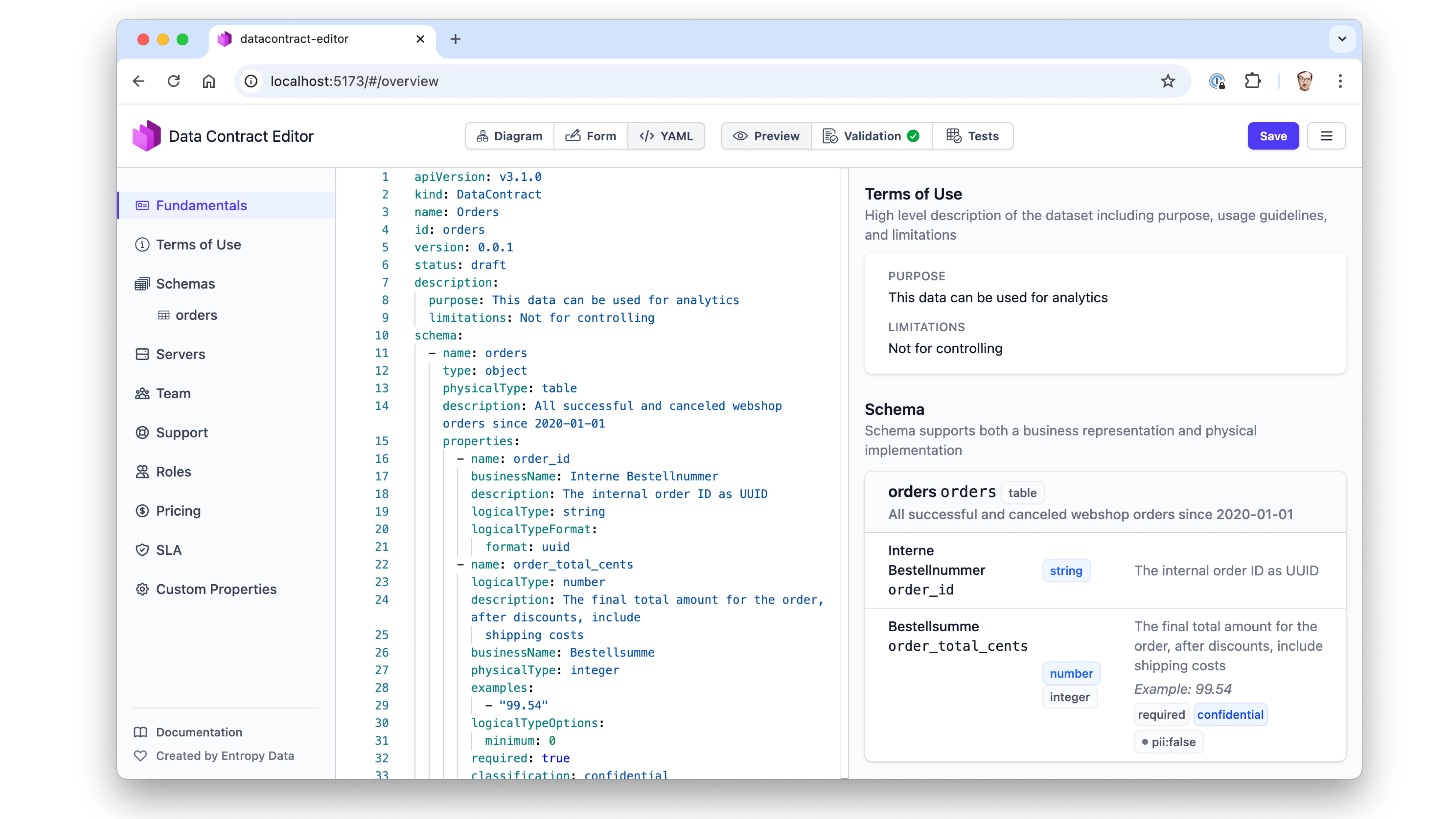

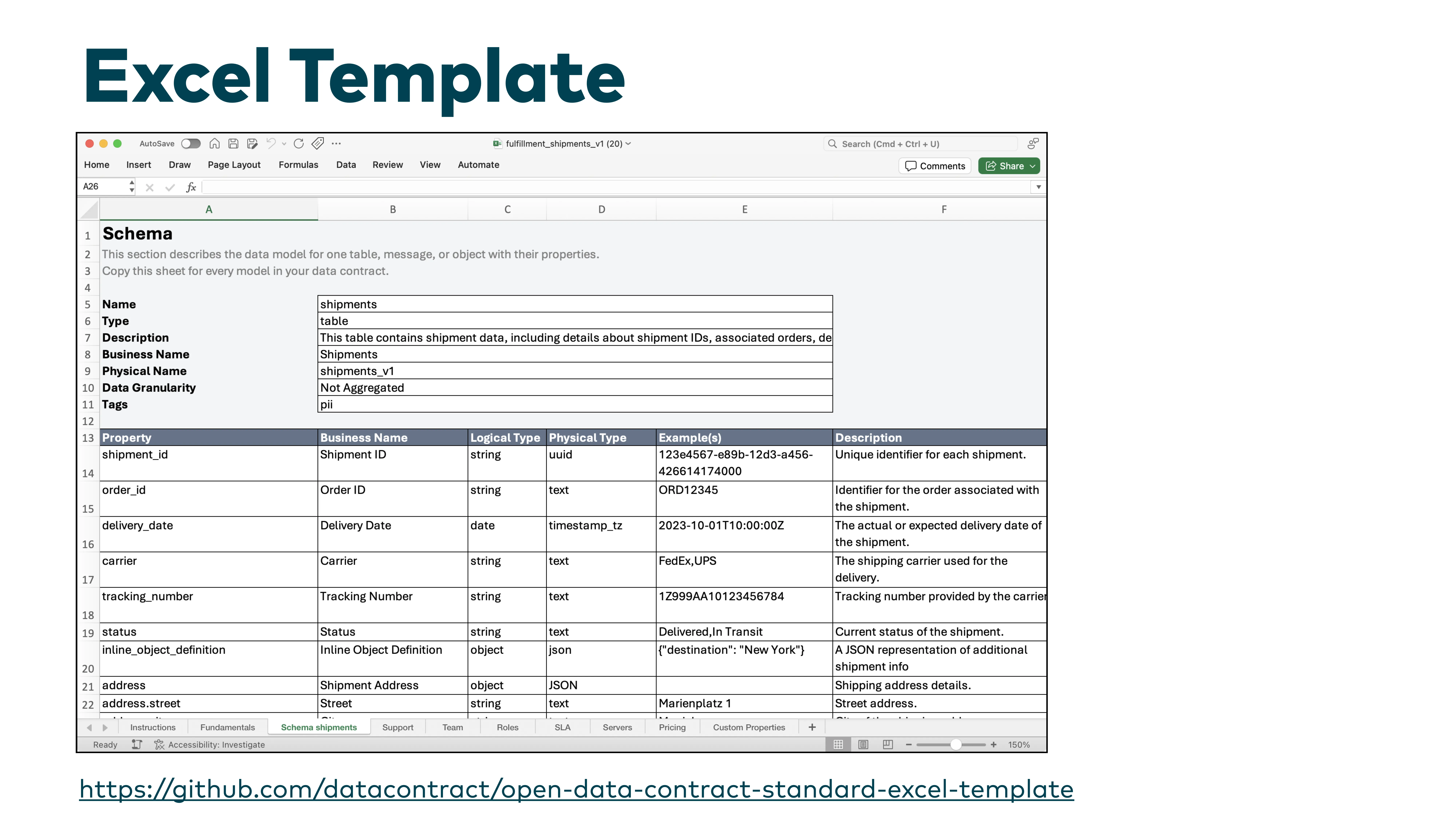

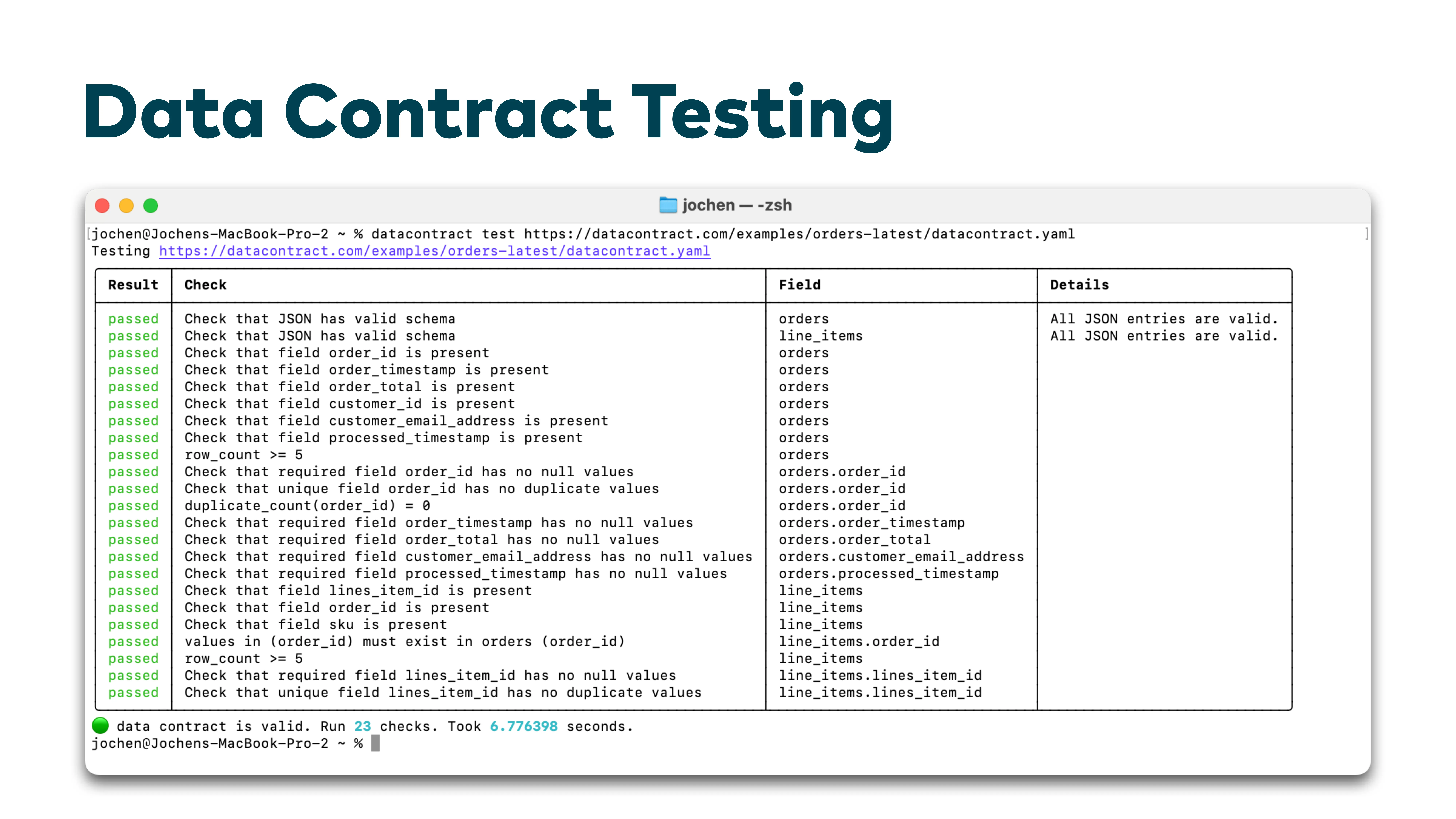

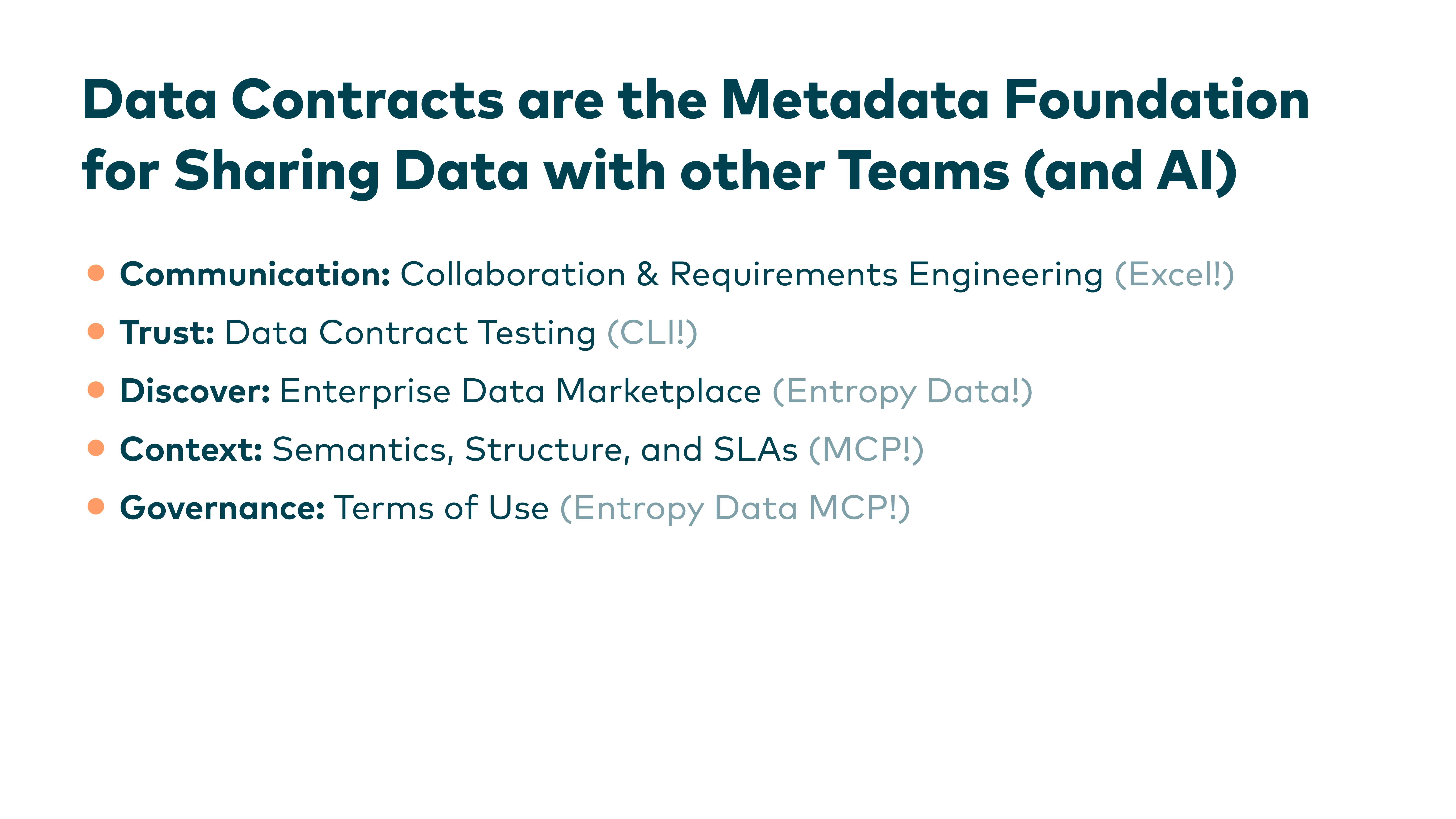

In this talk at the TDWI Roundtable Münster, I explain what data contracts are, how they compare to API specifications in the software world, and why they have become essential for managing data across teams. We walk through a concrete example using the Open Data Contract Standard (ODCS), discuss contract-first vs. data-first approaches, explore tooling like the Data Contract CLI and Data Contract Editor, and look at how data contracts become the governance layer for AI agents accessing enterprise data.

Note: The talk was delivered in German. The transcript below is an English translation. Transcribed and summarized using AI.

Thanks to TDWI for organizing the Roundtable Münster and to Bodo Hüsemann for hosting it at x1F.

Q&A

Selected questions from the audience during the talk.

Q: Can I offer the same dataset at different granularities, e.g., aggregated vs. raw? How do I handle variants?

Conceptually, those are different data contracts. Each variant (different granularity, different format) gets its own contract. You can link them or tag them as related, but logically they are separate interfaces with separate guarantees.

Q: Can I use data contracts for operational data exchange between microservices, not just analytical data?

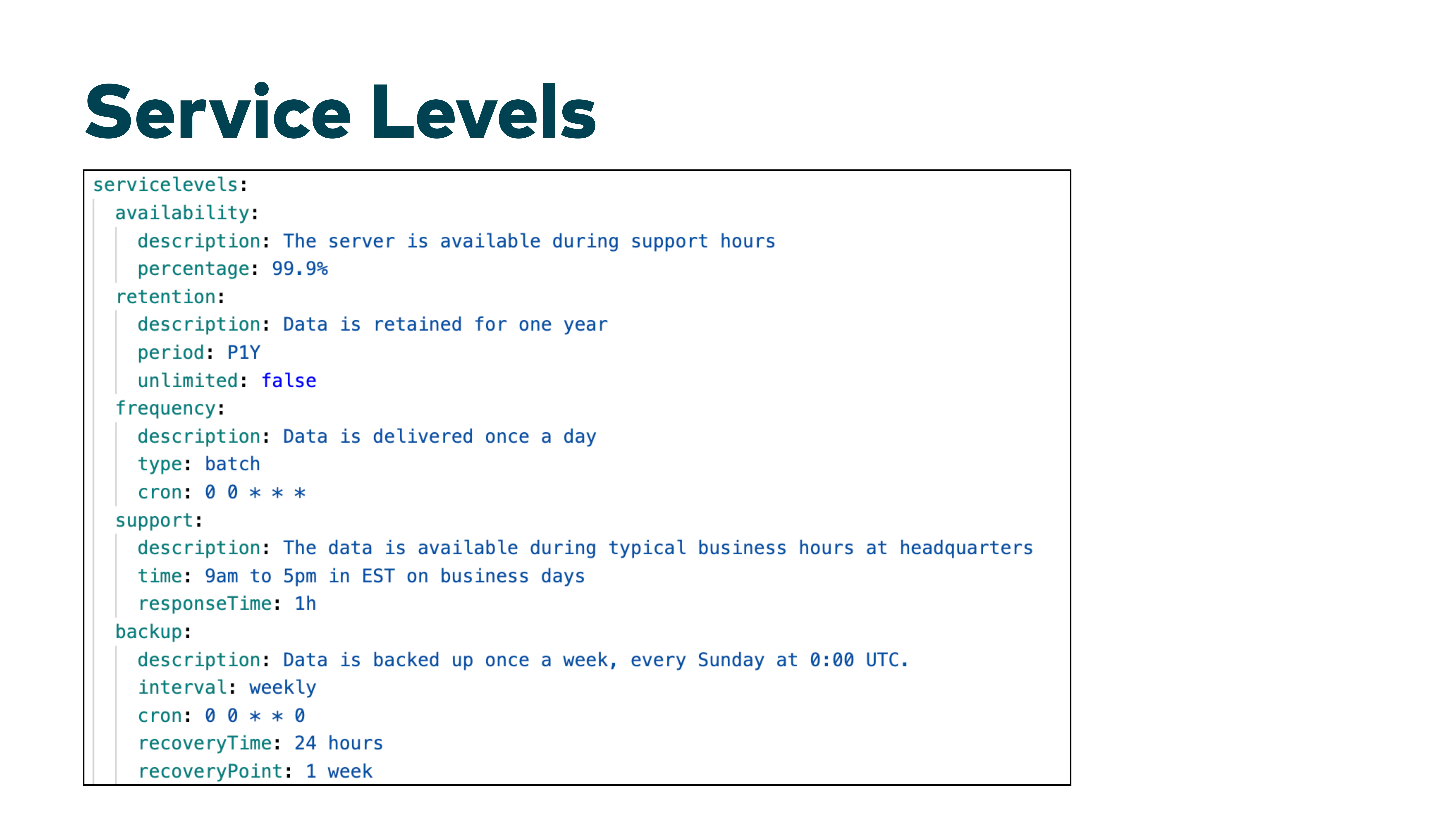

Yes. Define the non-functional guarantees (availability, latency, support hours) in the contract. The consumer can then decide whether those guarantees are sufficient for their operational use case. A web shop would never depend on a system with 99.8% availability without adding an anti-corruption layer or asynchronous decoupling.
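The consumer-side decision can be sketched in a few lines of Python. This is only an illustration: the `ServiceLevels` class, its field names, and all numbers except the 99.8% figure are assumptions made for the example, not part of ODCS or any tool mentioned in the talk. Support hours are left out to keep the sketch short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceLevels:
    """Hypothetical, simplified view of the guarantees stated in a contract."""
    availability_pct: float  # e.g. 99.8 means 99.8% availability
    max_latency_ms: int      # upper bound the provider commits to


def unmet_requirements(offered: ServiceLevels, required: ServiceLevels) -> list[str]:
    """Return one message per consumer requirement the contract does not meet."""
    problems = []
    if offered.availability_pct < required.availability_pct:
        problems.append(
            f"availability {offered.availability_pct}% "
            f"is below the required {required.availability_pct}%"
        )
    if offered.max_latency_ms > required.max_latency_ms:
        problems.append(
            f"latency bound {offered.max_latency_ms} ms "
            f"exceeds the required {required.max_latency_ms} ms"
        )
    return problems


# Guarantees offered by the contract (99.8% is the figure from the answer above;
# the latency bound is an assumed value).
contract = ServiceLevels(availability_pct=99.8, max_latency_ms=500)

# Two hypothetical consumers with different needs.
web_shop = ServiceLevels(availability_pct=99.95, max_latency_ms=200)
reporting = ServiceLevels(availability_pct=99.0, max_latency_ms=5000)

for name, needs in [("web shop", web_shop), ("reporting", reporting)]:
    gaps = unmet_requirements(contract, needs)
    print(name, "-> OK" if not gaps else f"-> needs decoupling: {gaps}")
```

A consumer whose list of gaps is non-empty would either pick another source or add the decoupling mentioned above.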

Q: How do you formalize terms of use for AI agents? Natural language seems too vague for automated enforcement.

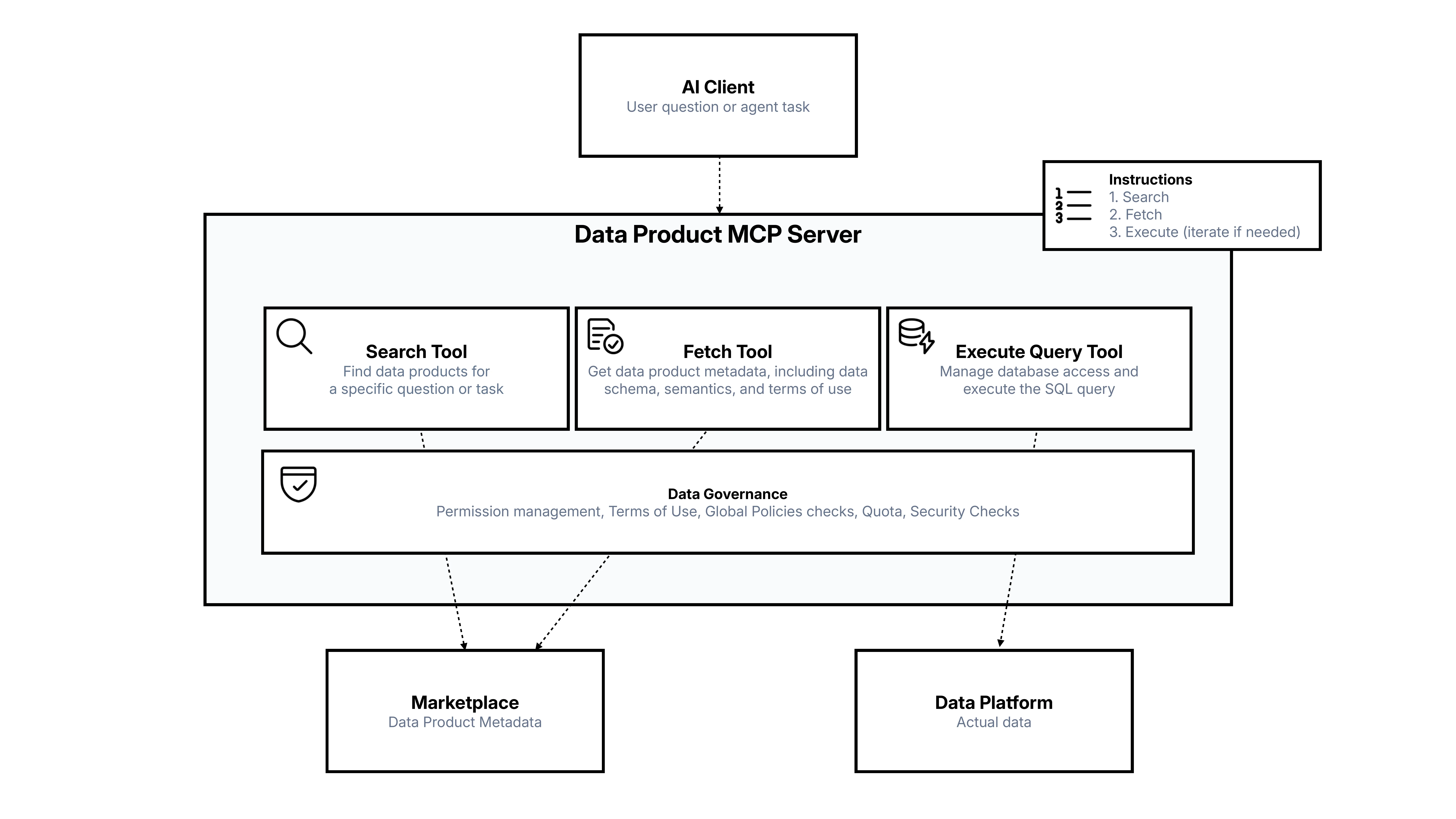

Our approach is to keep terms of use in natural language and have a specialized agent interpret them against the query and the prompt. An AI system can evaluate whether "this data may not be used for marketing purposes" applies to a given query. The governance layer (the MCP server implementation) enforces global policies, quotas, and security checks before letting SQL through to the data platform.
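The order of checks in such a governance layer might look roughly like the following Python sketch. It is not the MCP server implementation described in the talk: `Gate`, `Request`, and `keyword_terms_check` are hypothetical names, the "SELECT only" rule is an assumed example of a global policy, and the keyword match merely stands in for the specialized agent that would interpret the natural-language terms.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Request:
    agent_id: str
    purpose: str  # what the agent says it wants the data for (from the prompt)
    sql: str


def keyword_terms_check(terms: str, req: Request) -> bool:
    """Placeholder for the terms-of-use agent: a naive keyword match only."""
    forbids_marketing = "marketing" in terms.lower()
    return not (forbids_marketing and "marketing" in req.purpose.lower())


@dataclass
class Gate:
    terms_of_use: str
    terms_check: Callable[[str, Request], bool]
    quota_per_agent: int
    used: dict = field(default_factory=dict)  # agent_id -> queries let through

    def authorize(self, req: Request) -> tuple[bool, str]:
        # 1. Global policy (assumed example): read-only access.
        if not req.sql.lstrip().lower().startswith("select"):
            return False, "global policy: only SELECT statements are allowed"
        # 2. Quota per agent.
        if self.used.get(req.agent_id, 0) >= self.quota_per_agent:
            return False, "quota exceeded"
        # 3. Terms of use, interpreted against the stated purpose.
        if not self.terms_check(self.terms_of_use, req):
            return False, "terms of use do not permit this purpose"
        self.used[req.agent_id] = self.used.get(req.agent_id, 0) + 1
        return True, "ok"


gate = Gate(
    terms_of_use="This data may not be used for marketing purposes.",
    terms_check=keyword_terms_check,
    quota_per_agent=2,
)

print(gate.authorize(Request("agent-1", "churn analysis", "SELECT * FROM orders")))
print(gate.authorize(Request("agent-1", "marketing campaign", "SELECT * FROM orders")))
print(gate.authorize(Request("agent-1", "cleanup", "DELETE FROM orders")))
```

Only requests that pass all three checks would be forwarded to the data platform; in a real setup the `terms_check` callable would be the place to plug in a model-based interpreter.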

Q: When defining quality requirements, how often do you discover that you need to capture additional information in the schema?

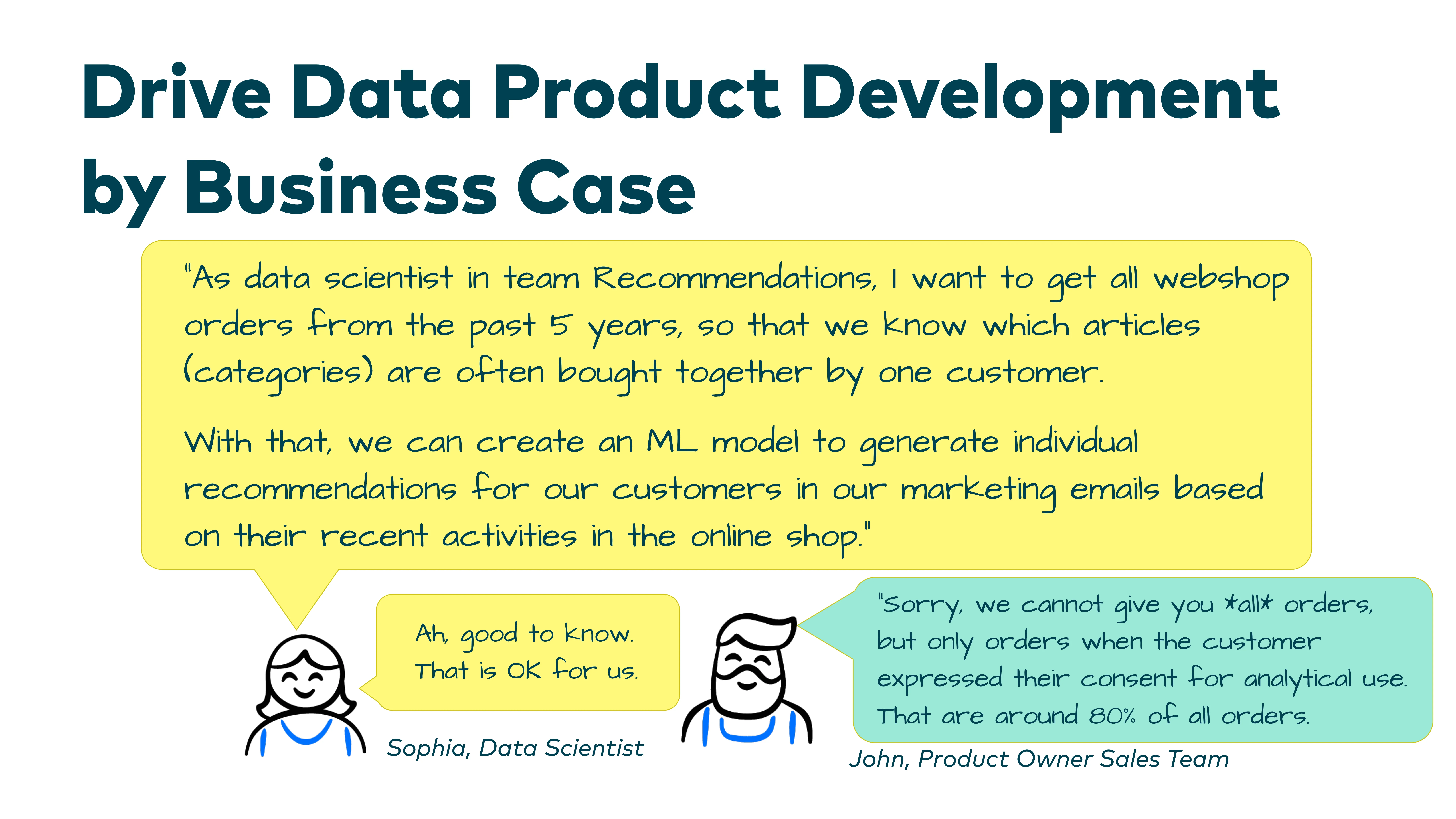

Quite often. When you discuss data models with domain experts, things surface that were hidden. Classic example: status fields where you thought there were only three or four states, but suddenly there is a "partially delivered" state, or error states that break all your assumptions. Status fields and consent filters are particularly tricky. That is exactly why the workshop process is so valuable: it forces these discoveries.
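A quick way to surface such surprises before the workshop is to compare the values actually present in the data with the states everyone assumes exist. The sketch below uses plain Python; the `orders` rows, the field name `status`, and the assumed set of states are invented for illustration.

```python
from collections import Counter


def unexpected_values(rows: list[dict], field_name: str, allowed: set) -> dict:
    """Count values of `field_name` that are not in the assumed set of states."""
    counts = Counter(
        row.get(field_name) for row in rows if row.get(field_name) not in allowed
    )
    return dict(counts)


# The states the team believes exist (assumption for this example).
assumed_states = {"open", "shipped", "delivered"}

# Hypothetical sample of order records.
orders = [
    {"order_id": 1, "status": "open"},
    {"order_id": 2, "status": "shipped"},
    {"order_id": 3, "status": "partially delivered"},
    {"order_id": 4, "status": "delivered"},
    {"order_id": 5, "status": "partially delivered"},
]

print(unexpected_values(orders, "status", assumed_states))
```

Any value reported here is a candidate for discussion with the domain experts: it either becomes a documented state in the schema or a data quality issue to fix at the source.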