
Let's Talk About Data Contracts: Standards, Tooling & Best Practices

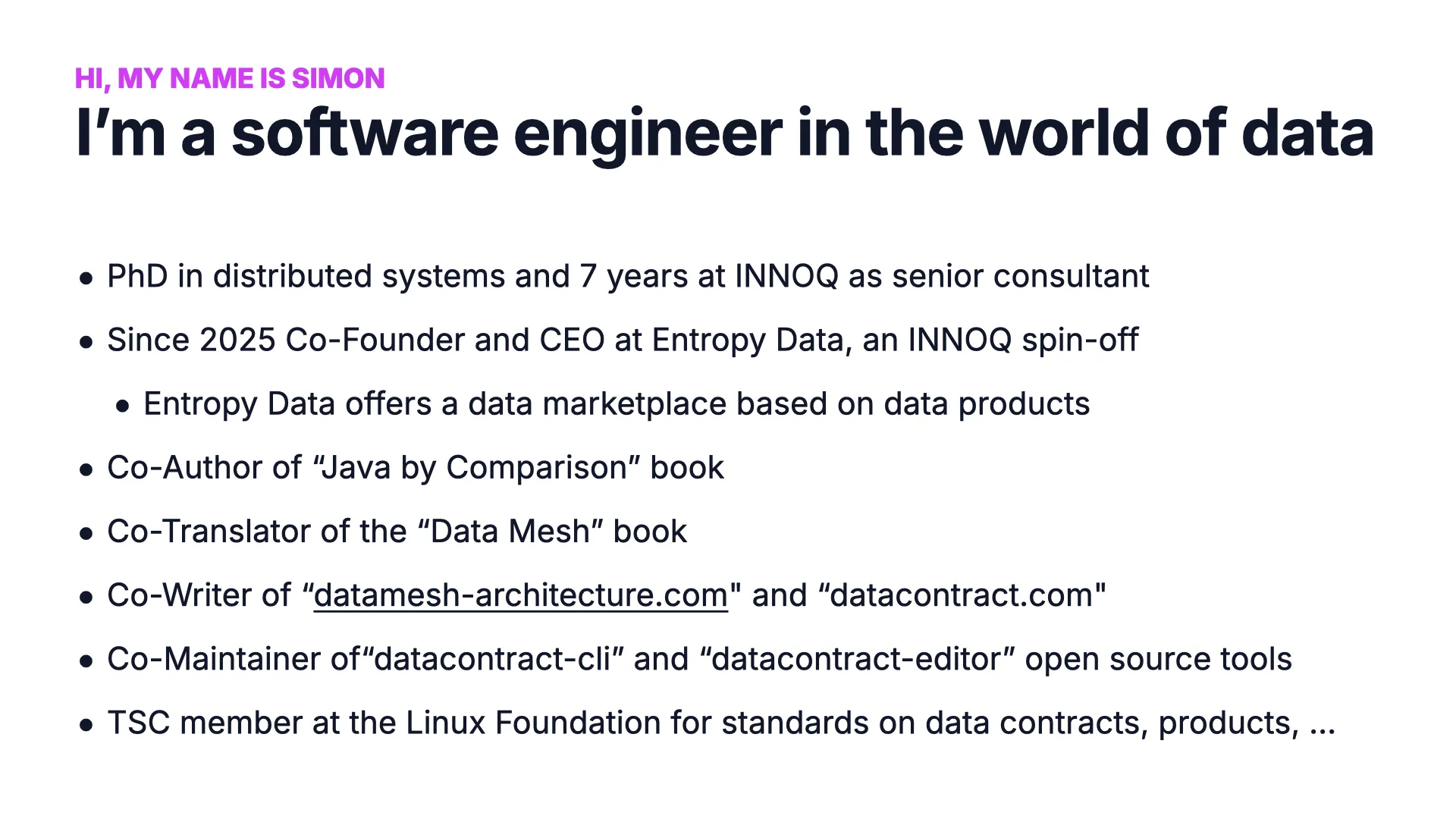

Dr. Simon Harrer, Co-Founder & CEO @ Entropy Data · March 19, 2026

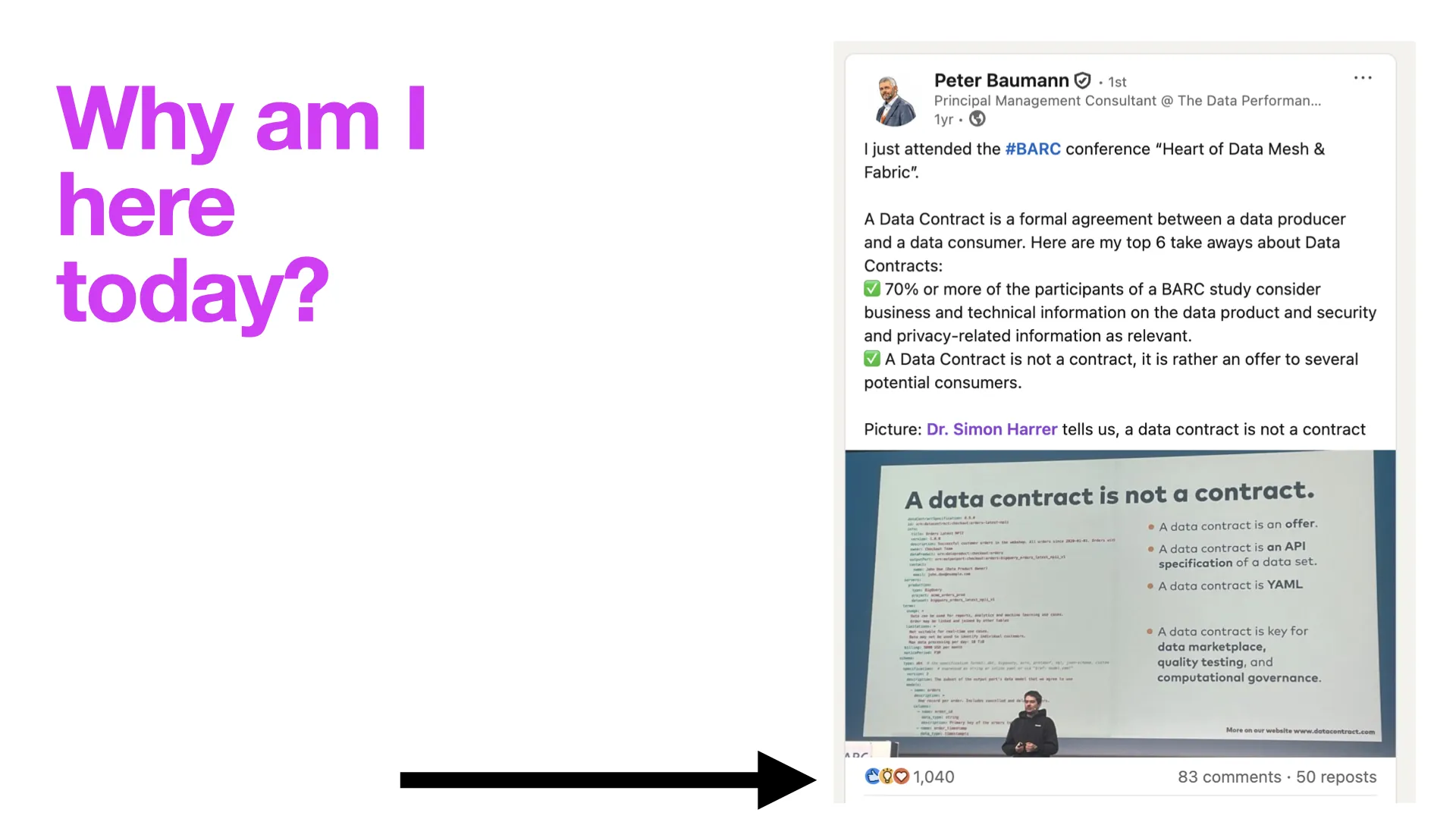

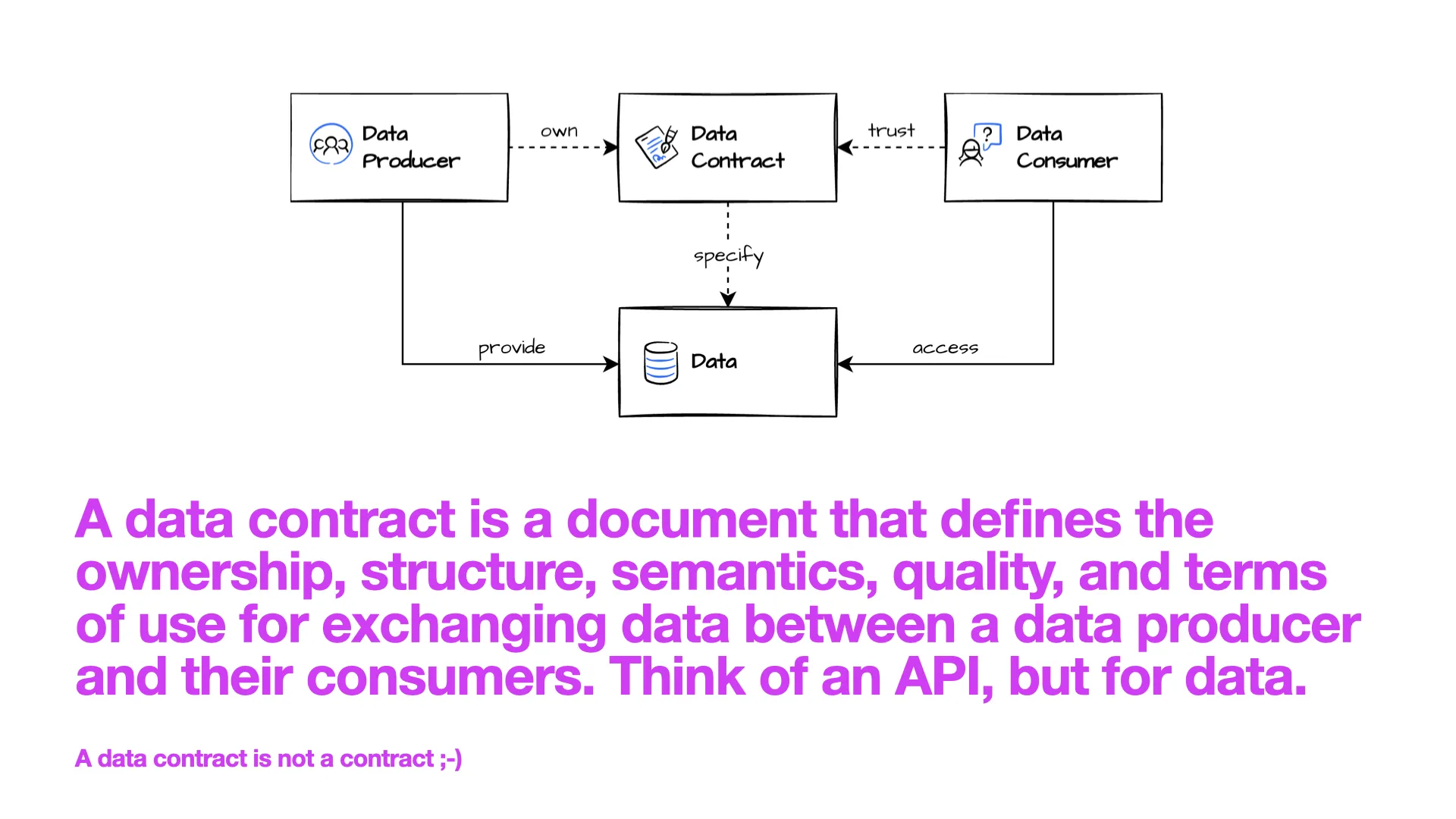

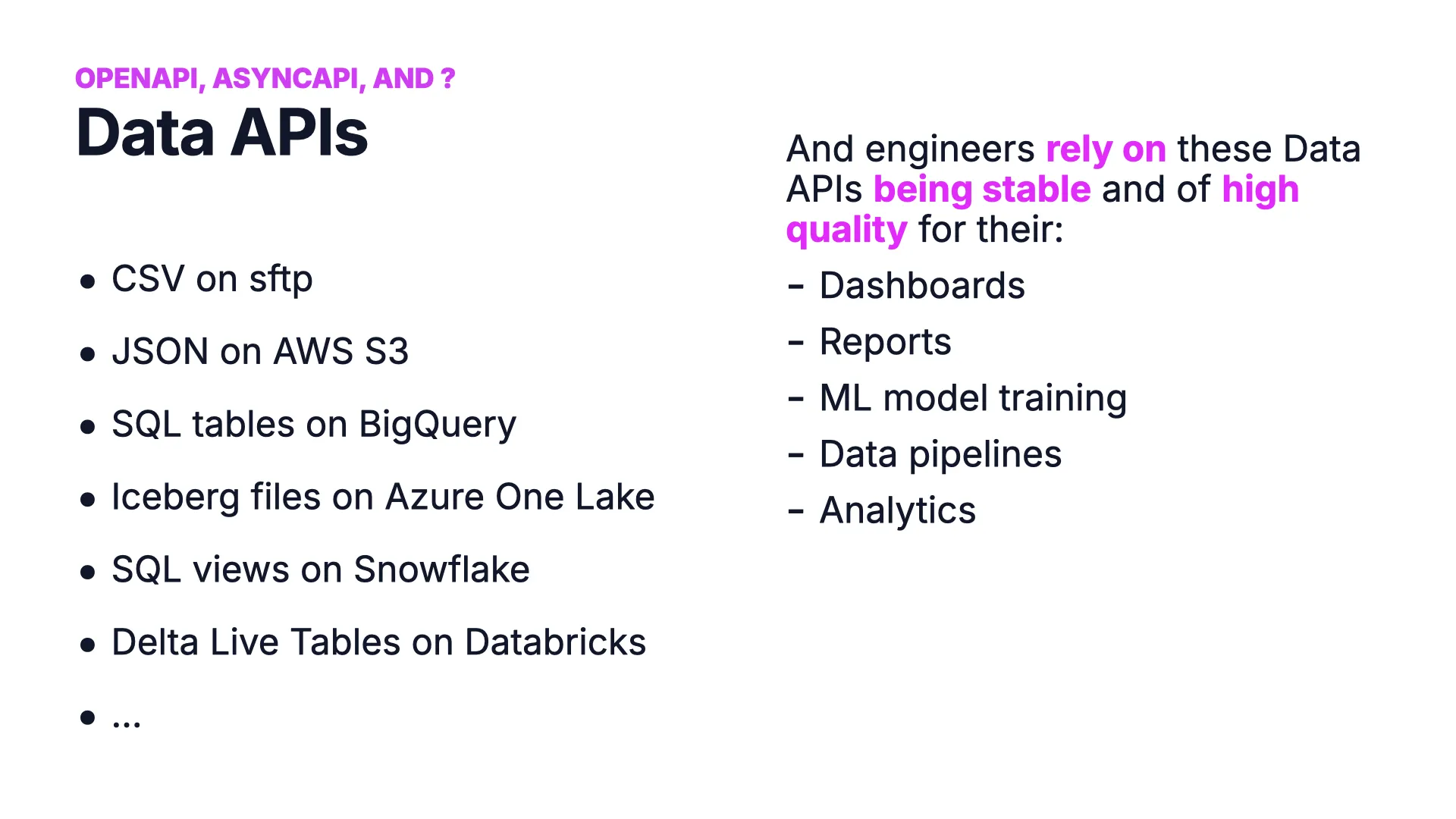

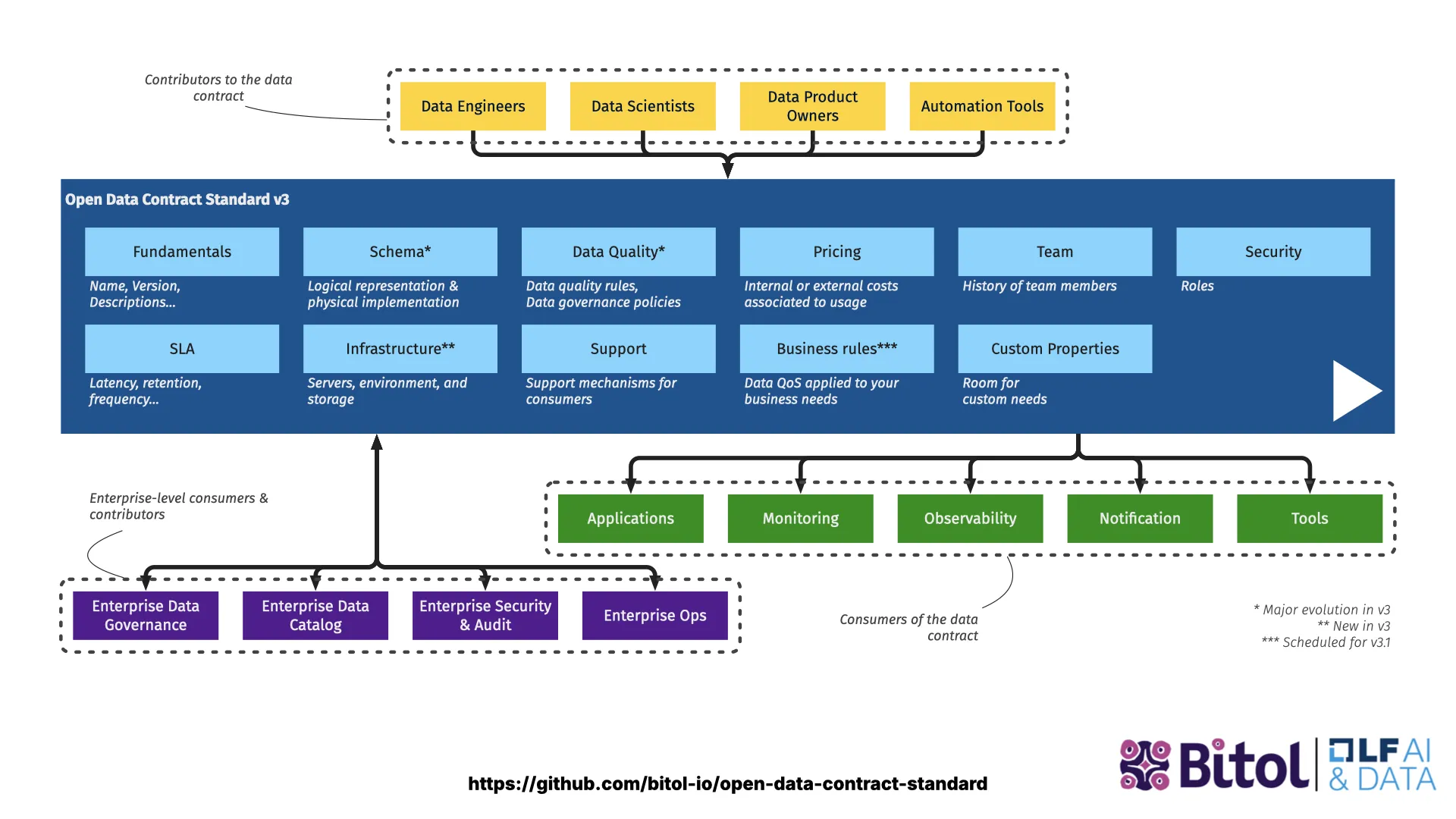

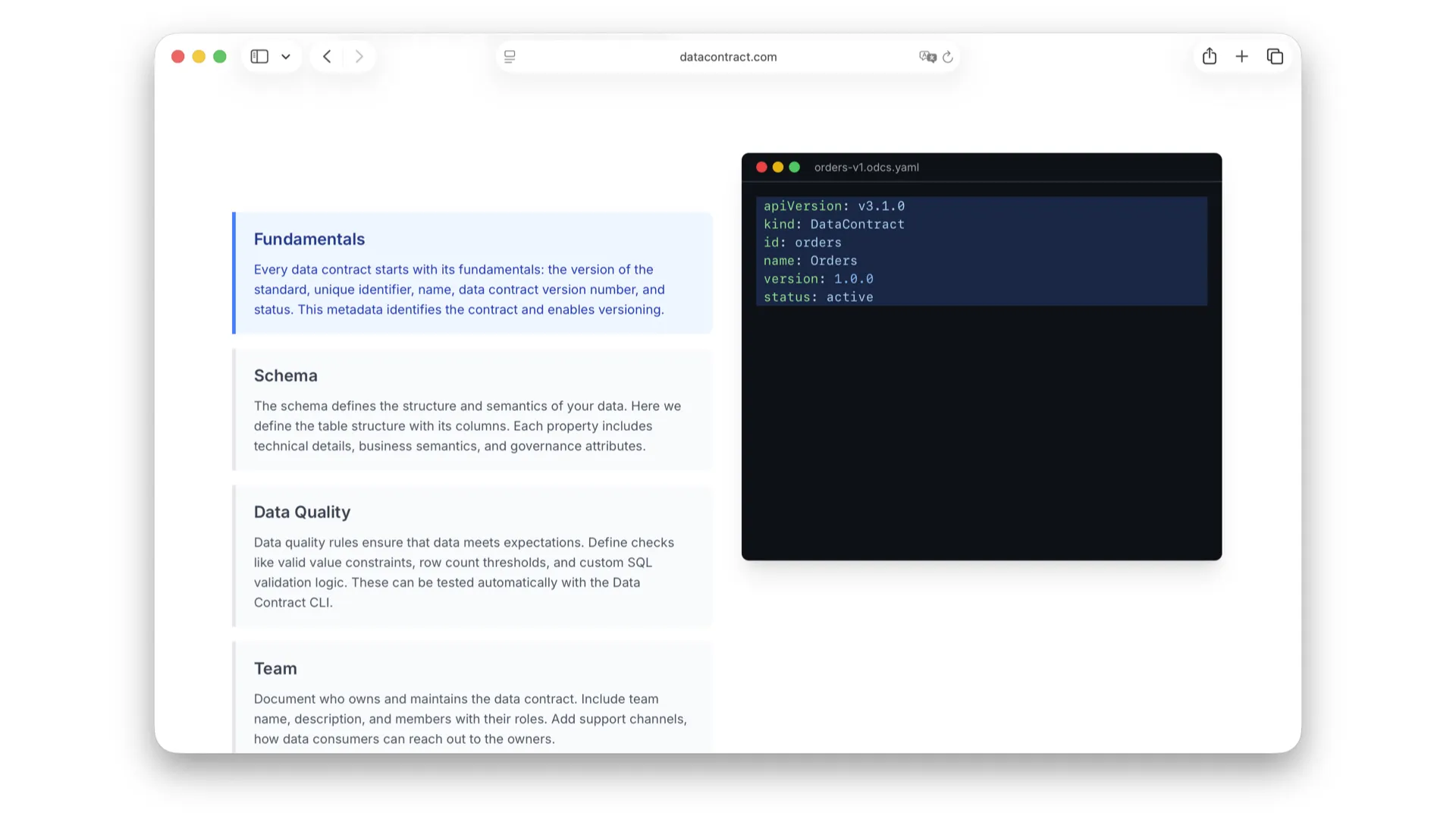

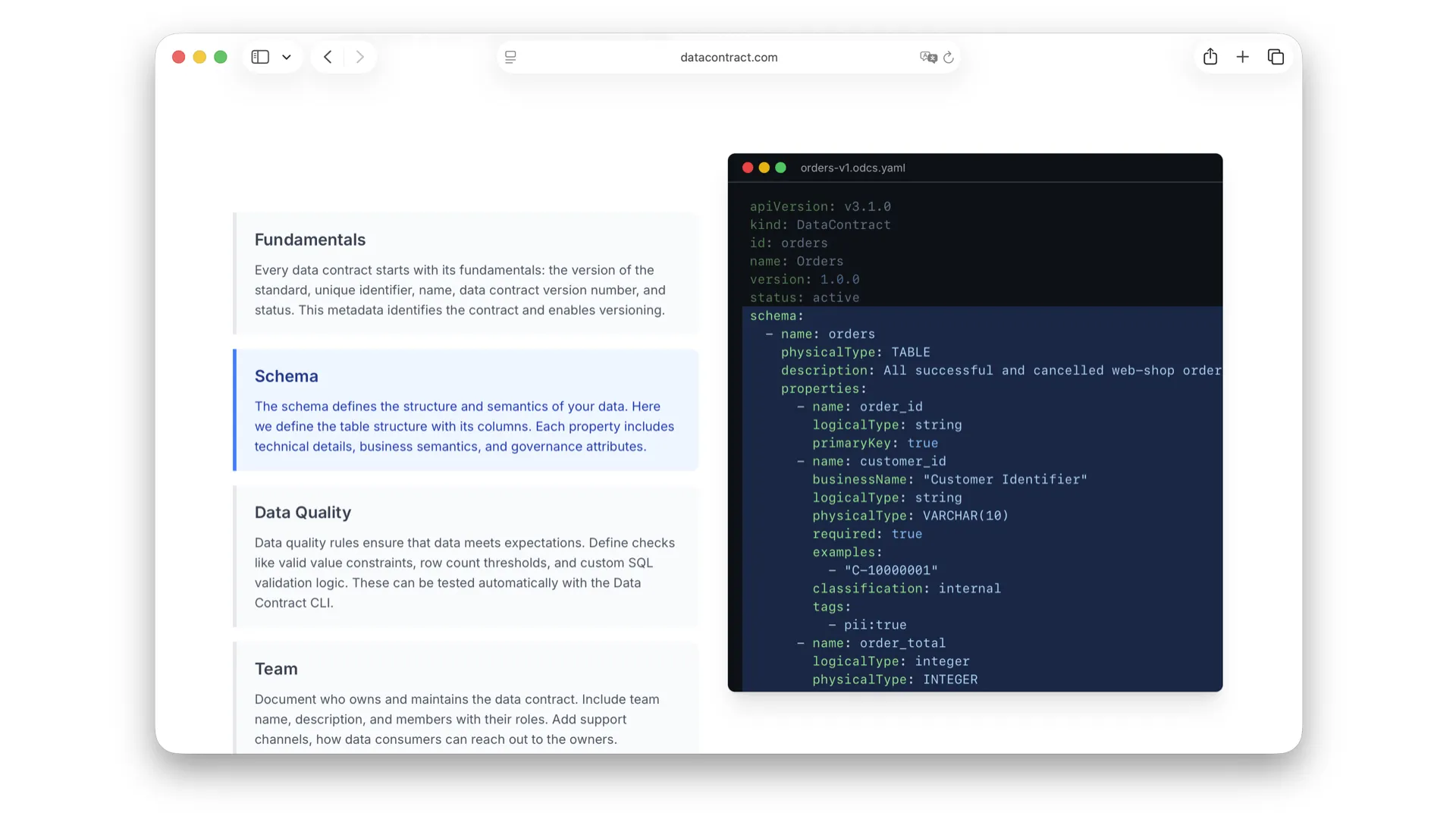

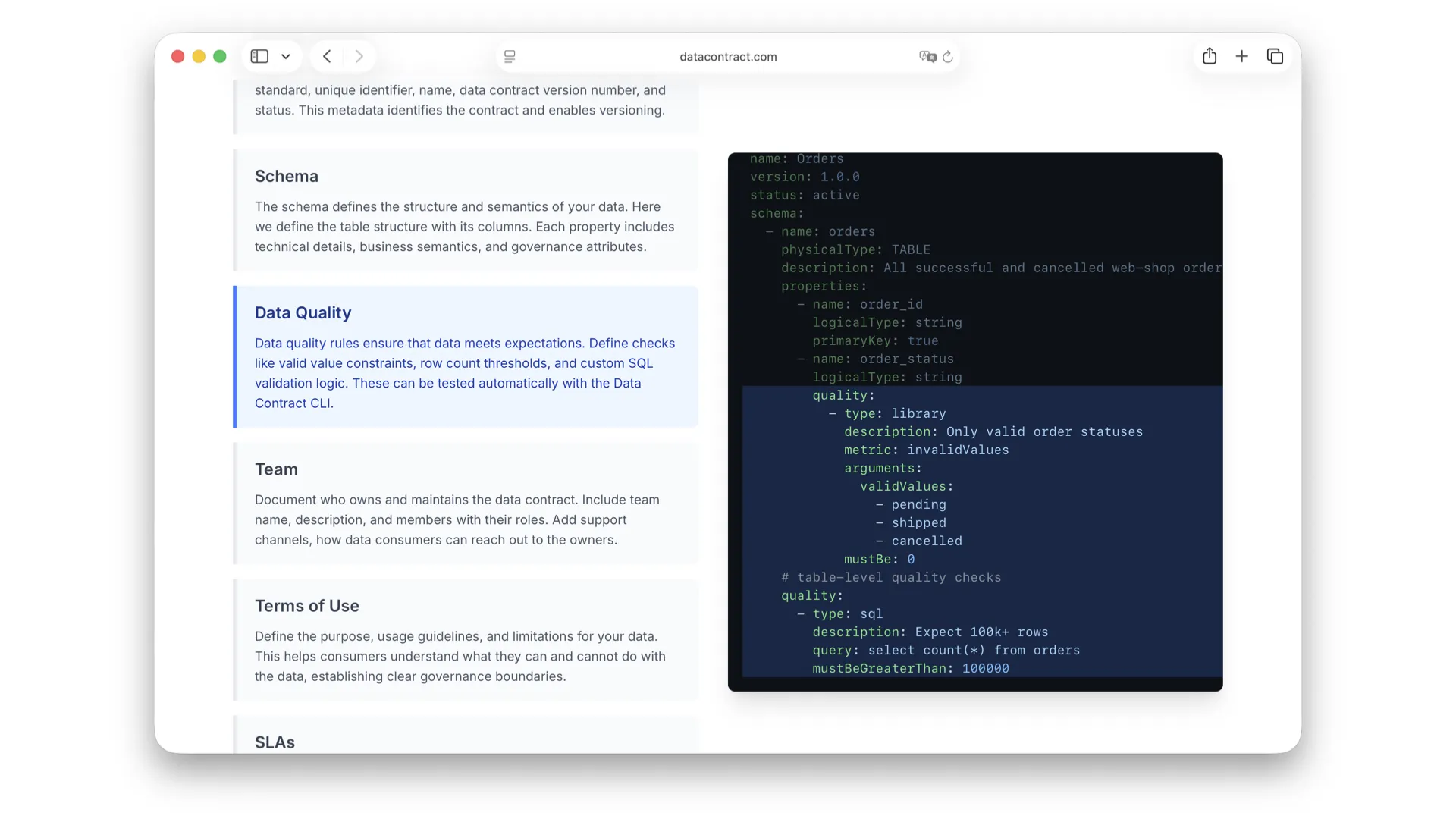

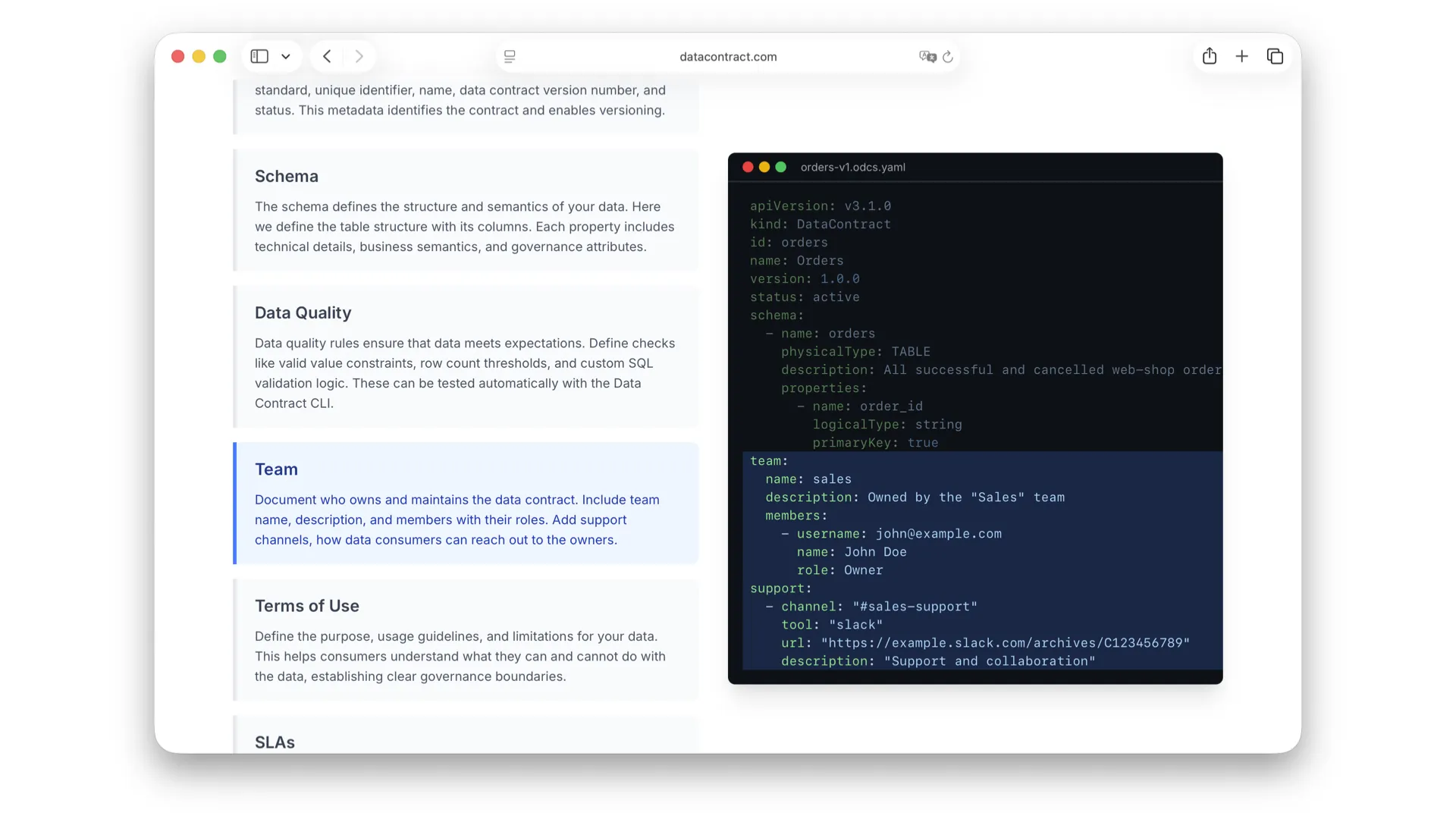

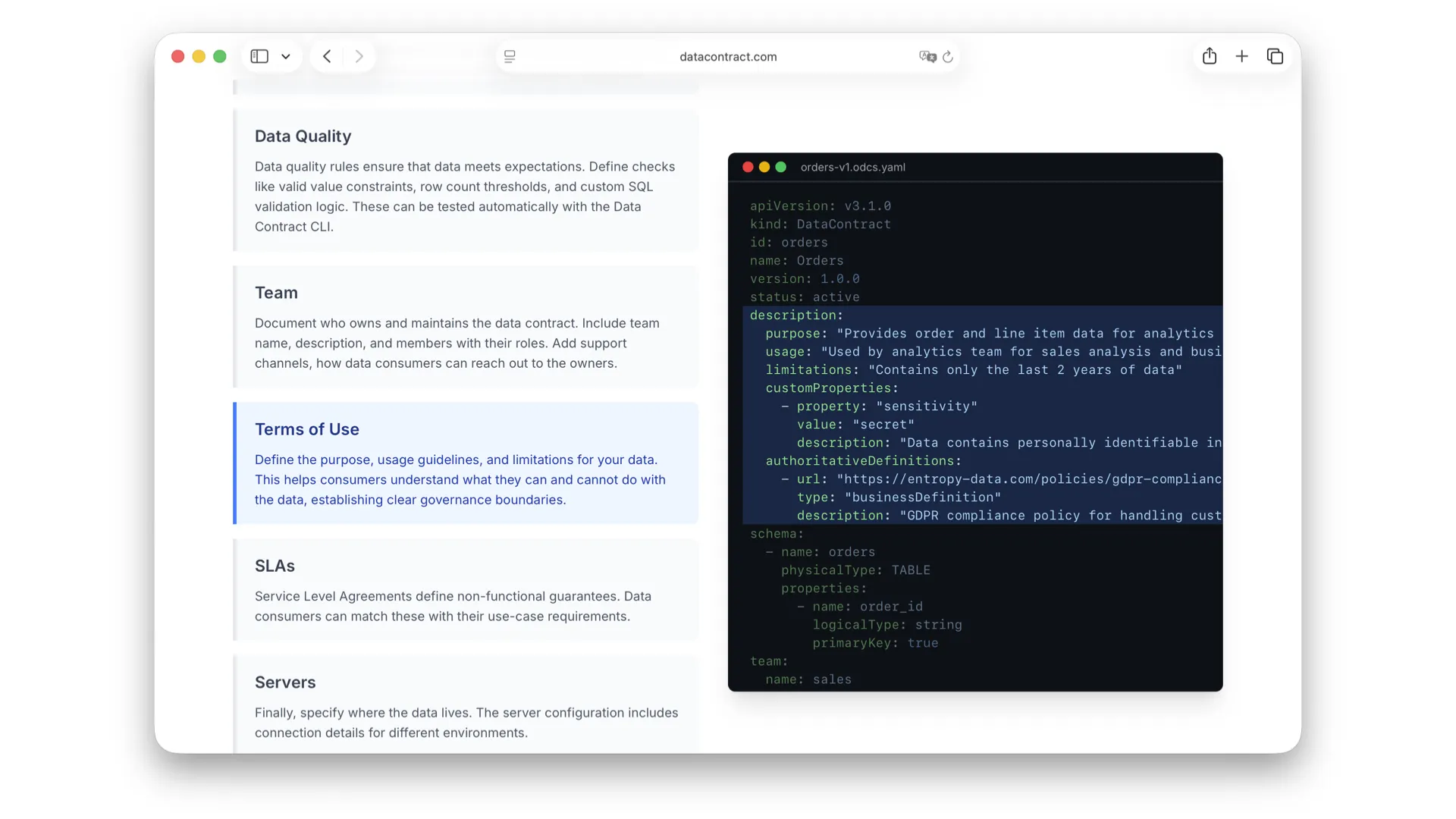

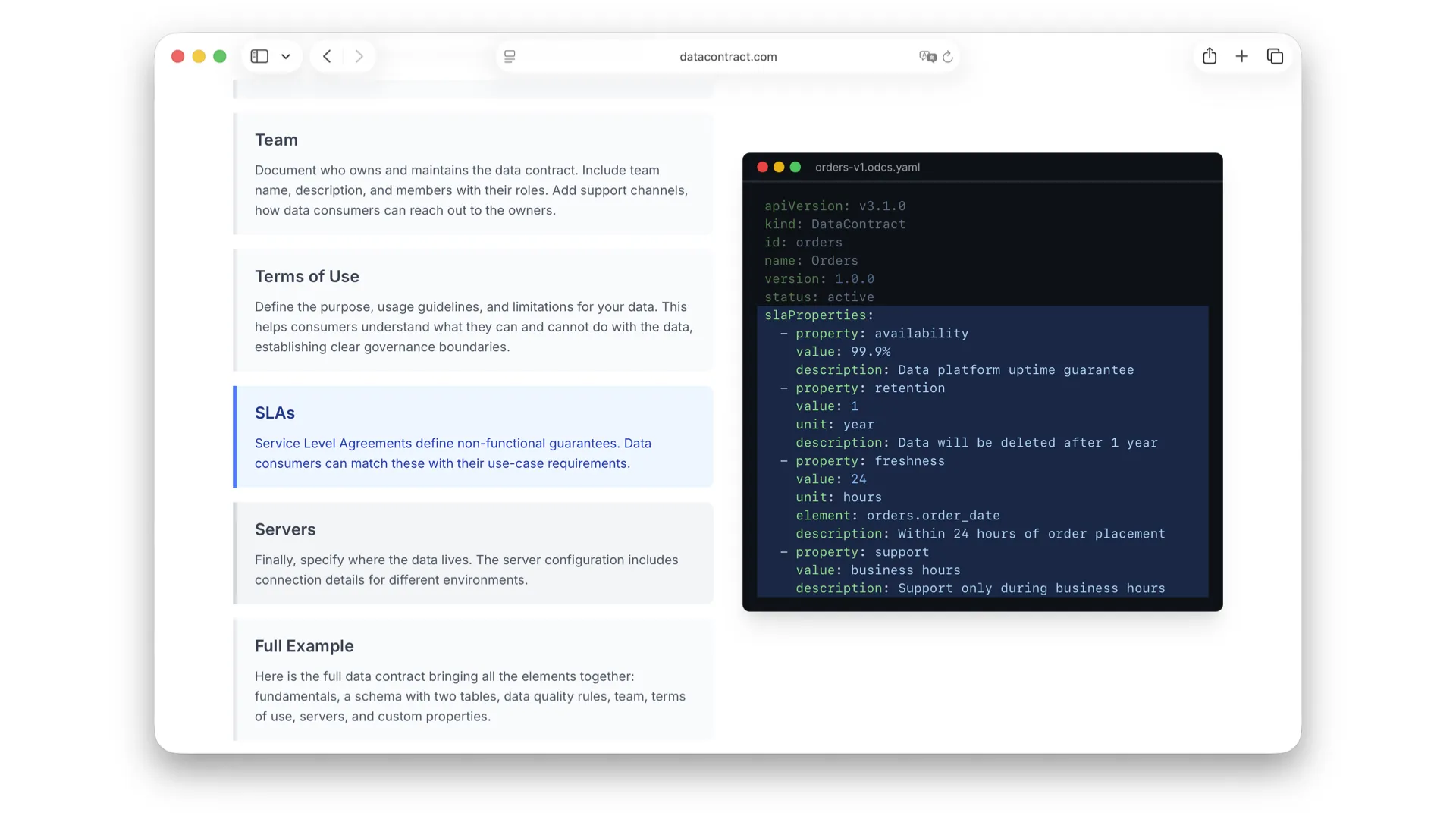

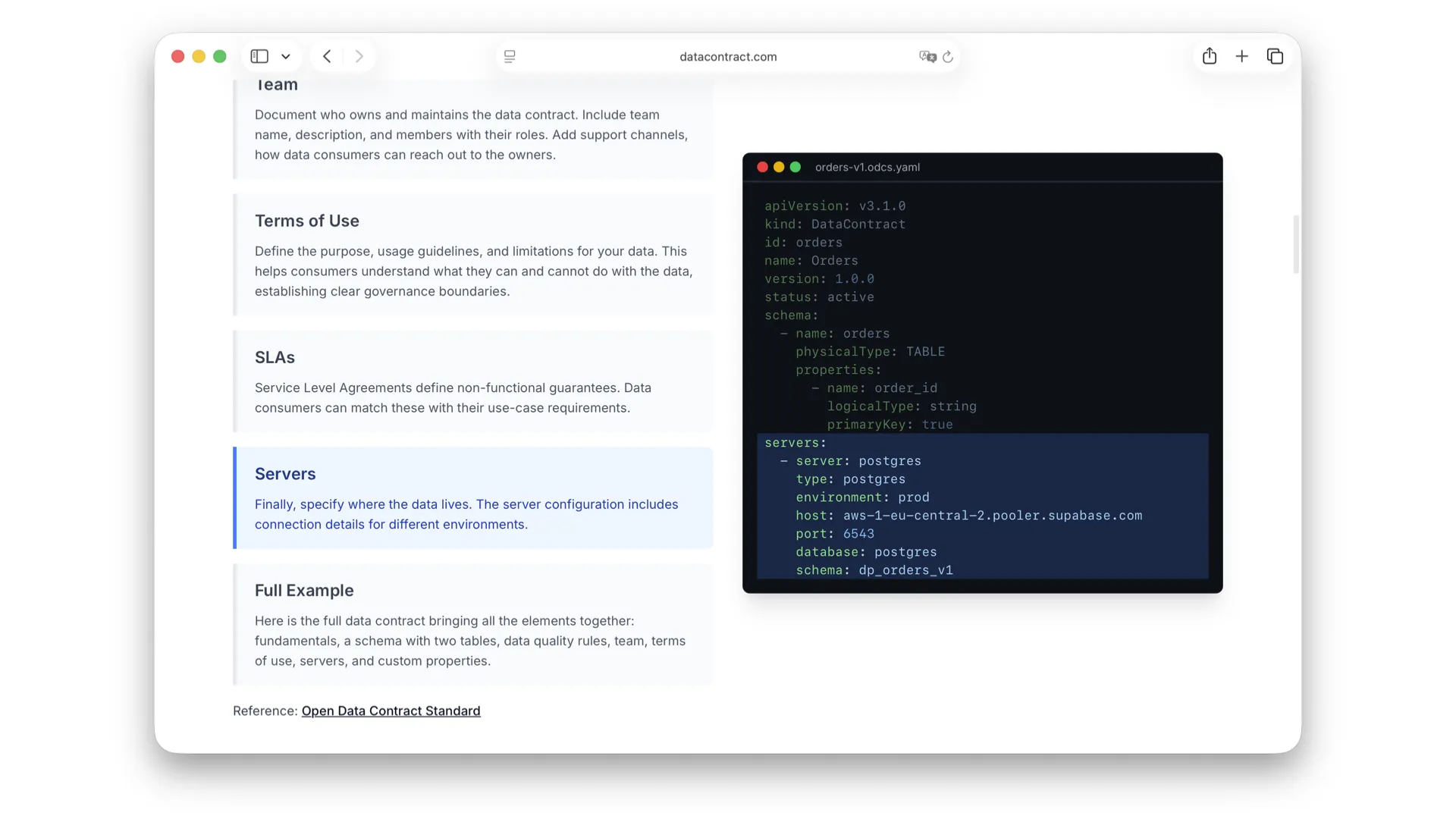

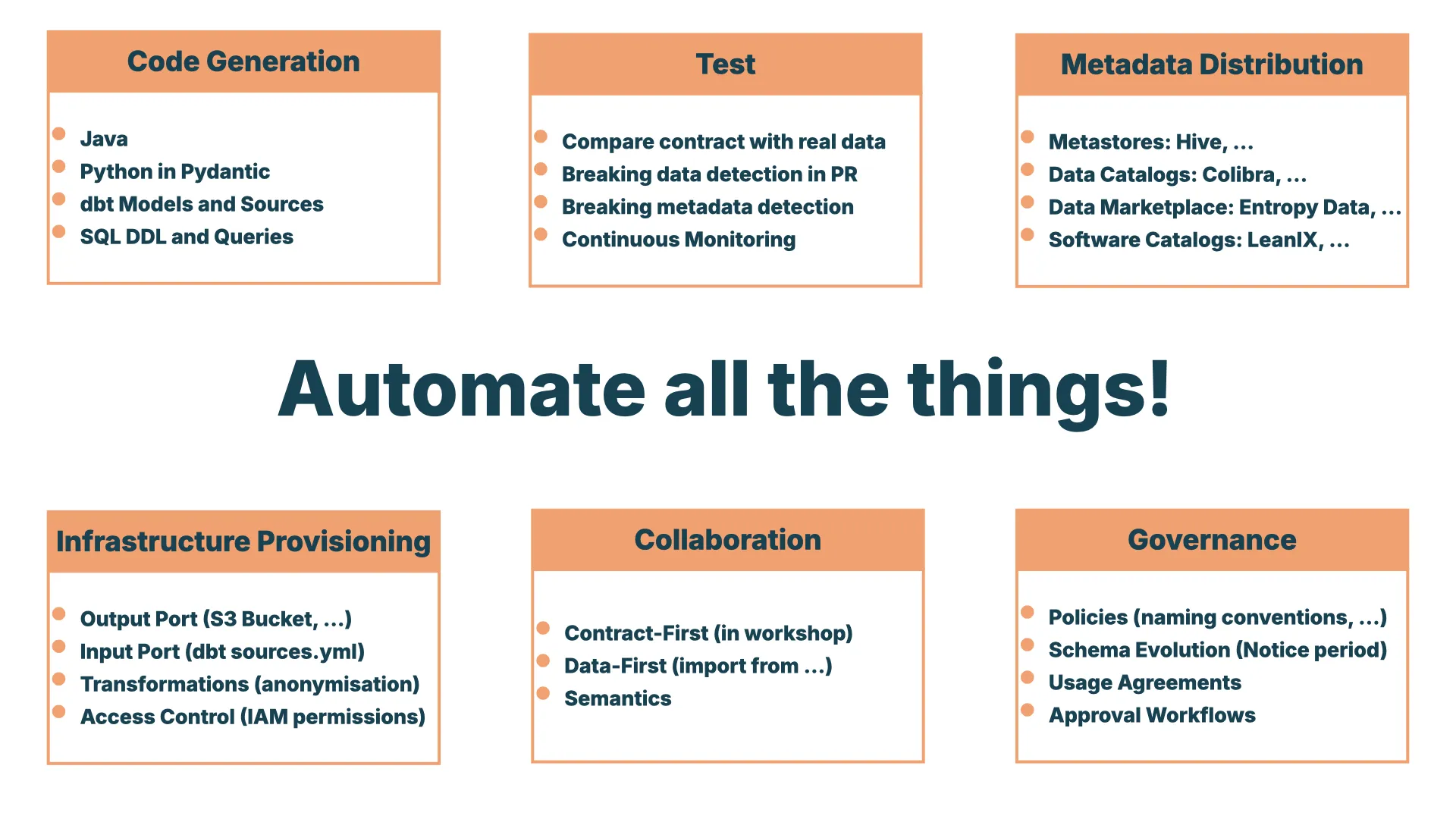

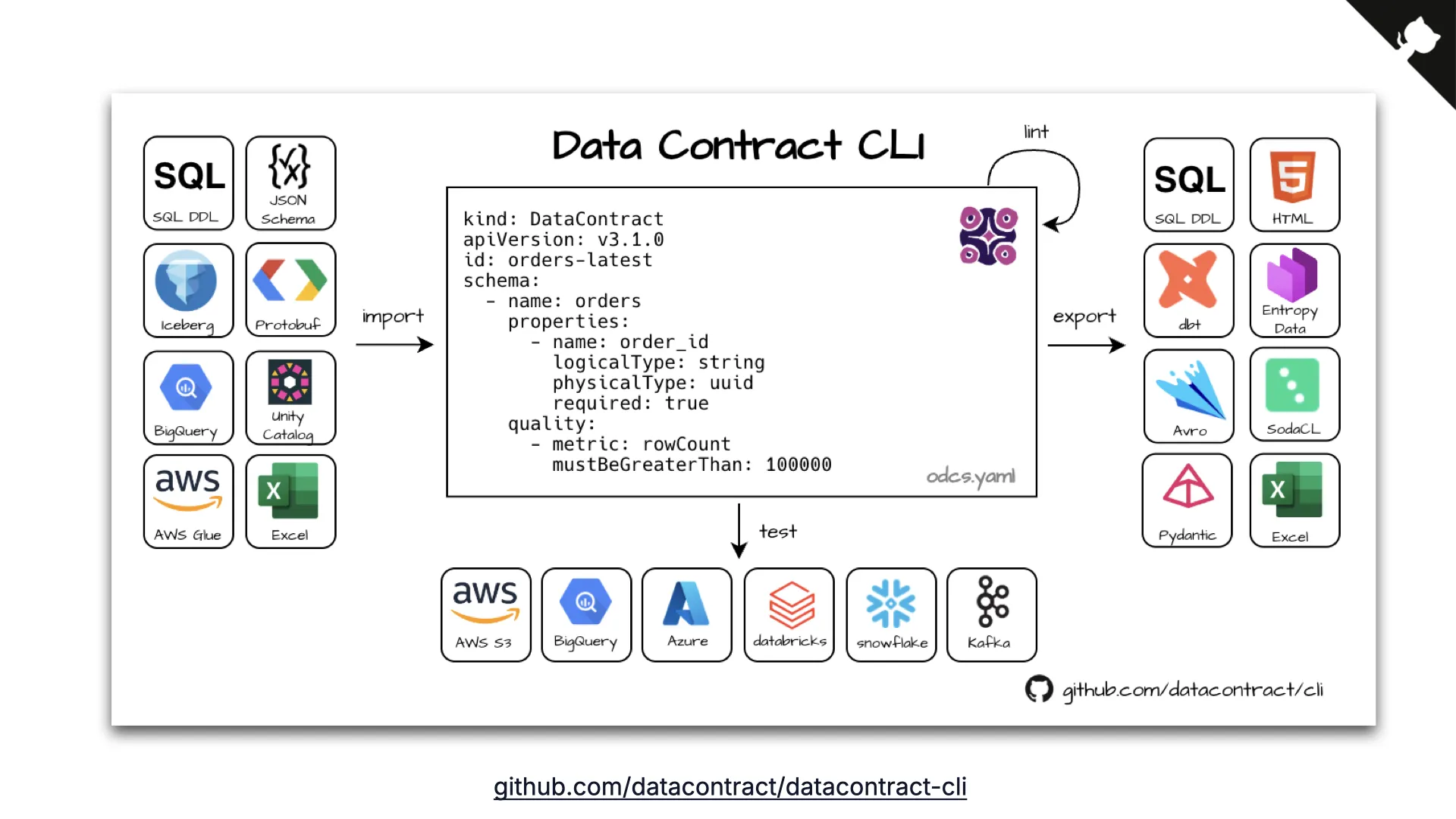

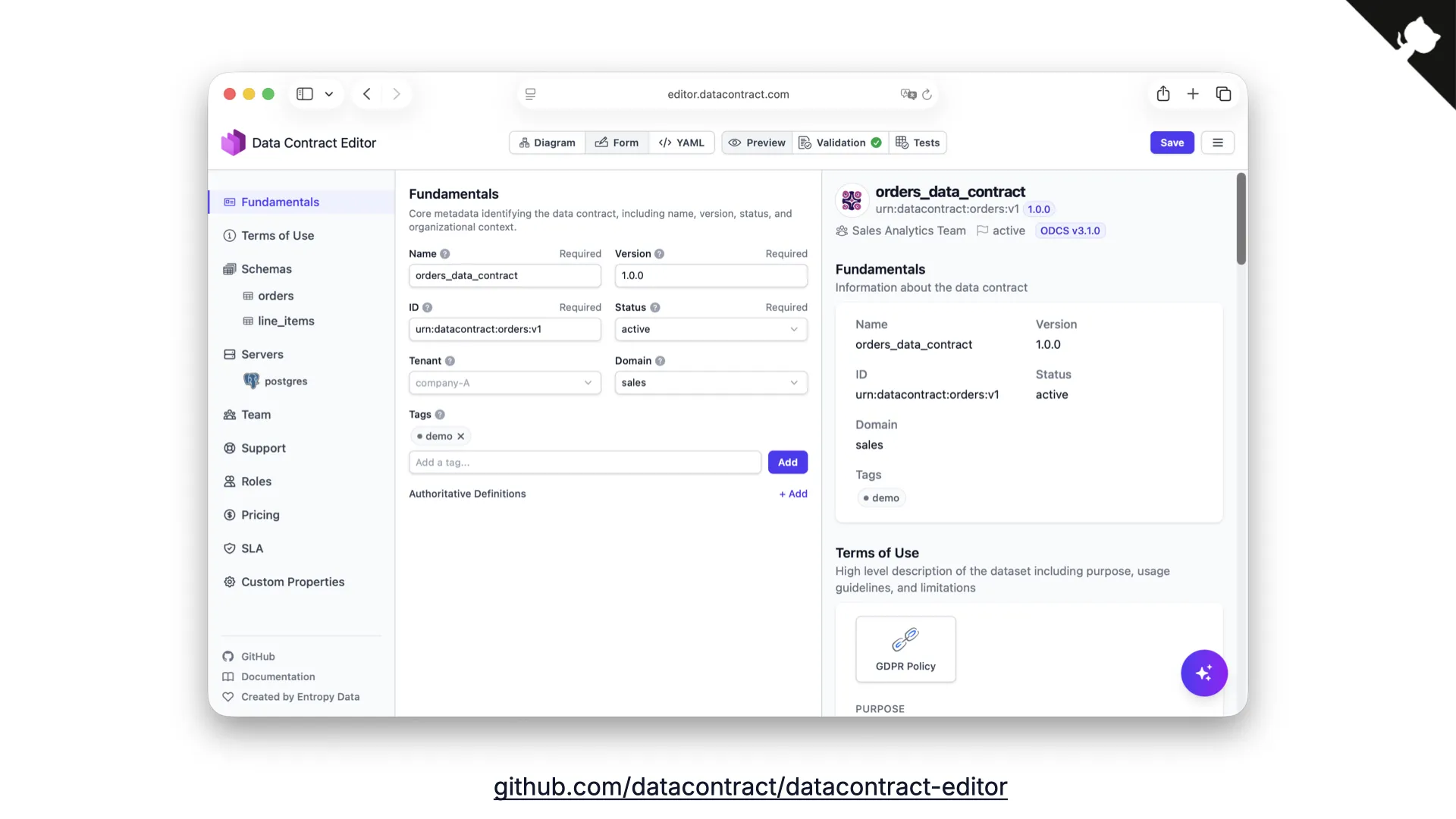

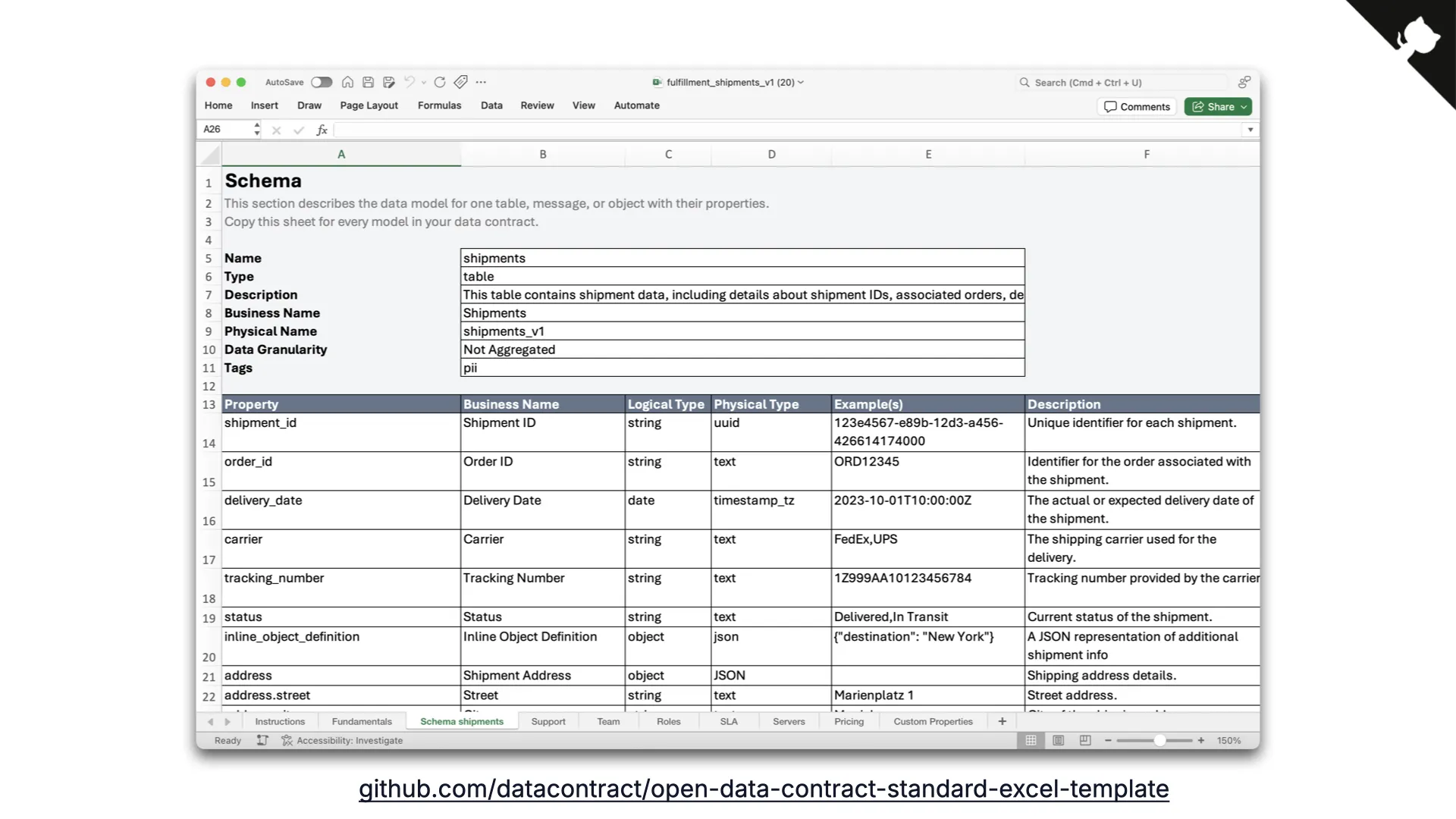

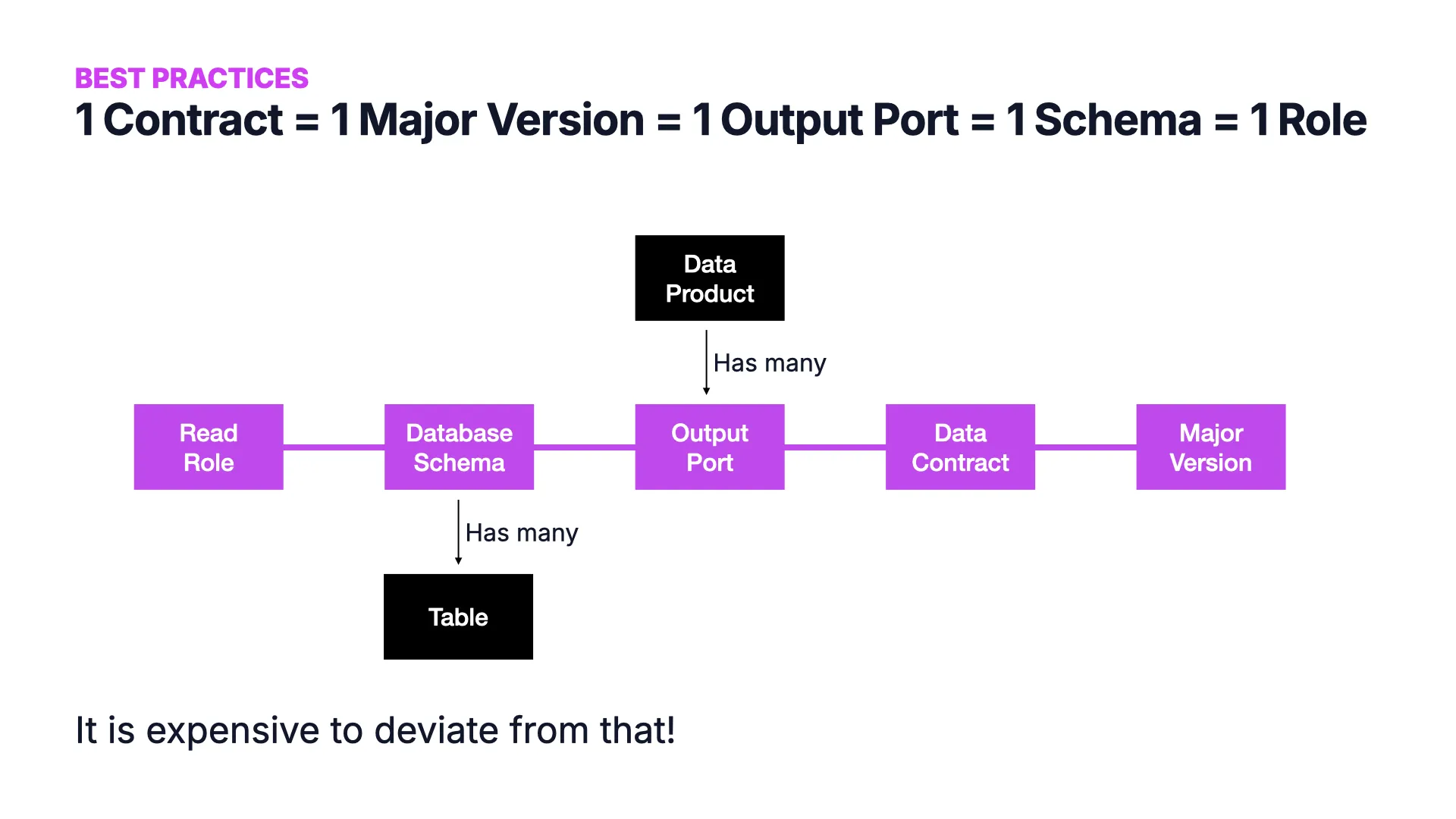

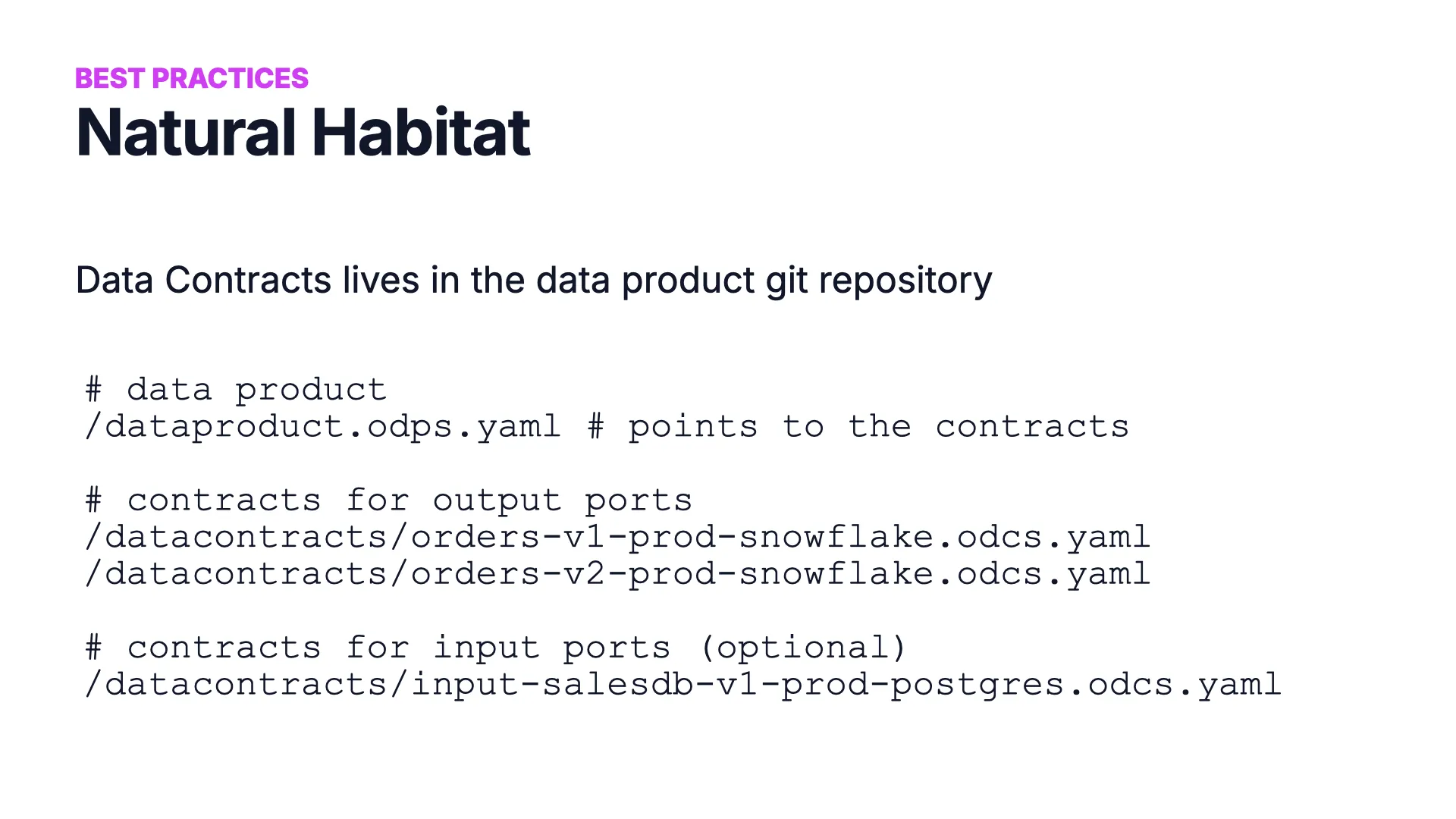

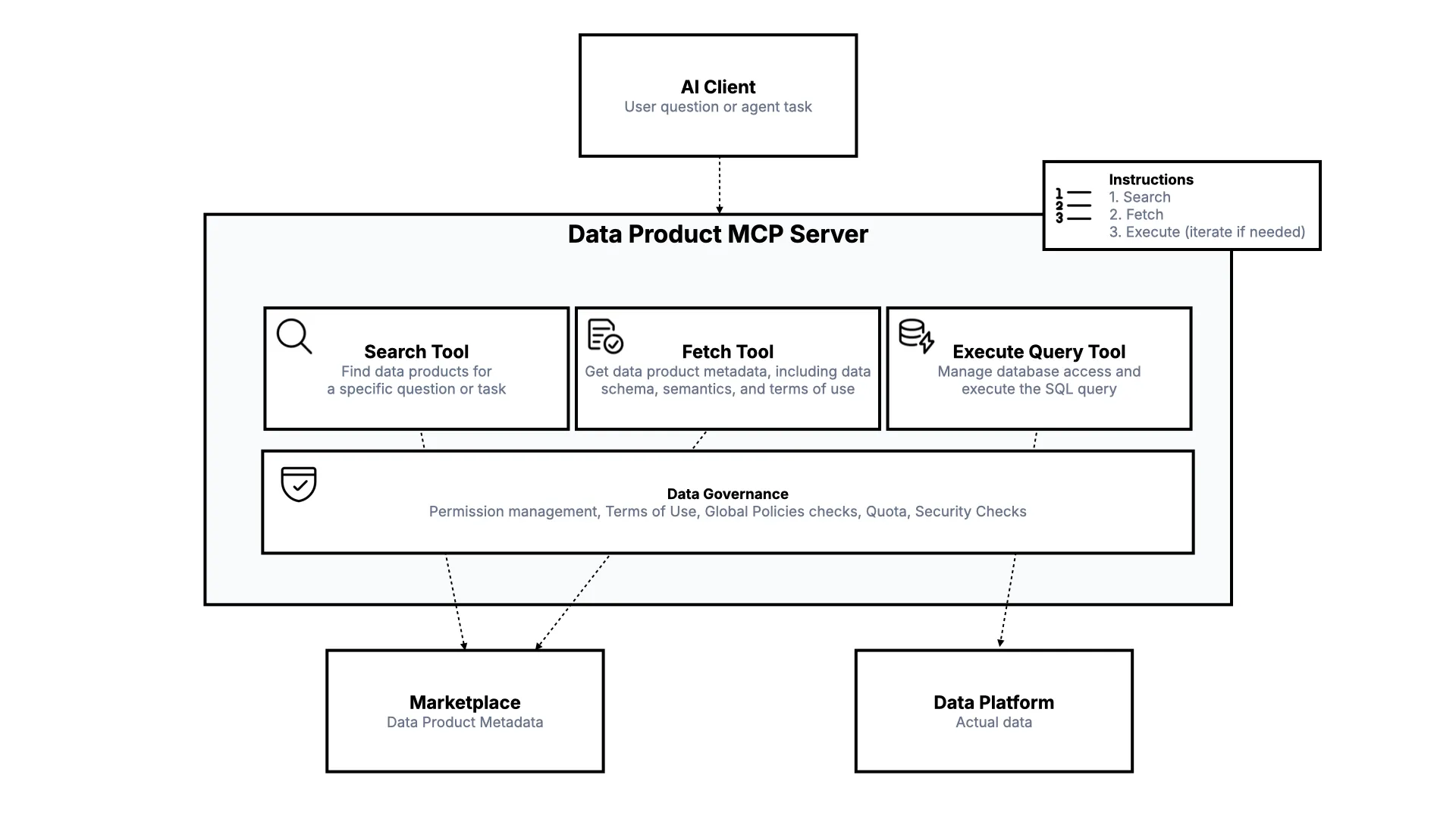

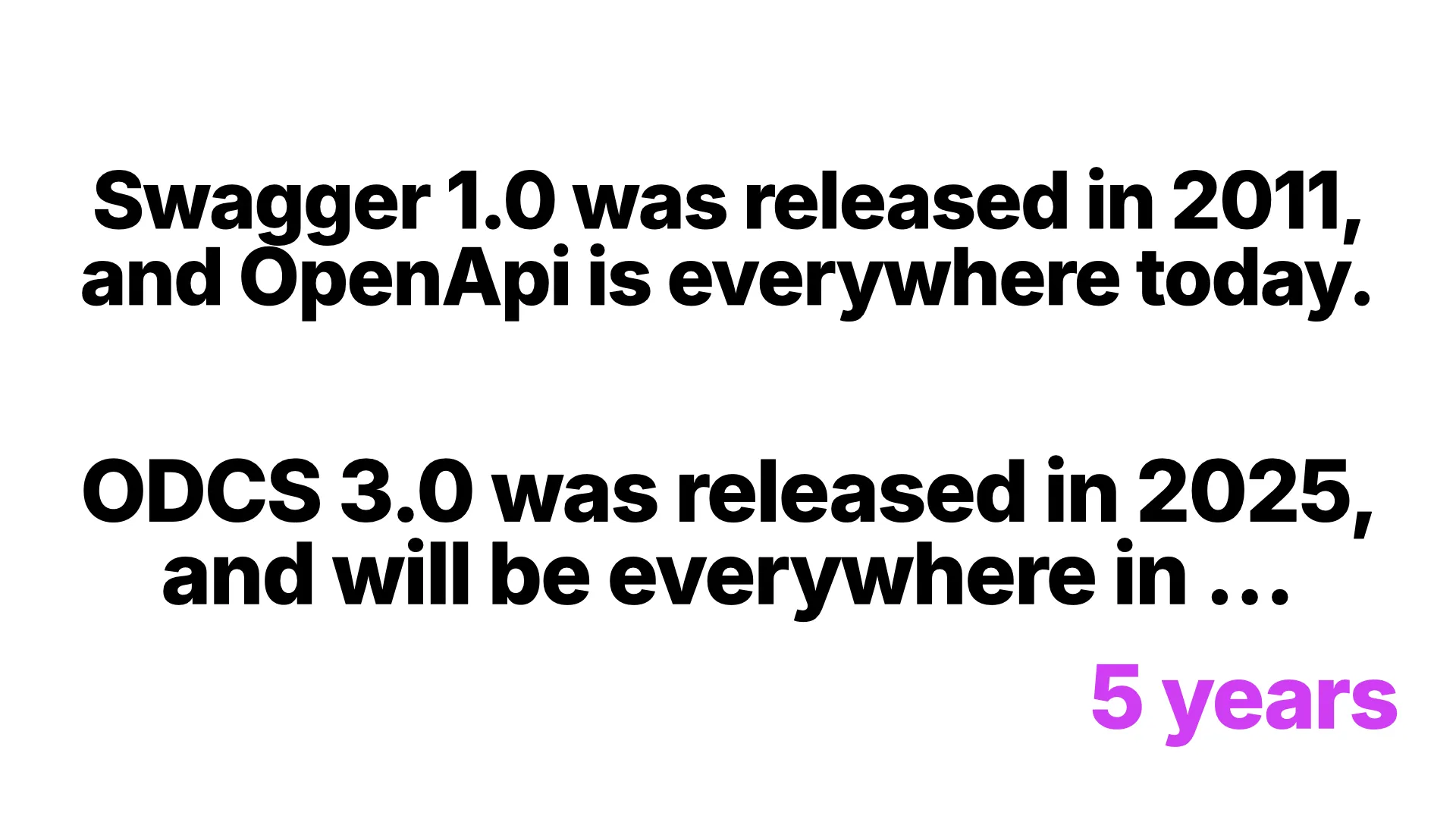

In this talk at the INFOMOTION Data & AI Meetup Cologne, I cover everything you need to know about data contracts: what they are, why they matter, the Open Data Contract Standard (ODCS), open source tooling, best practices for versioning and lifecycle management, and why agentic AI will make data contracts as ubiquitous as OpenAPI.

Thanks to Prof. Dr. Ana Moya, Peter Baumann, and INFOMOTION for organizing the meetup and inviting me to speak, and to Jochen Christ for helping shape the talk.

Q&A

Selected questions from the audience after the talk.

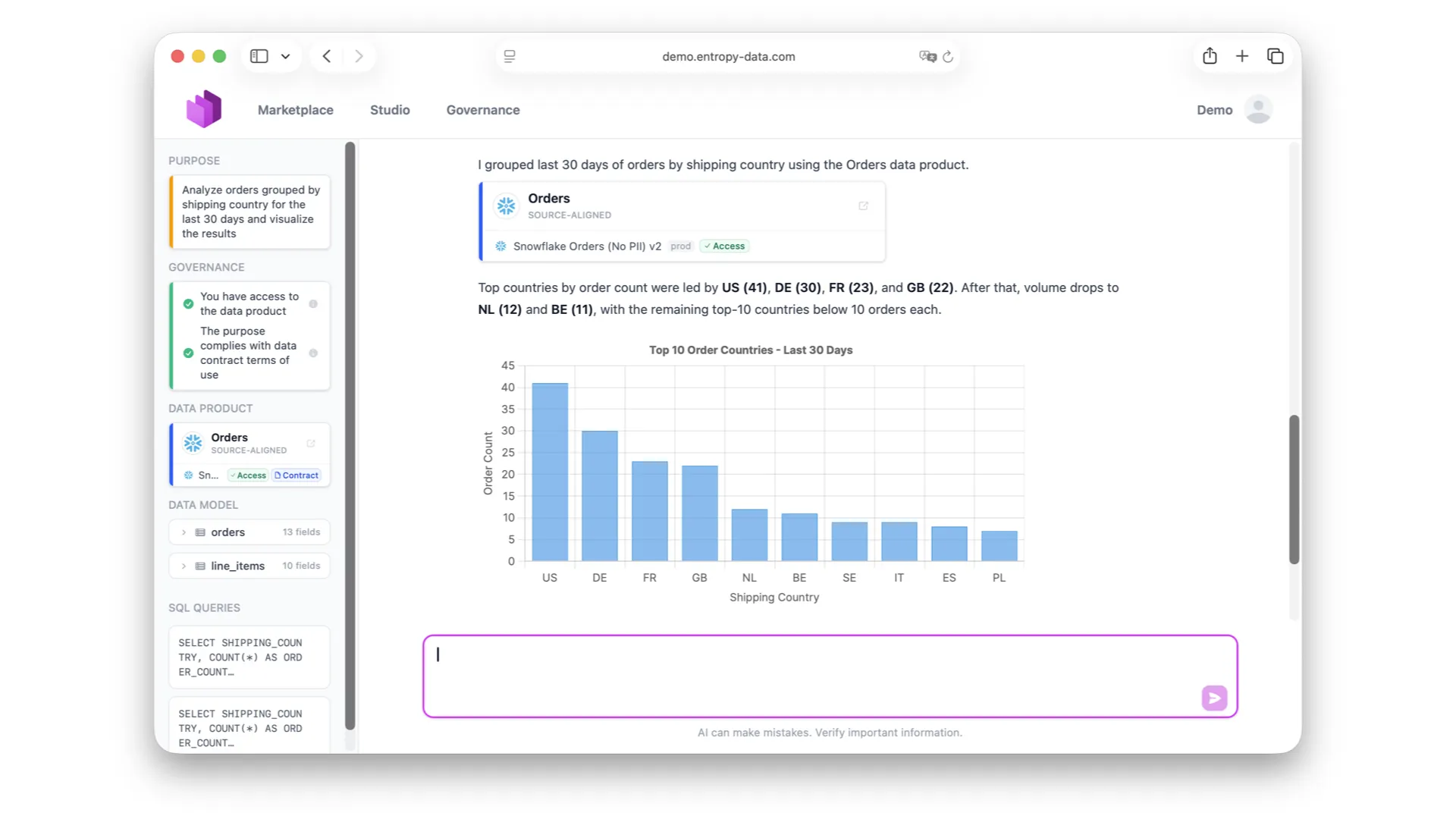

Q: How do we get the business side on board? This all still feels very technical. Even your agent demo returned customer IDs, not names like "Zalando" or "H&M."

Two answers. First, the demo looked cryptic because the agent only had access to the IDs; it did not have permission to resolve them to plain-text names. With the right permissions, it would do that. Second, data contracts are specifically designed to carry business language. We have business names for all columns and tables. You can define a term in a data dictionary or glossary once (say, "this is what an order number means"), and all contracts reference that definition. You can now also attach example questions, answers, synonyms, and supplementary knowledge to a contract so the AI can give better answers. That is all optimized for business users.
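To make the "define once, reference everywhere" idea concrete, here is a minimal Python sketch. It is not the ODCS format or any tool's API: the classes `GlossaryTerm`, `Column`, and `Contract`, the `describe` helper, and the sample tables are all hypothetical and only illustrate how two contracts with different physical column names can point at one shared business definition.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class GlossaryTerm:
    """A shared business definition (hypothetical structure, not ODCS)."""
    key: str
    business_name: str
    definition: str
    synonyms: tuple = ()


@dataclass
class Column:
    name: str          # physical column name in the table
    glossary_key: str  # reference to the shared glossary entry


@dataclass
class Contract:
    table: str
    business_name: str
    columns: list = field(default_factory=list)


# The definition of "order number" lives in exactly one place.
GLOSSARY = {
    "order_number": GlossaryTerm(
        key="order_number",
        business_name="Order Number",
        definition="Unique identifier assigned to a customer order.",
        synonyms=("order id", "order no"),
    ),
}


def describe(contract, glossary):
    """Resolve each column to its business name and definition."""
    lines = []
    for col in contract.columns:
        term = glossary[col.glossary_key]
        lines.append(
            f"{contract.table}.{col.name}: {term.business_name}: {term.definition}"
        )
    return lines


# Two contracts with different physical names reference the same term.
orders = Contract("orders", "Orders", [Column("order_no", "order_number")])
shipments = Contract("shipments", "Shipments", [Column("ord_nr", "order_number")])

for contract in (orders, shipments):
    for line in describe(contract, GLOSSARY):
        print(line)
```

Changing the definition in `GLOSSARY` updates what every referencing contract reports, which is the property a business glossary is meant to provide.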

Q: I come from the production/manufacturing side. Can I model relations between data points -- like flow through a pipe, where it goes, and how much should arrive?

Not directly, because contracts operate at the schema level, not the instance level. We look at which columns exist and make assertions about columns, but not about individual rows. If each data point is essentially its own table, you are getting into Digital Twin and Asset Administration Shell territory, which is a different world. You could theoretically create a contract for each table, but you need to be careful that the contract does not end up bigger than the data itself.
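The schema-level scope can be illustrated with a small Python sketch. It assumes a contract reduced to a mapping of column names to types; `check_schema`, the column names, and the type labels are hypothetical and do not come from any real data contract tool. The check can say whether the expected columns exist with the expected types, but it has no way to express that the flow leaving one pipe should arrive at another.

```python
def check_schema(contract_columns, actual_columns):
    """Compare expected columns and types against an actual table schema.

    Returns a list of violations; an empty list means the schema conforms.
    Only column existence and types are checked, never individual rows.
    """
    violations = []
    for name, expected_type in contract_columns.items():
        if name not in actual_columns:
            violations.append(f"missing column: {name}")
        elif actual_columns[name] != expected_type:
            violations.append(
                f"type mismatch for {name}: "
                f"expected {expected_type}, got {actual_columns[name]}"
            )
    return violations


# Hypothetical contract for a table of sensor measurements.
CONTRACT = {
    "sensor_id": "string",
    "flow_rate": "decimal",
    "measured_at": "timestamp",
}

# Hypothetical schema of the table as actually delivered.
ACTUAL = {
    "sensor_id": "string",
    "flow_rate": "integer",
}

for violation in check_schema(CONTRACT, ACTUAL):
    print(violation)
```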