Talk · JAX 2026

Data Architecture: The New Backbone of Modern Software

Dr. Gernot Starke (INNOQ Fellow) & Dr. Simon Harrer (CEO, Entropy Data) · May 7, 2026

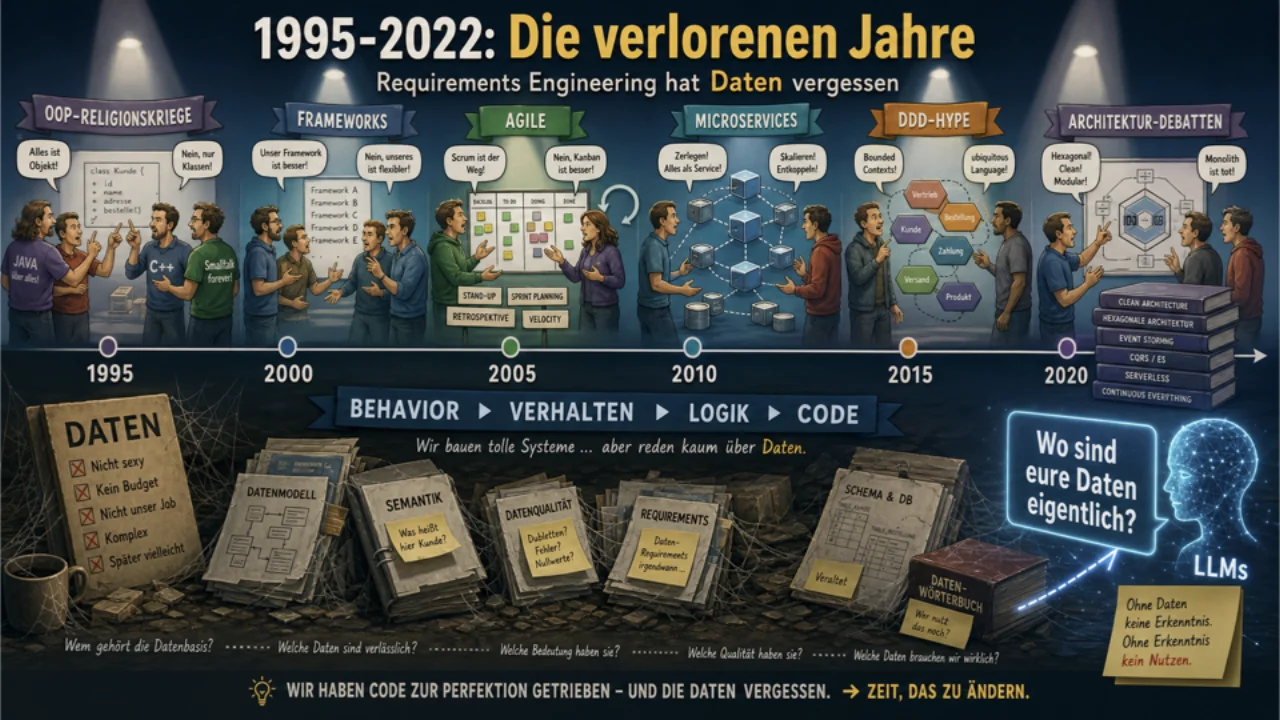

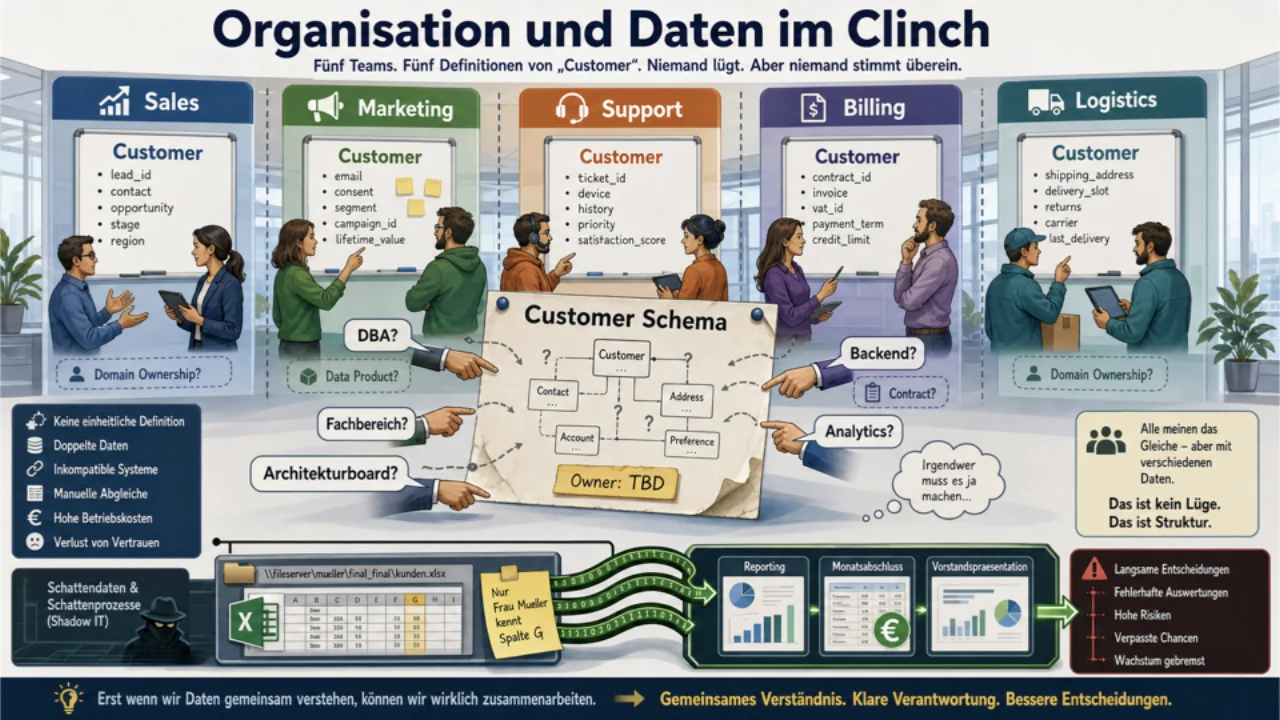

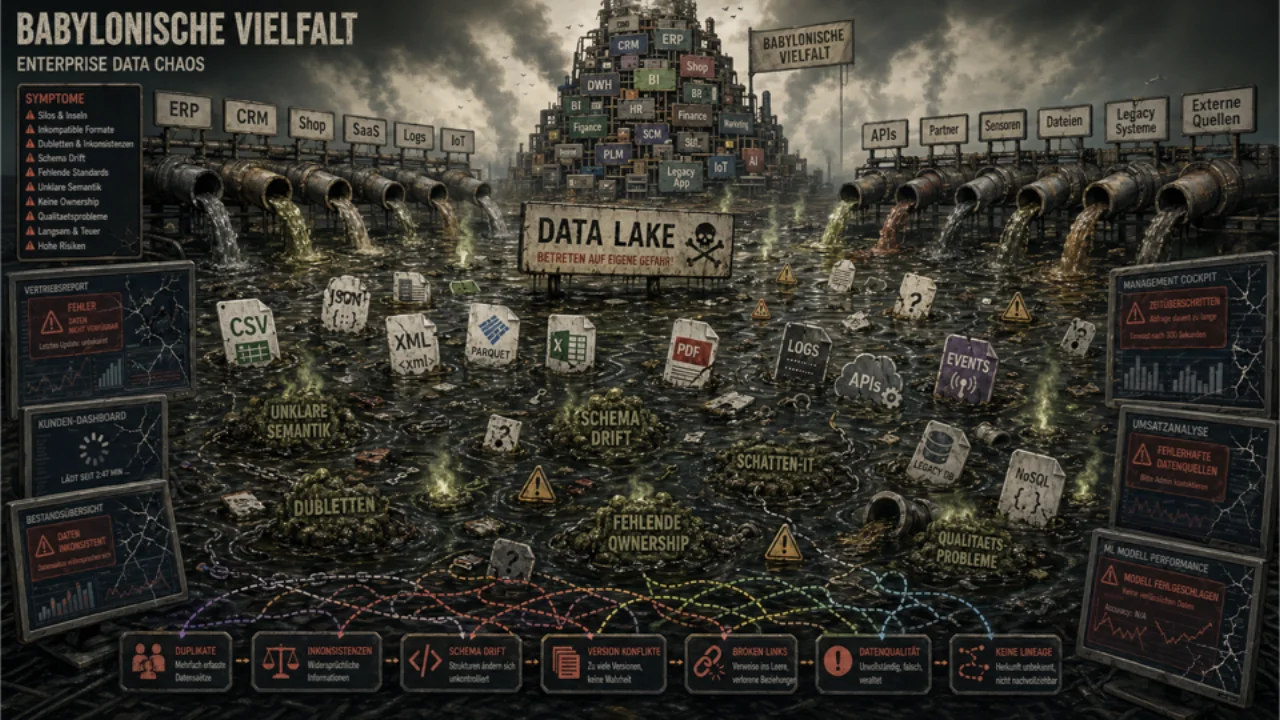

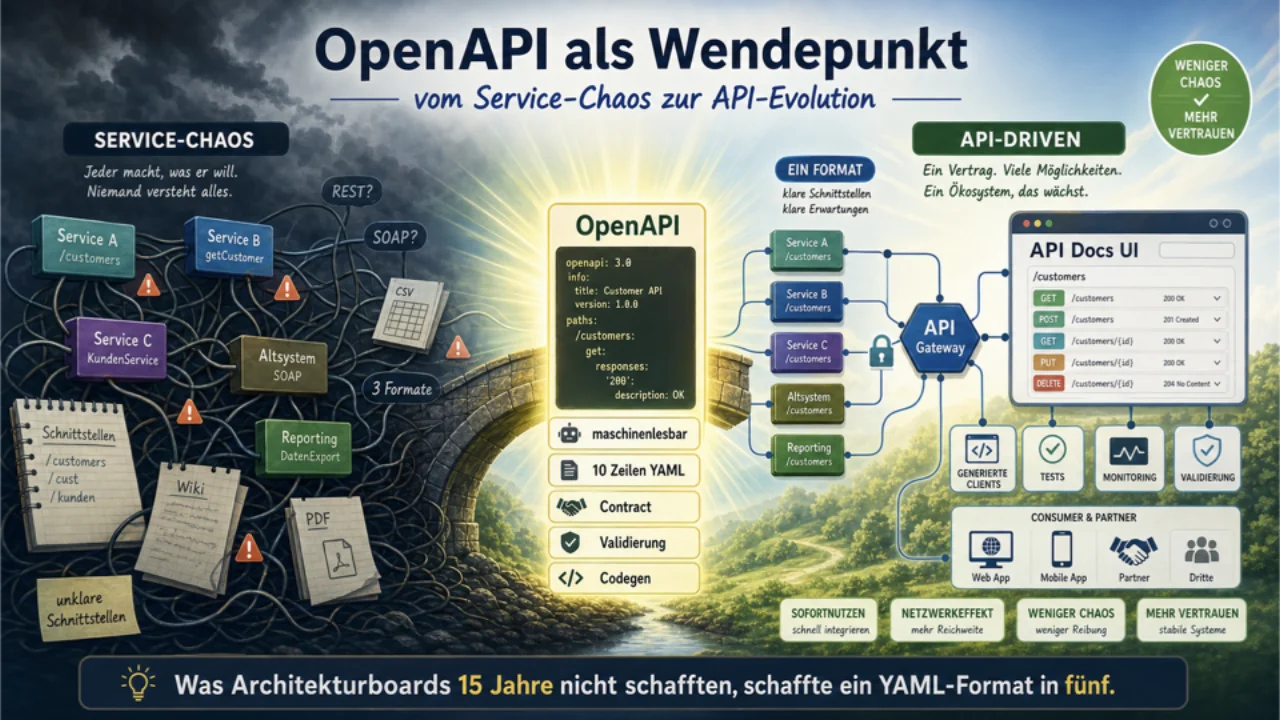

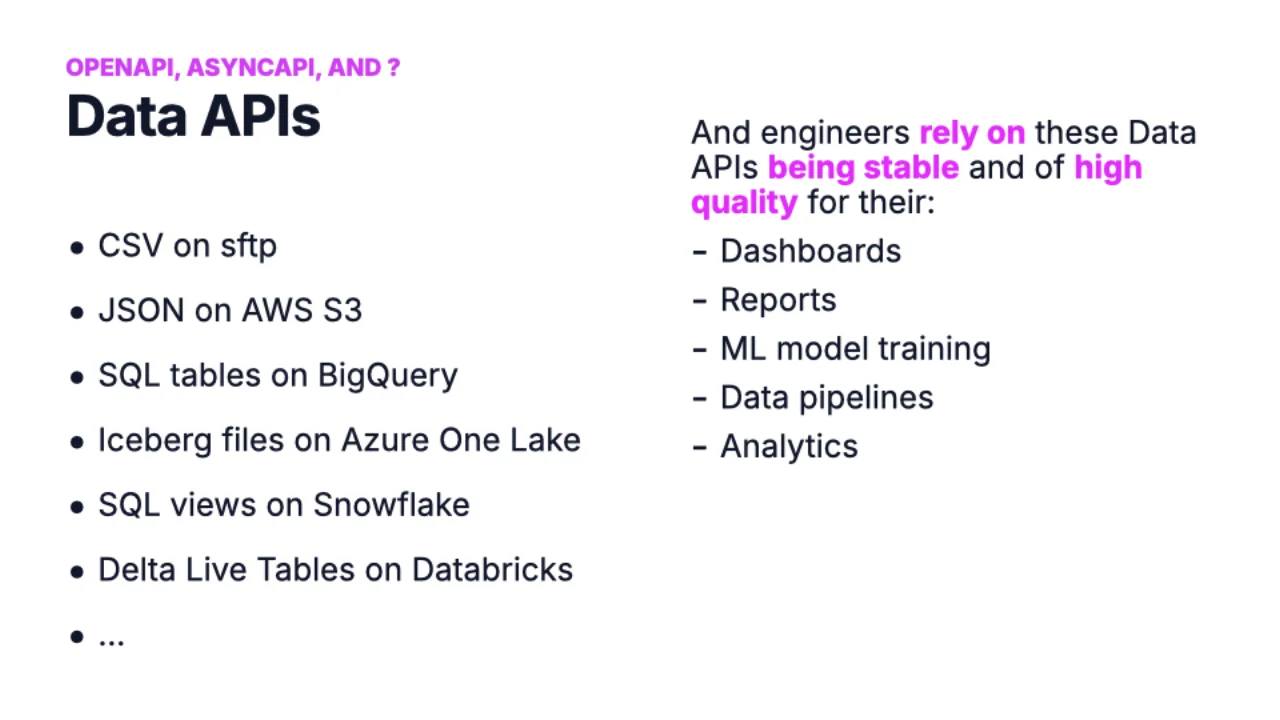

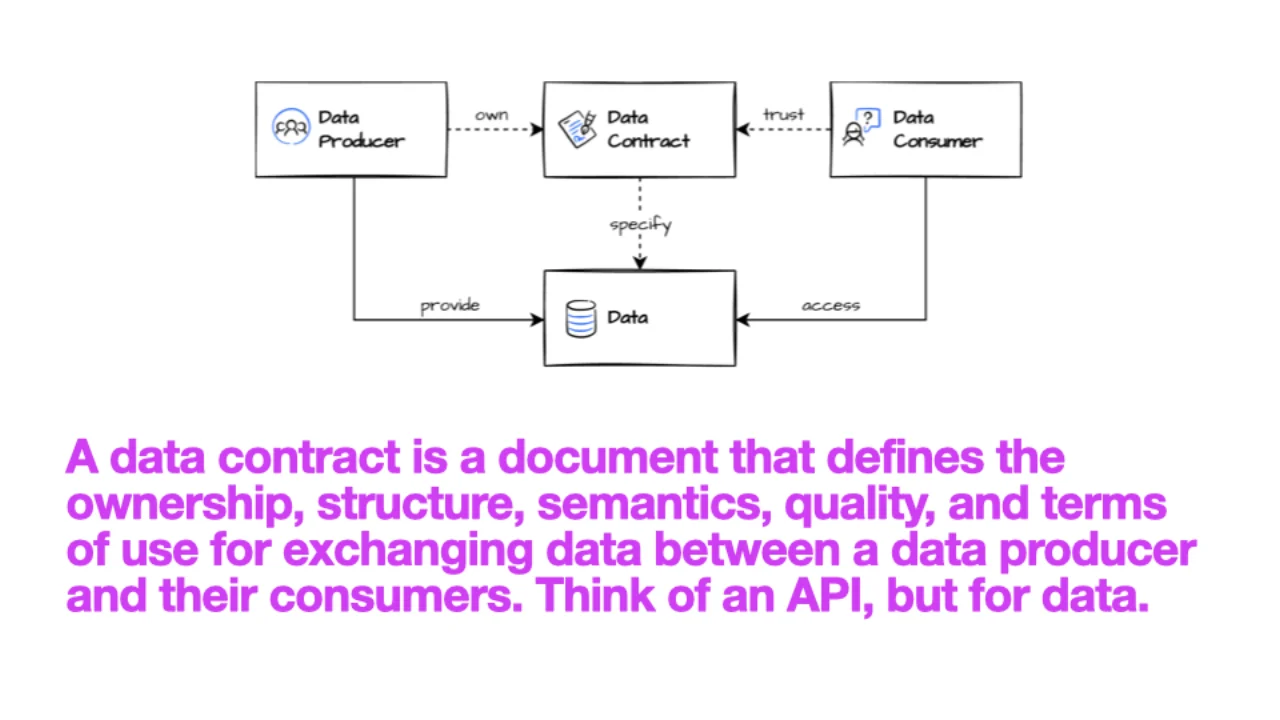

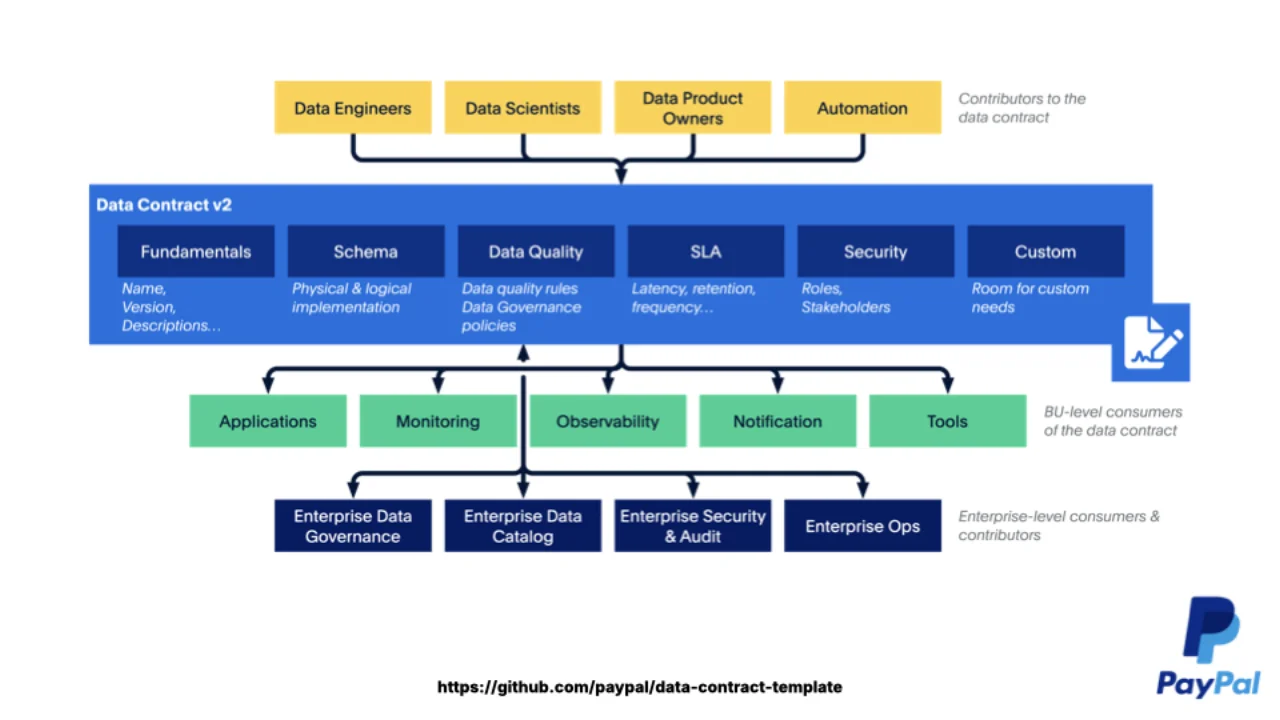

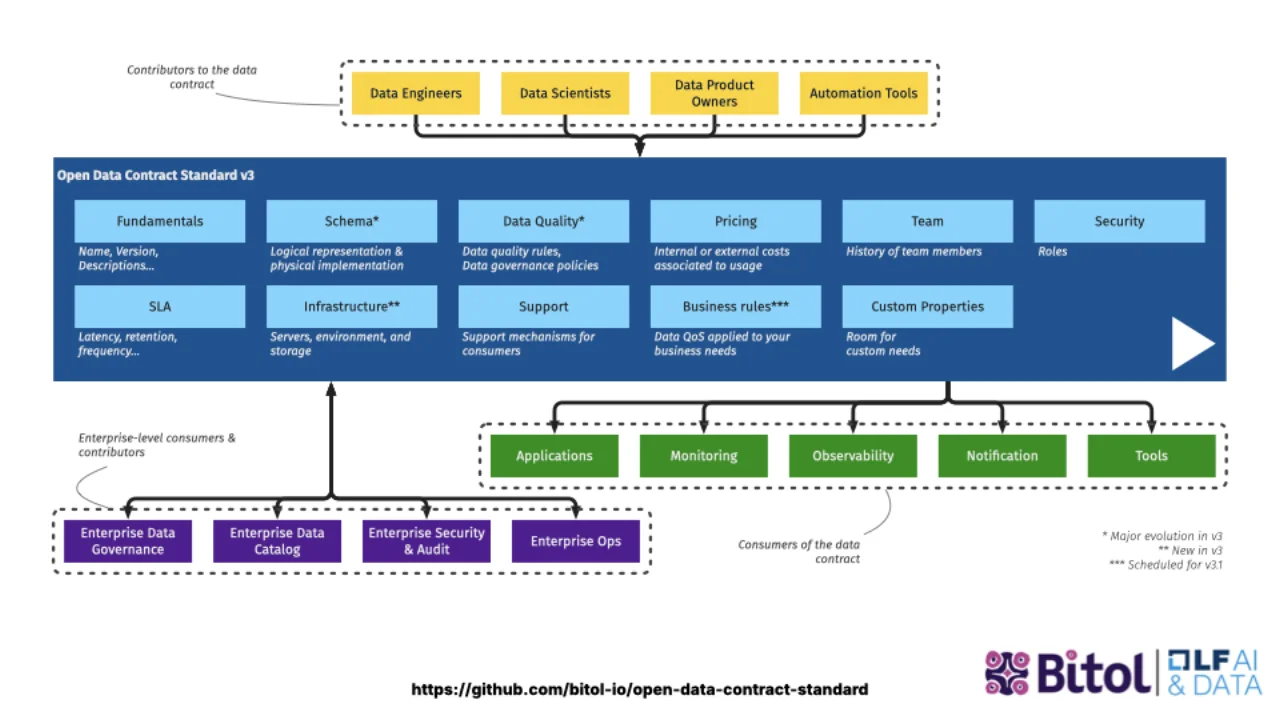

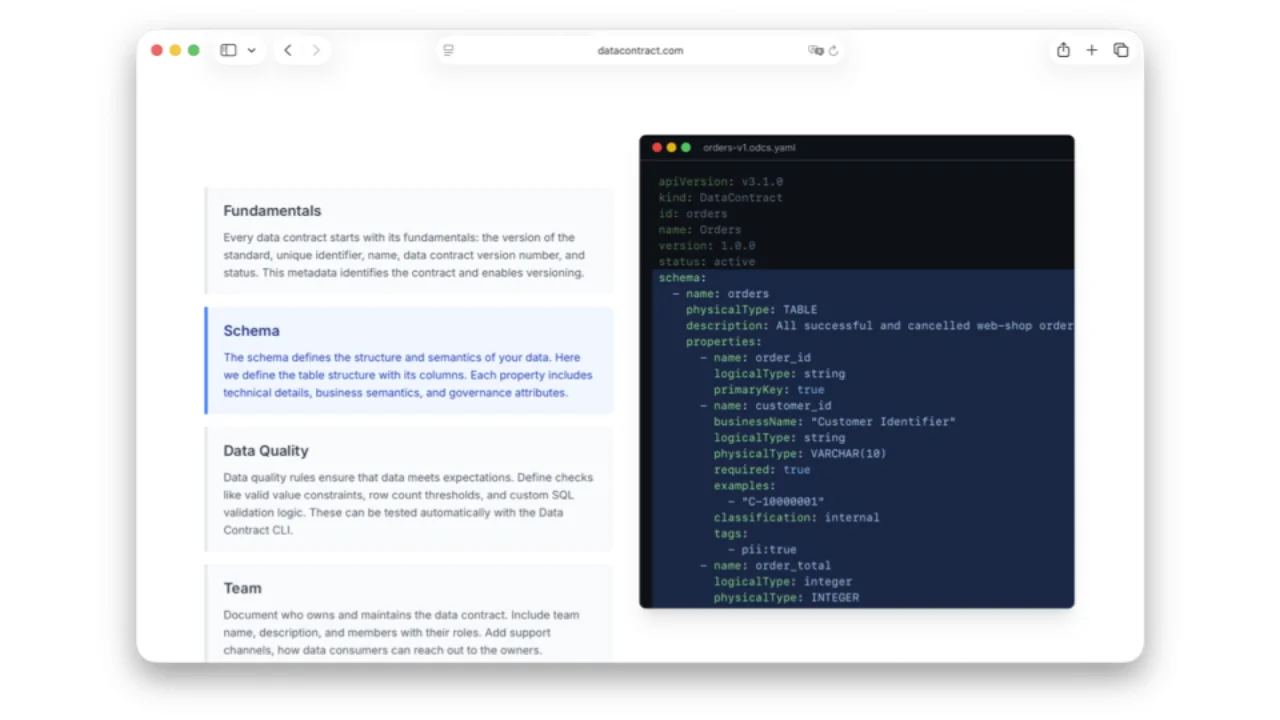

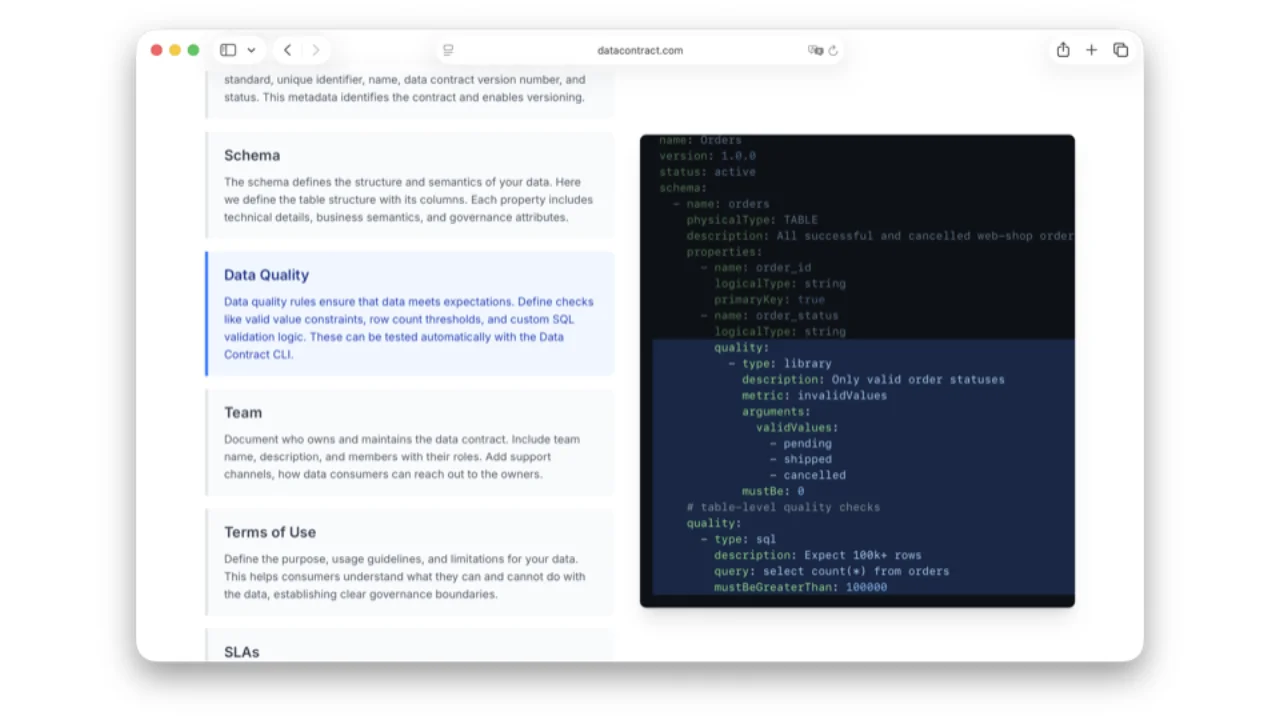

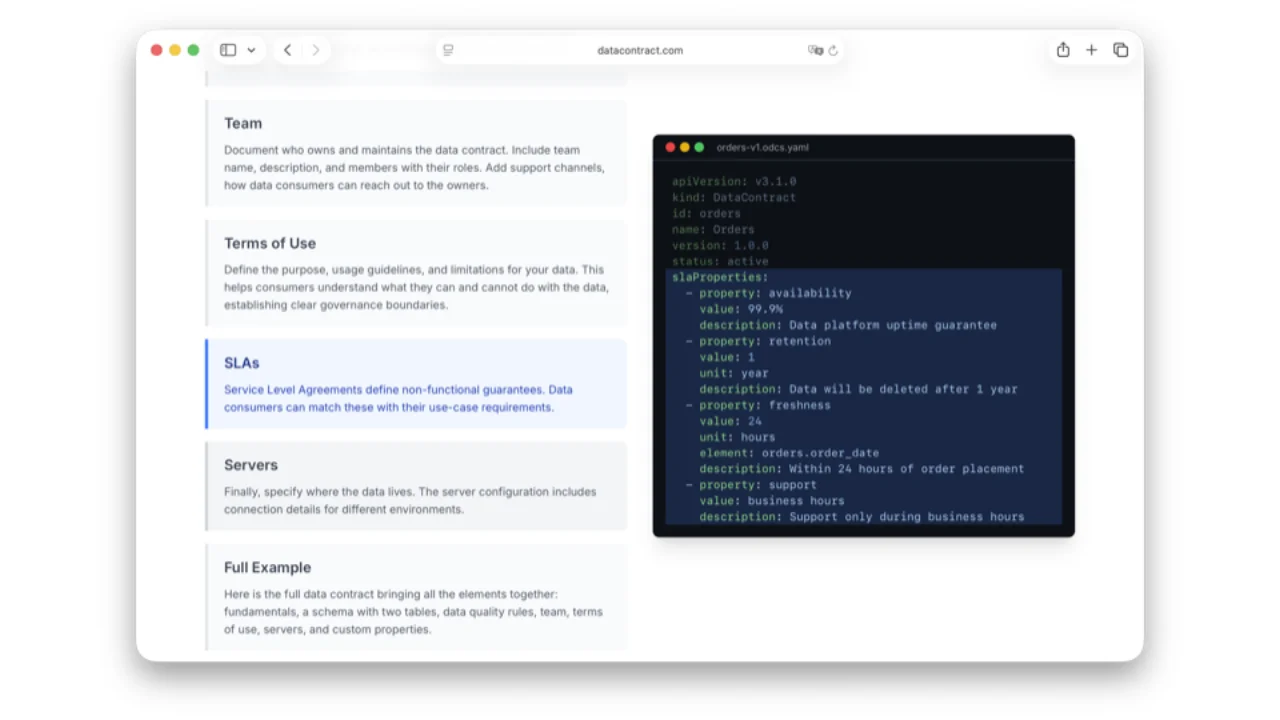

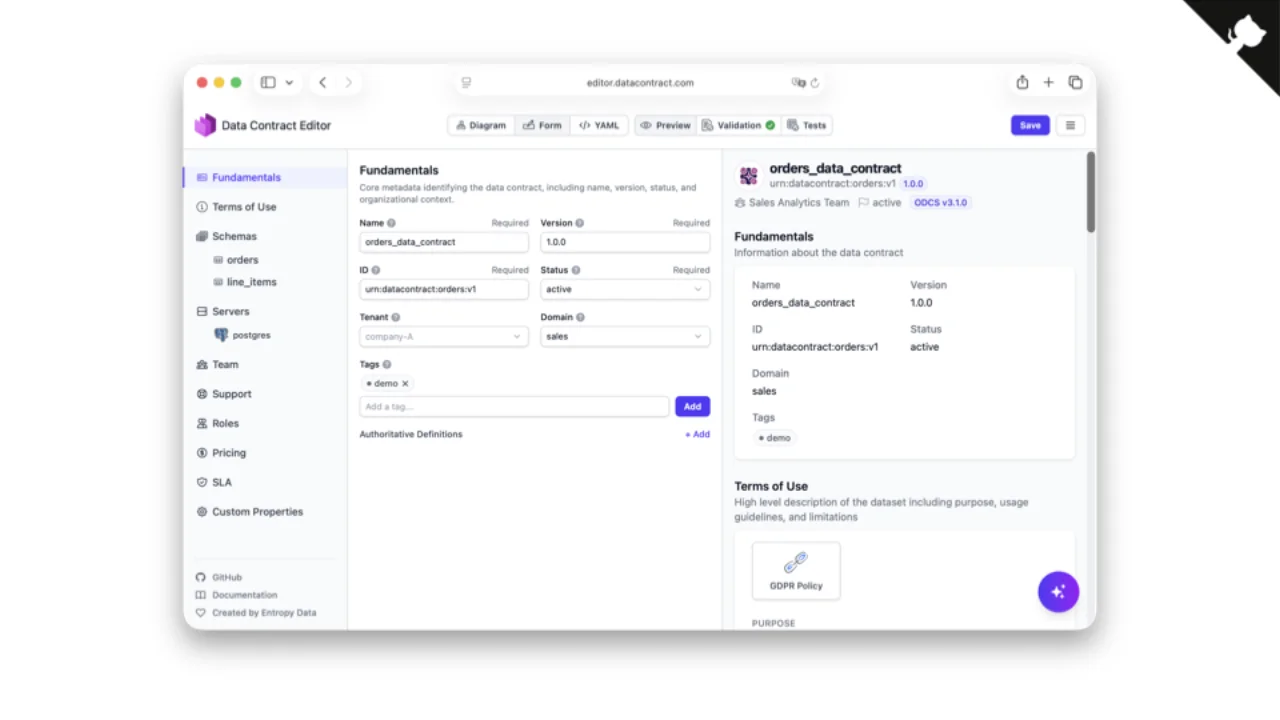

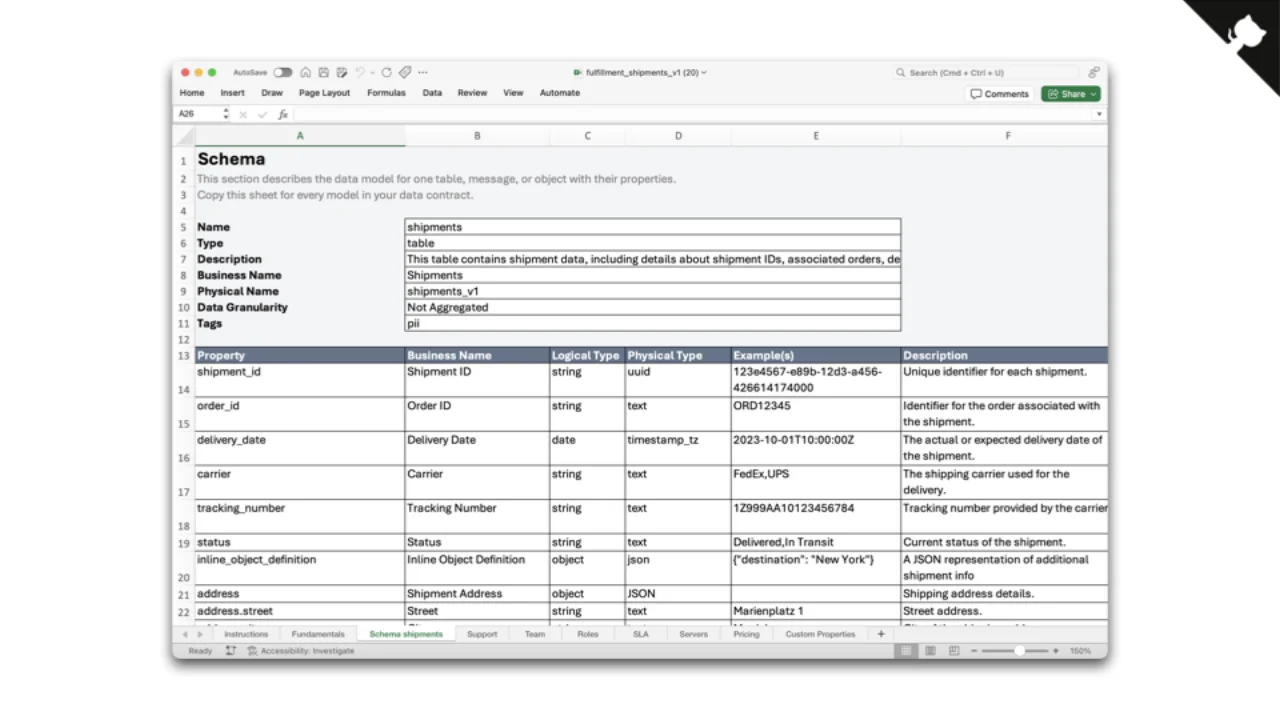

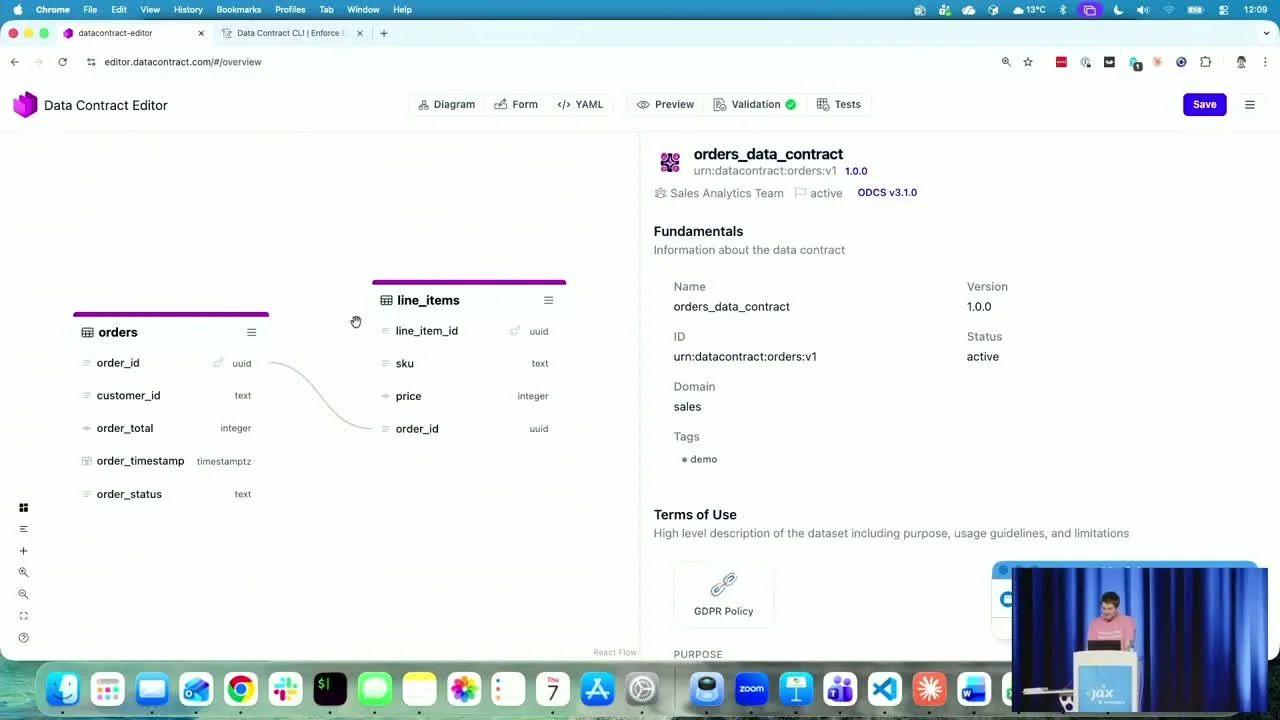

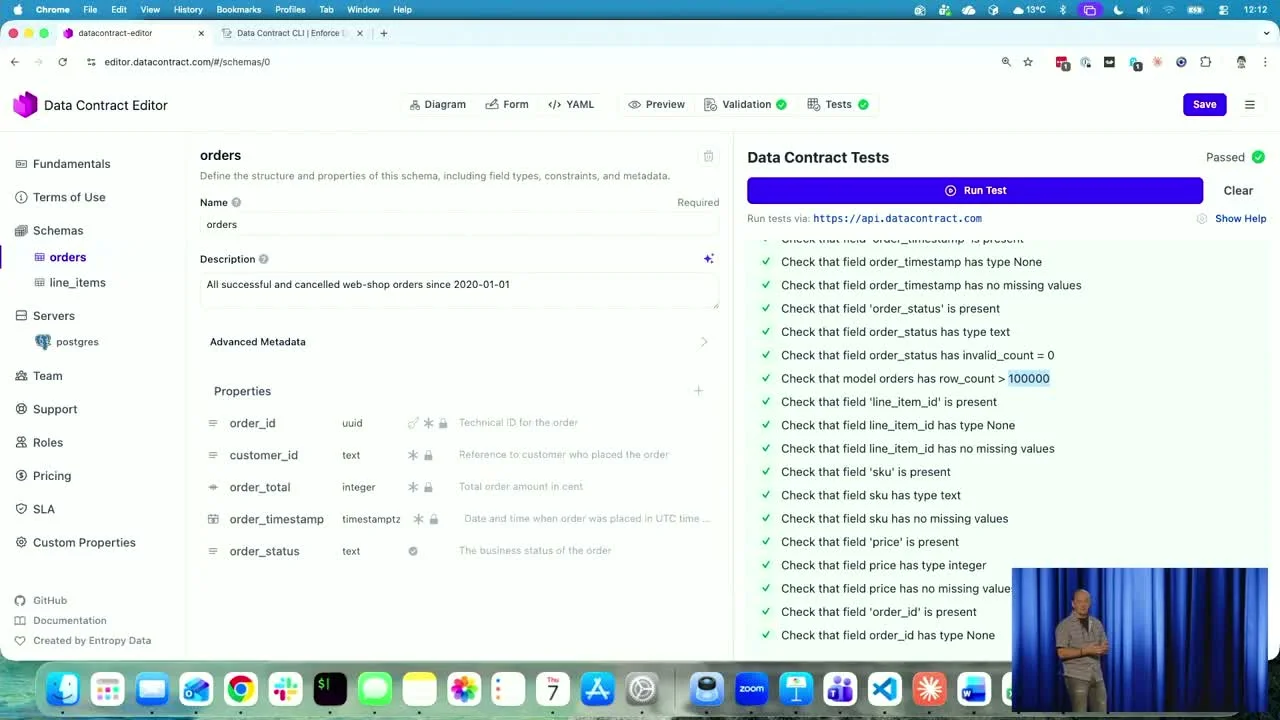

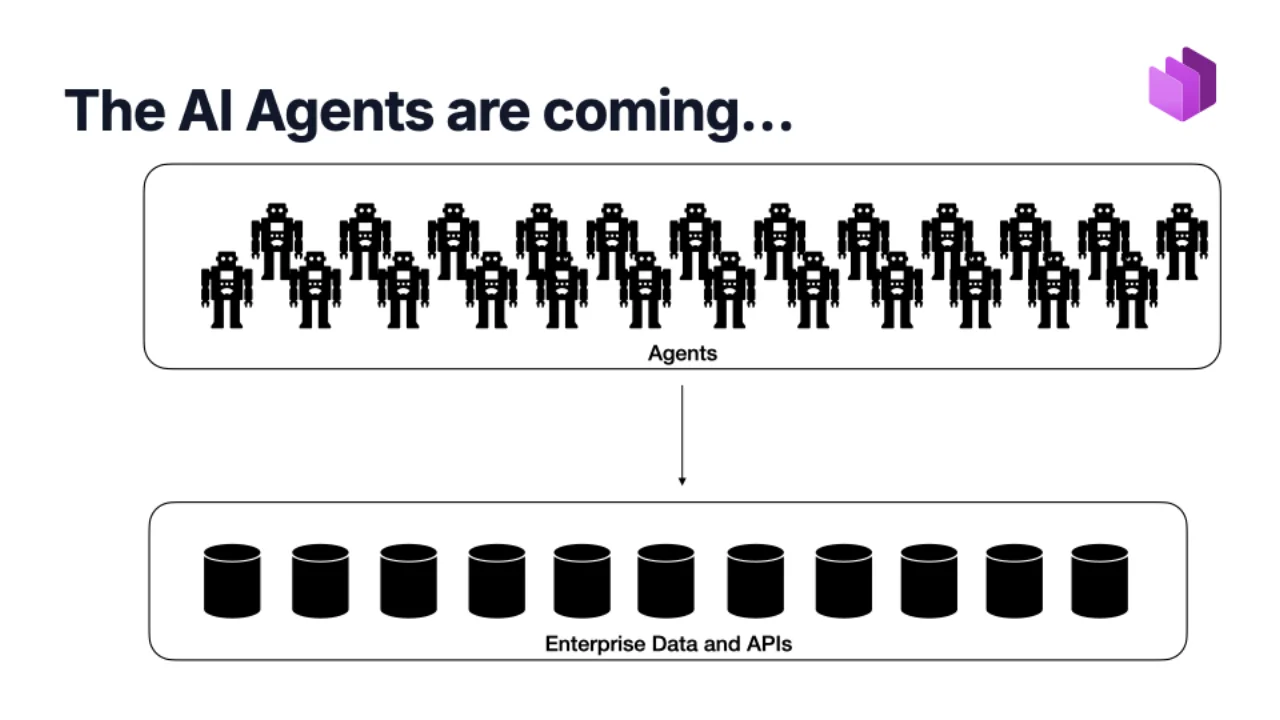

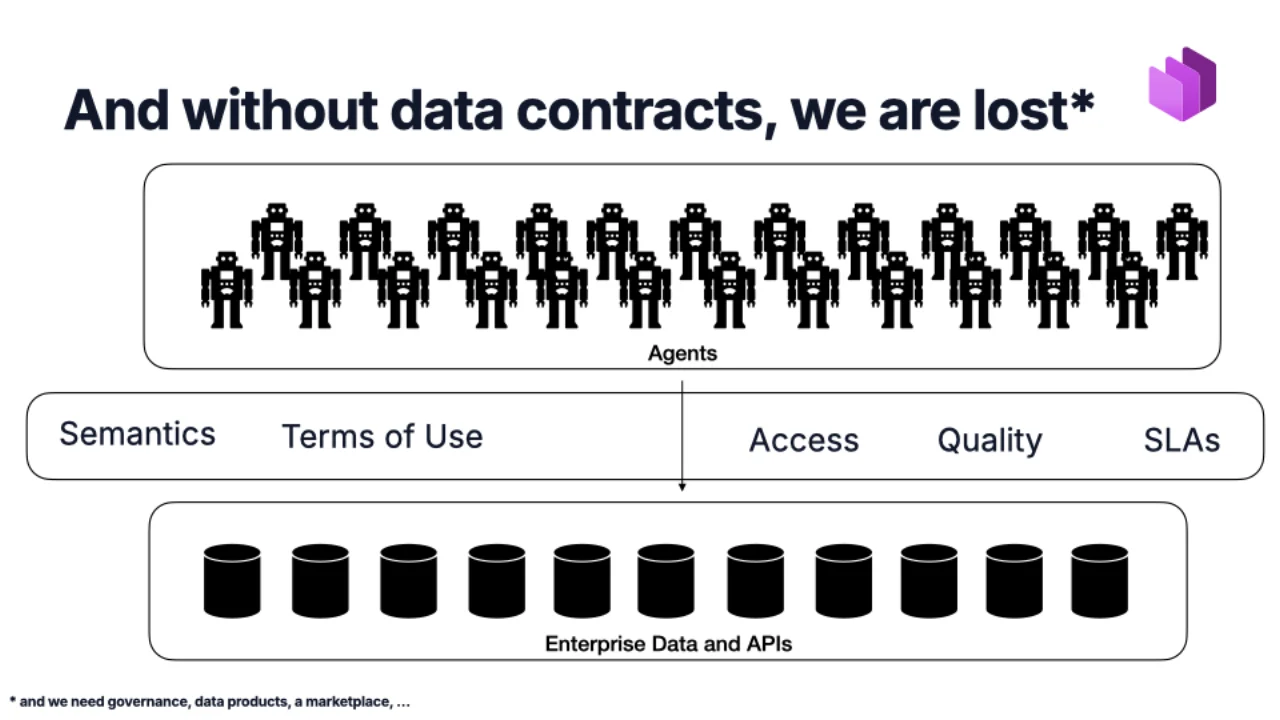

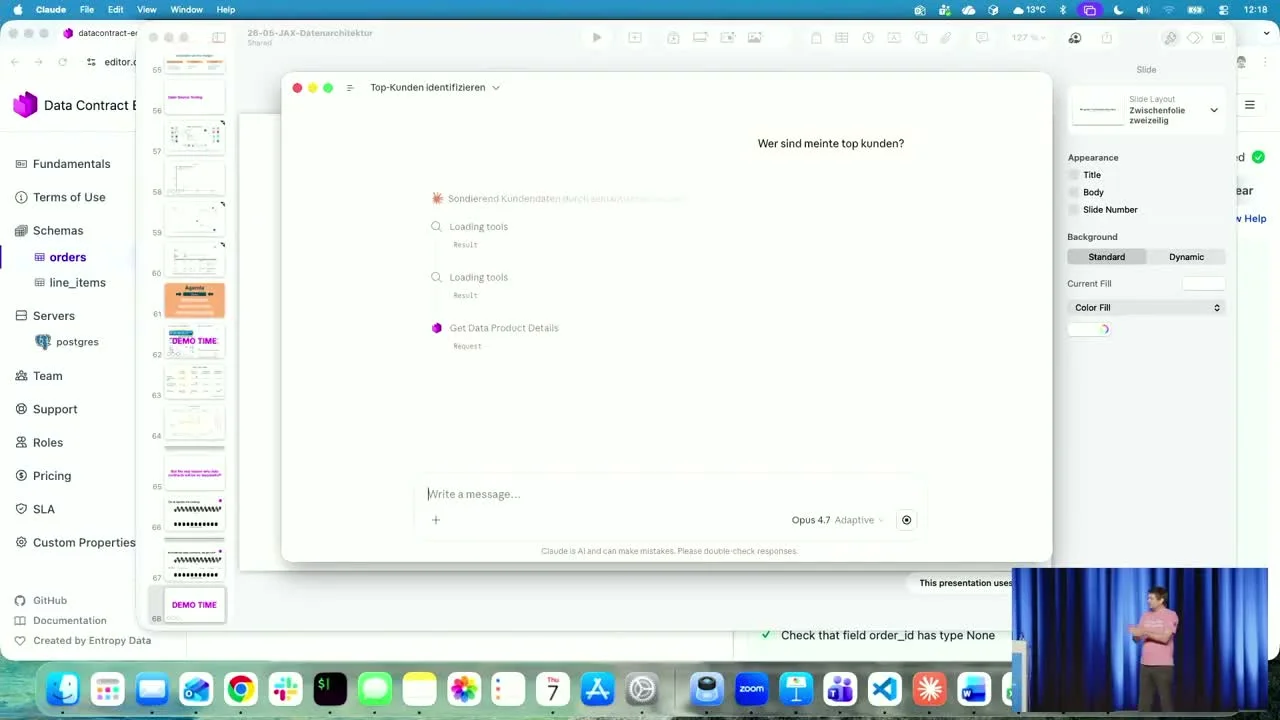

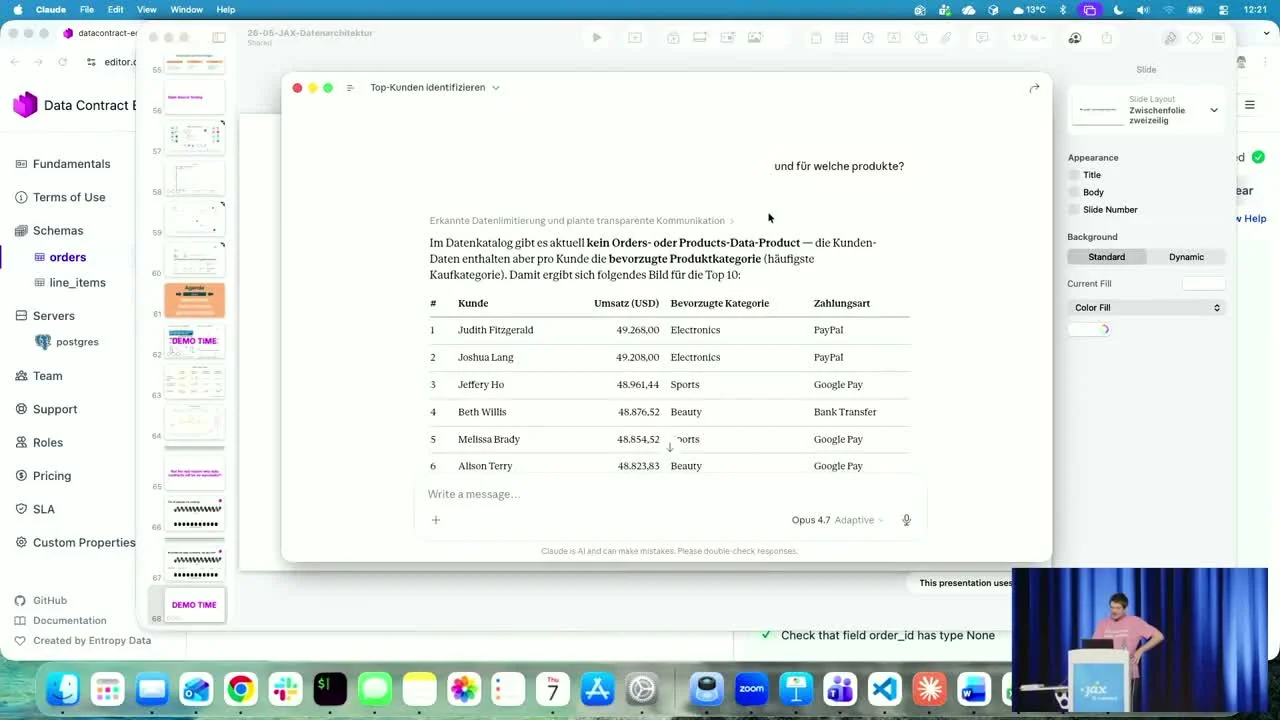

A joint talk at JAX 2026 in Mainz. Gernot opens with the long view -- the history of data storage and a thesis that software engineering largely forgot about data between 1995 and 2020. Simon follows with the fix: data contracts as the API specification layer for data, the Open Data Contract Standard (ODCS), and a live demo of an AI agent answering business questions on top of contract-backed data products.

Recorded live at JAX 2026 in Mainz. The annotation below is an edited English summary of the talk.

Q&A

Selected questions from the audience after the talk.

Q: How are contracts actually enforced -- particularly around data exfiltration and usage limits?

On the producer side you can fully enforce the guarantees you make: schema, freshness, quality. Enforcing the consumer side of the agreement -- "you may only use this data for purpose X, not Y" -- is harder. Our answer is, perhaps unsurprisingly, more AI: in practice you can only police AI behaviour with another AI, because a purely rule-based filter either lets everything through or strangles the model into uselessness. You can also harden access by protection class -- e.g., the AI is simply forbidden from touching the most sensitive datasets at all. That is rule-based and clean, but it also means the AI cannot help with those datasets. Either way, the fact that the AI knows the data exists and where it lives is itself a risk you have to manage, the same way we manage every other dual-use technology.
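As a rough illustration of the two rule-based parts of this answer (producer-side guarantees and a hard block by protection class), here is a minimal Python sketch. The `Contract` class, its field names, and the class labels are hypothetical simplifications for this example. They are not the ODCS format, which is a richer YAML document.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical, simplified contract (not the real ODCS structure).
@dataclass
class Contract:
    required_fields: dict   # field name -> expected Python type
    max_age: timedelta      # freshness guarantee made by the producer
    classification: str     # assumed labels: "public", "internal", "restricted"

# Assumed policy: protection classes an AI agent may never read.
AGENT_FORBIDDEN_CLASSES = {"restricted"}

def check_producer_guarantees(contract, rows, last_updated, now):
    """Return a list of violated producer-side guarantees (empty if all hold)."""
    violations = []
    if now - last_updated > contract.max_age:
        violations.append("freshness: data is older than max_age")
    for i, row in enumerate(rows):
        for name, expected in contract.required_fields.items():
            if row.get(name) is None:
                violations.append(f"schema: row {i} is missing '{name}'")
            elif not isinstance(row[name], expected):
                violations.append(f"schema: row {i} field '{name}' is not {expected.__name__}")
    return violations

def agent_may_read(contract):
    """Rule-based hardening: block whole protection classes for the agent."""
    return contract.classification not in AGENT_FORBIDDEN_CLASSES

orders = Contract({"order_id": str, "amount": float}, timedelta(hours=24), "internal")
now = datetime(2026, 5, 7, 12, 0, tzinfo=timezone.utc)
rows = [{"order_id": "A-1", "amount": 19.9}, {"order_id": "A-2", "amount": None}]
violations = check_producer_guarantees(orders, rows, now - timedelta(hours=30), now)
```

The purpose restriction on the consumer side ("X, not Y") is deliberately absent from the sketch: as the answer notes, that part does not reduce to a simple rule.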

Q: Do you need a central registry so contracts can actually be discovered?

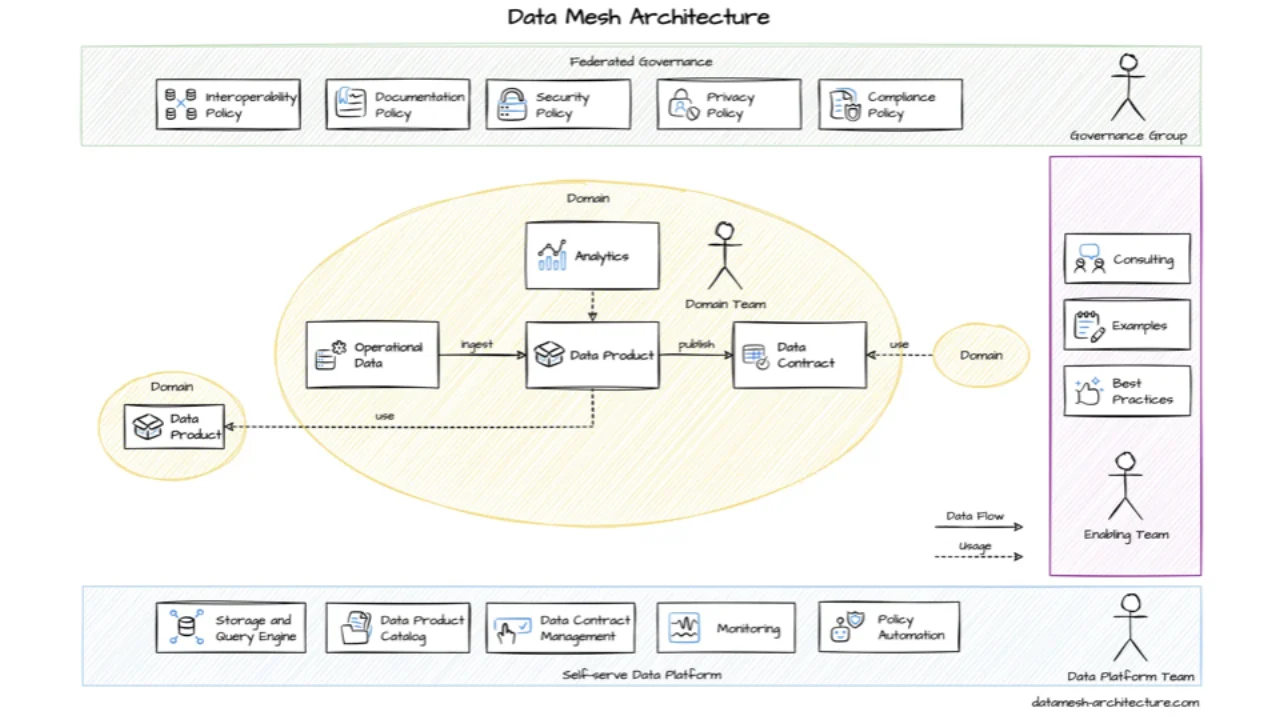

Yes. We recommend a data marketplace: a central place where consumers can shop for data, and access is requested as part of that flow. It is the analogue of an API gateway or API catalog. The contracts themselves are managed decentrally by the domain teams, but the registry has to be central so the discovery story works -- the classic decentralisation / centralisation balance you have to strike in any platform.
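The split between central discovery and decentral ownership can be sketched in a few lines of Python. The `Marketplace` and `Listing` classes and their methods are hypothetical and stand in for whatever catalog product is actually used. The point is only that the registry holds references and access requests, while each listing stays owned by a domain team.

```python
from dataclasses import dataclass, field

@dataclass
class Listing:
    contract_id: str
    owner_team: str    # the domain team keeps ownership of the contract
    description: str

@dataclass
class Marketplace:
    """Central registry: one place to discover contracts and request access."""
    listings: dict = field(default_factory=dict)
    access_requests: list = field(default_factory=list)

    def publish(self, listing):
        self.listings[listing.contract_id] = listing

    def search(self, term):
        term = term.lower()
        return [l for l in self.listings.values() if term in l.description.lower()]

    def request_access(self, consumer, contract_id, purpose):
        if contract_id not in self.listings:
            raise KeyError(f"unknown contract: {contract_id}")
        # The stated purpose is recorded as part of the access flow.
        self.access_requests.append((consumer, contract_id, purpose))

market = Marketplace()
market.publish(Listing("orders-v1", "checkout-team", "Confirmed customer orders"))
market.publish(Listing("stock-v2", "warehouse-team", "Current stock levels"))
hits = market.search("orders")
market.request_access("analytics-team", "orders-v1", "monthly revenue report")
```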

Q: How does this fit with service contracts and OpenAPI? Aren't those also describing data, via DTOs?

OpenAPI describes the shape of a single record: this field is optional, this one is not, maybe a regex. Beyond that it is silent. It does not tell you whether a field is PII-sensitive, what its internal protection class is, or how it relates to other concepts in the business. The link from orderId in the API to OID2 in a database table is the kind of thing only a semantic layer captures. With good metadata -- ODCS on one side, OpenAPI on the other -- the AI can recognise that the two things are the same concept and join across them. Without that metadata, the AI has to assume, and assumptions are usually wrong.
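The orderId / OID2 example can be made concrete with a small Python sketch. It assumes that both the API fields and the table columns have already been annotated with a shared business-concept identifier. The `concept:...` identifiers and the two dictionaries are invented for illustration, and how such annotations are stored in an OpenAPI or ODCS document is left open here.

```python
# Hypothetical metadata: each field is annotated with the business concept it represents.
openapi_fields = {
    "orderId": "concept:order-id",
    "customerEmail": "concept:customer-email",
}
table_columns = {
    "OID2": "concept:order-id",
    "AMT": "concept:order-amount",
}

def shared_concepts(api_fields, columns):
    """Map each concept present on both sides to its (API field, table column) pair."""
    column_by_concept = {concept: col for col, concept in columns.items()}
    return {
        concept: (api_field, column_by_concept[concept])
        for api_field, concept in api_fields.items()
        if concept in column_by_concept
    }

join_keys = shared_concepts(openapi_fields, table_columns)
```

With the annotations in place the join key is looked up, not guessed. Without them, nothing in the names `orderId` and `OID2` tells a program or a model that they denote the same thing.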

Q: Should we stop building read-only interfaces for external systems and just publish data contracts instead?

A REST GET is already a read interface, so technically you can put a contract on it -- the only premise of a data contract is that data is being shared for reading. The deeper question is design: small, point-to-point APIs, each tailored to one consumer's request pattern, multiply quickly and become a maintenance burden in the data world. You want few, well-designed offerings serving many consumers -- product thinking, one-to-many. It is the same instinct that pushes us away from per-consumer microservices.

Q: A contract describes the producer's side. How do you see where the data actually flows -- who consumes it, how it is used?

A marketplace gets you part of the way: consumers request access with a stated purpose, which is recorded. Beyond that, lineage formats like OpenLineage report how data actually flows through the systems downstream, including column-level lineage. Combine the two and you can check whether your macro-architecture guidelines match the real flows -- a powerful audit of "what we said we were doing" against "what is actually happening".
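The "said versus actual" comparison boils down to a set difference. The sketch below assumes both sources have already been reduced to (dataset, consumer) pairs. The sample data and the `audit_flows` helper are hypothetical, and no real marketplace or OpenLineage API is called.

```python
# Assumed inputs, already normalised to (dataset, consumer) pairs:
# declared = approved access requests recorded in the marketplace
# observed = flows reconstructed from lineage events
declared = {("orders", "billing"), ("orders", "analytics")}
observed = {("orders", "billing"), ("orders", "marketing")}

def audit_flows(declared, observed):
    """Compare what was agreed with what actually happens."""
    return {
        "undeclared": sorted(observed - declared),  # flows nobody requested access for
        "unused": sorted(declared - observed),      # approved access that never shows up
    }

report = audit_flows(declared, observed)
```

Undeclared flows are the ones worth investigating first. Unused grants are candidates for revocation.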