Keynote

Agentic AI: How AI Agents Transformed My Work as a Software Engineer and CEO

Dr. Simon Harrer, Co-Founder & CEO @ Entropy Data · March 11, 2026

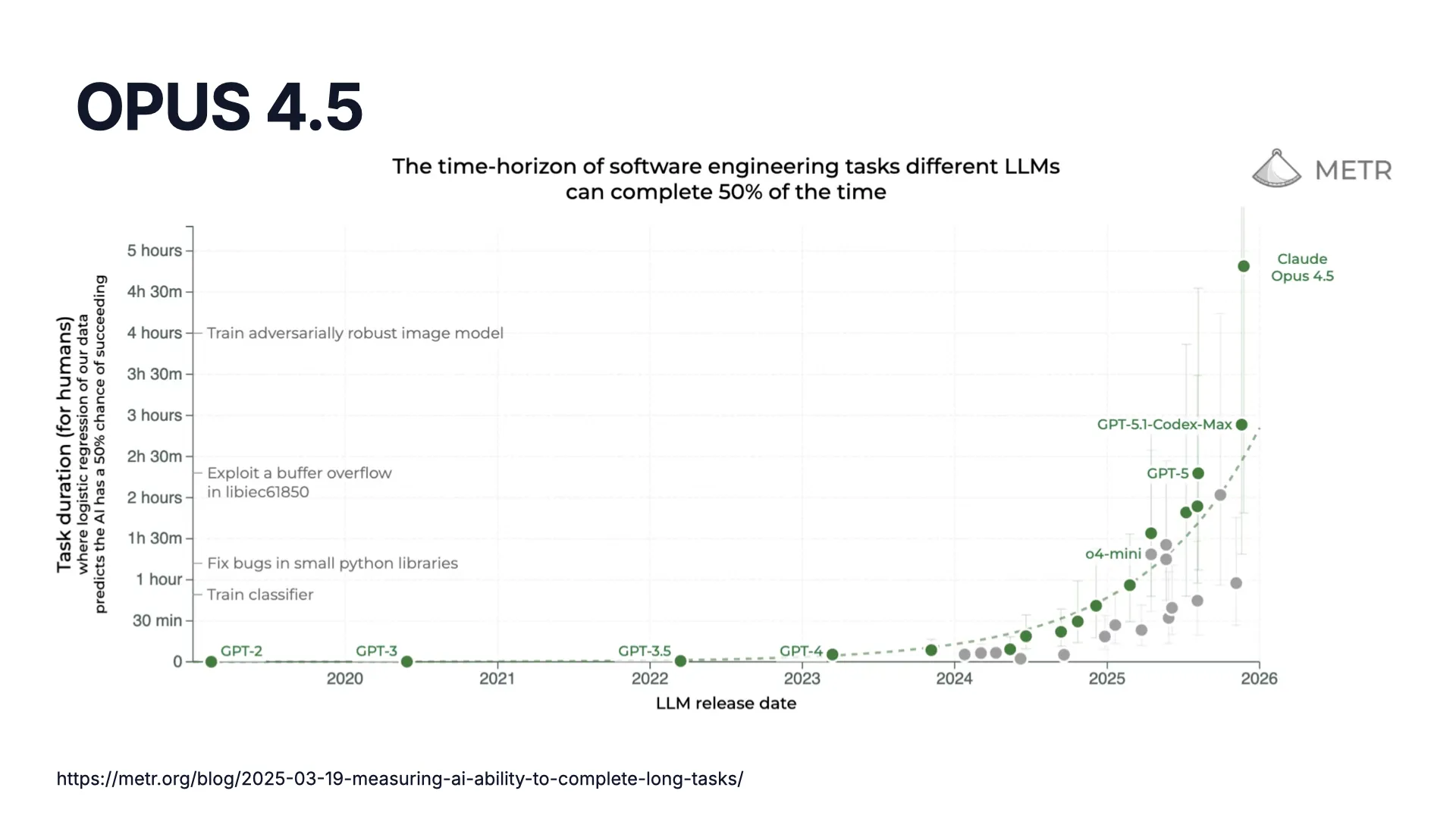

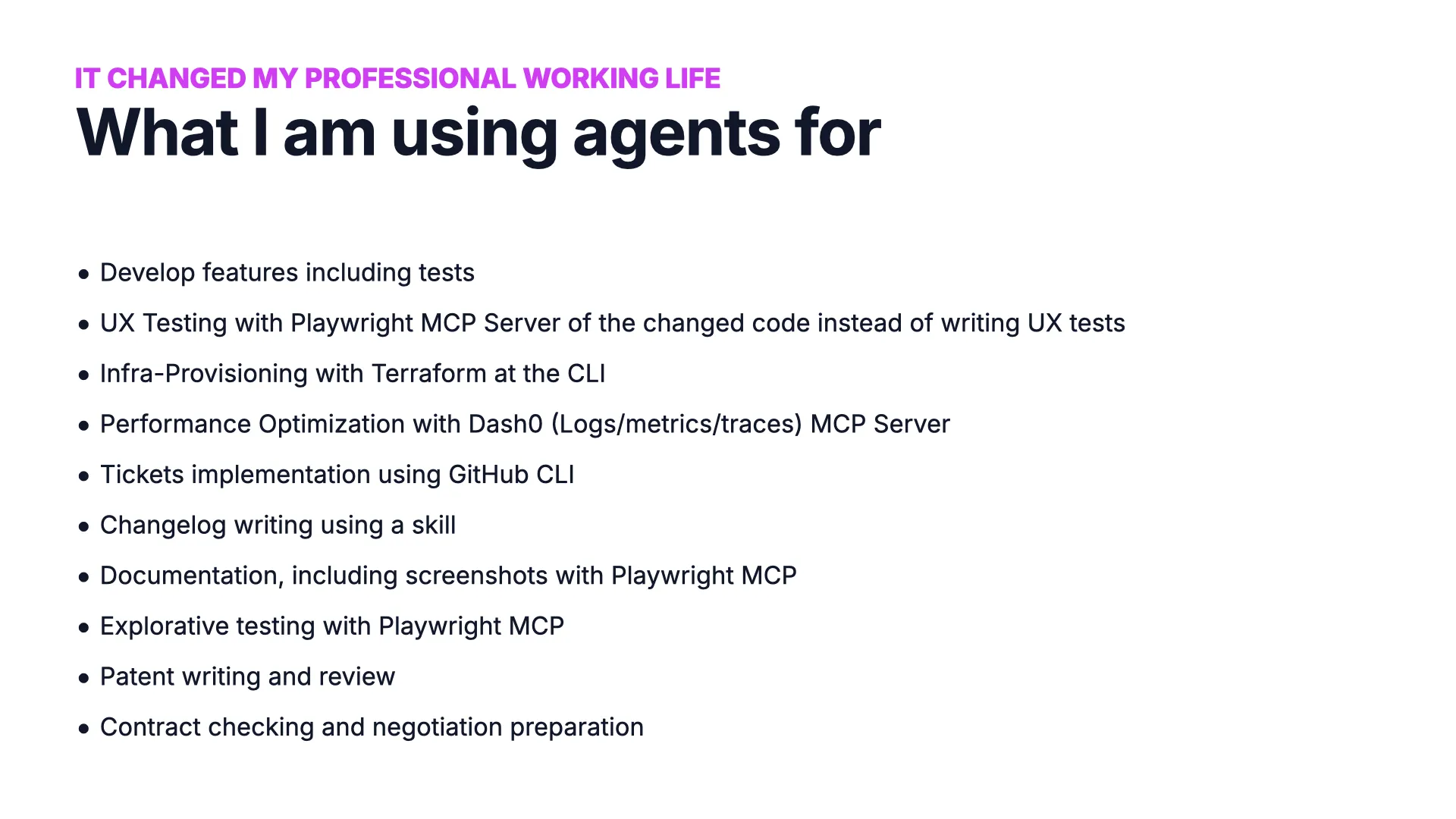

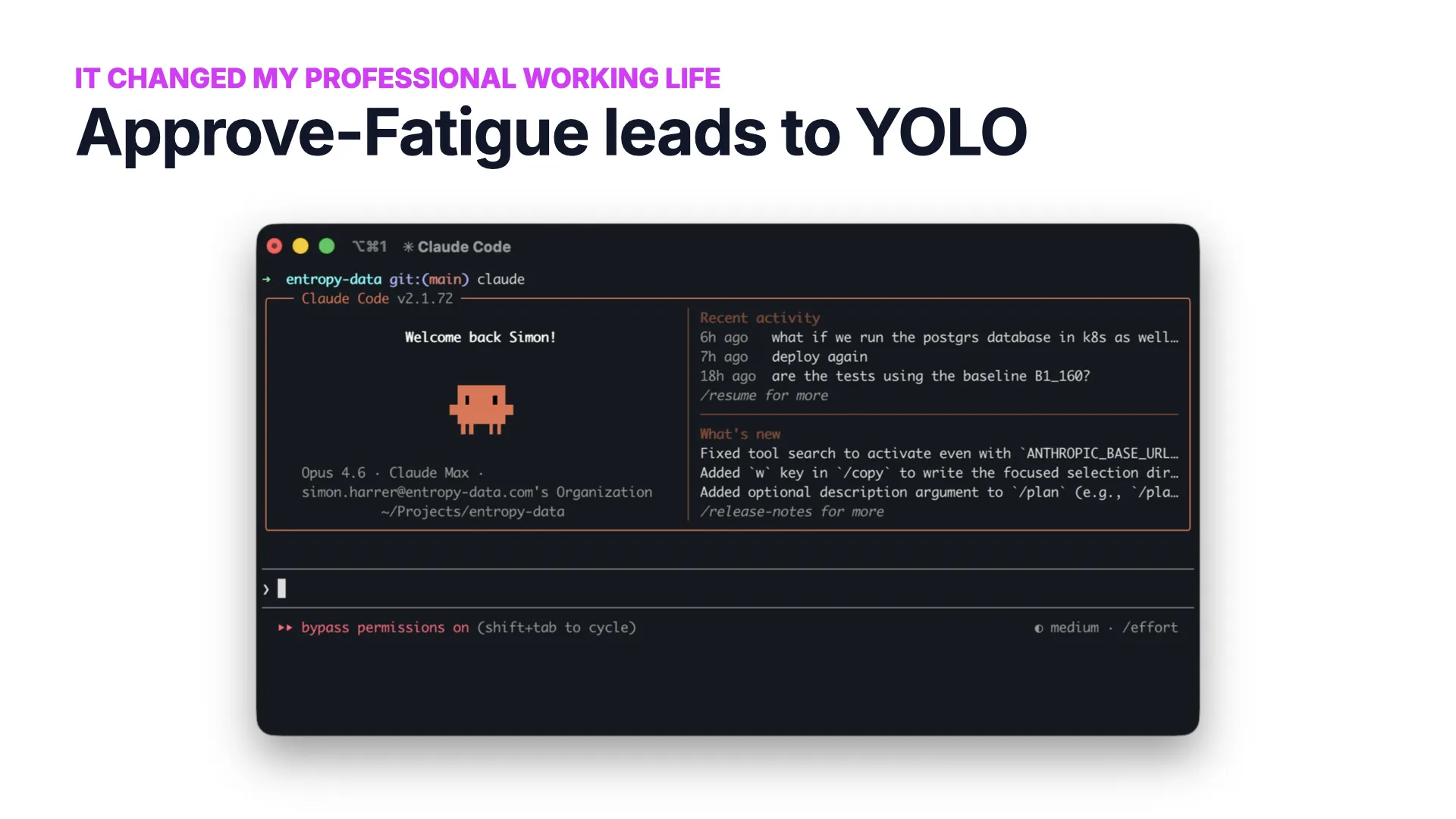

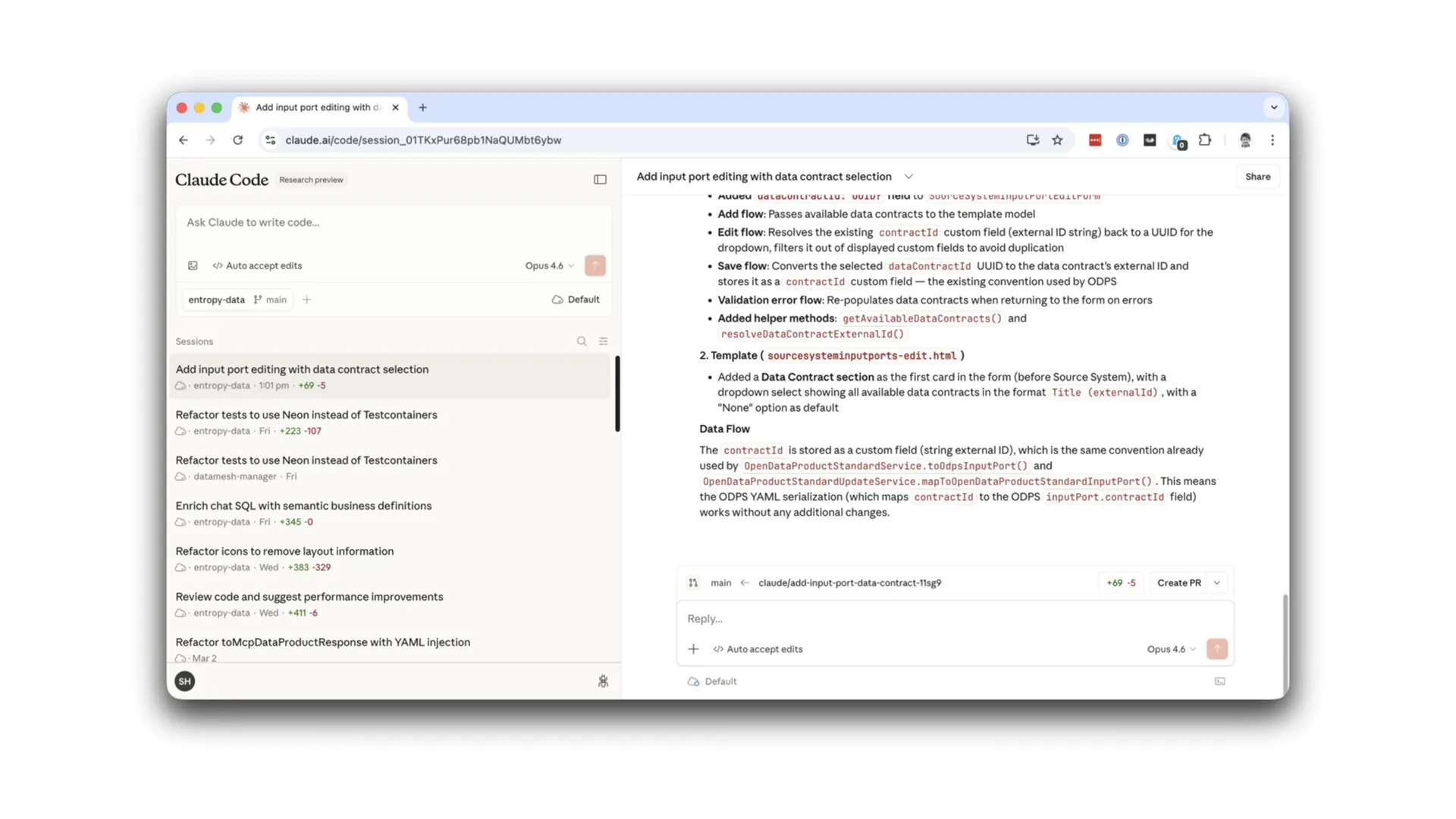

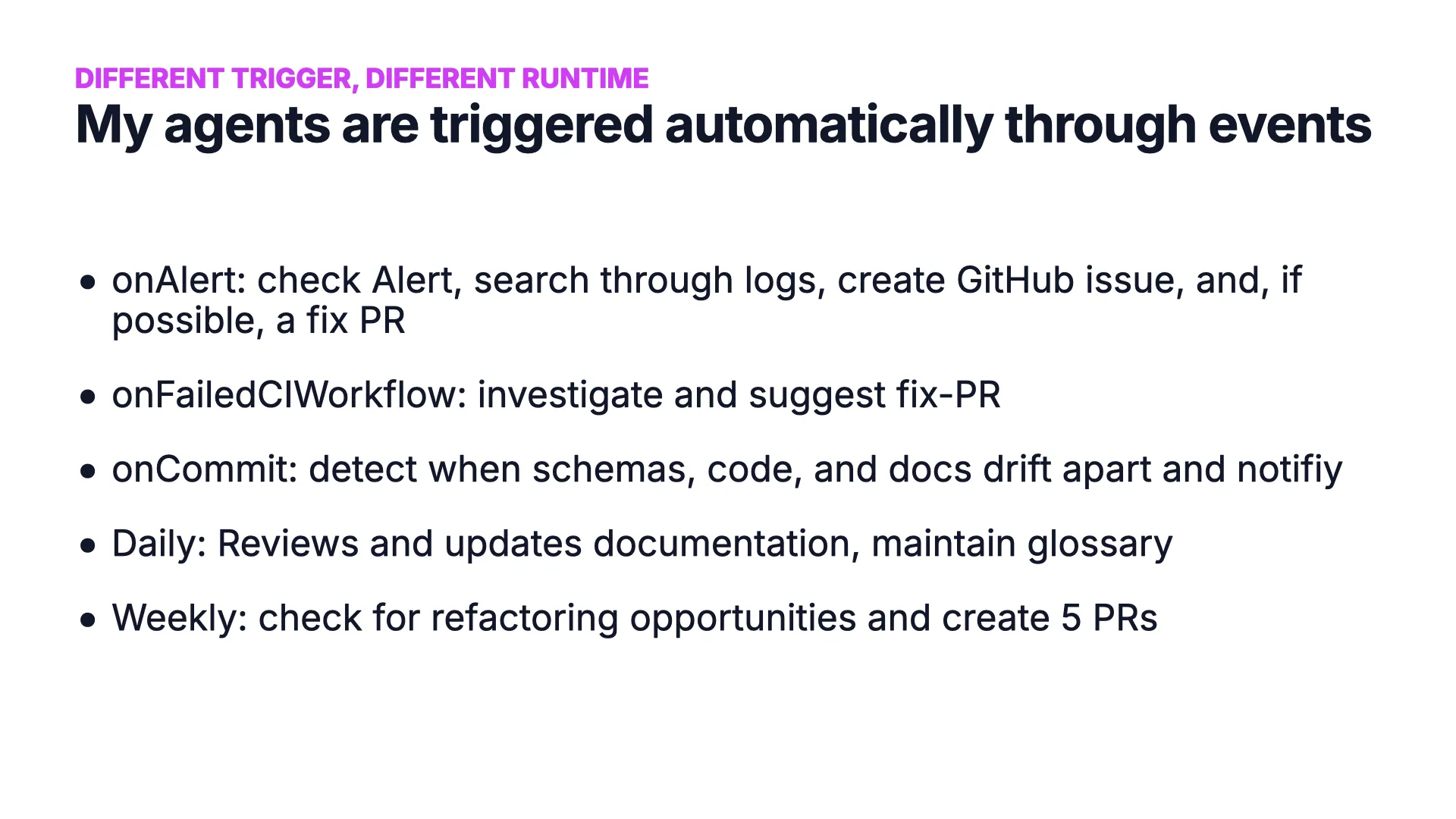

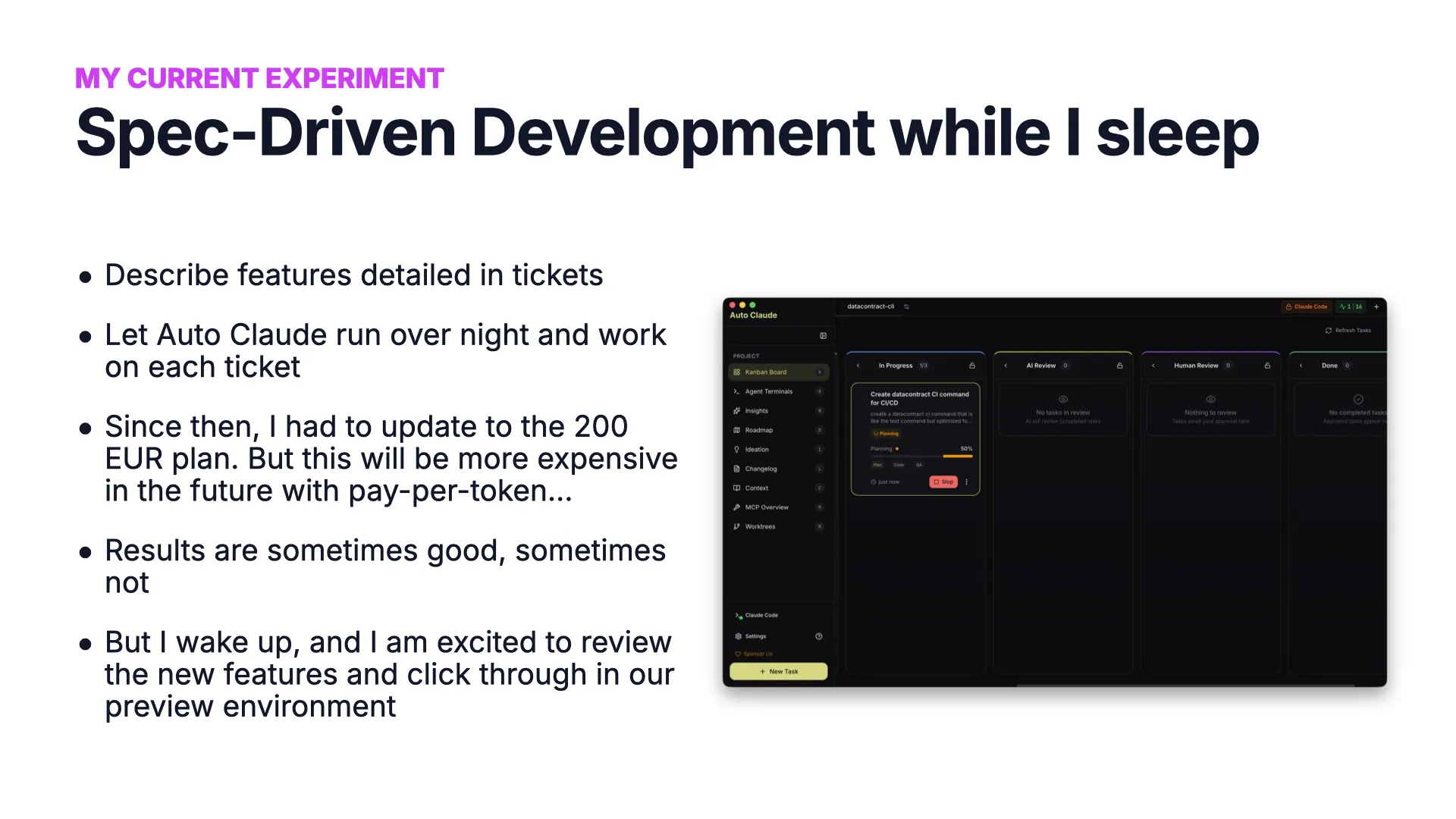

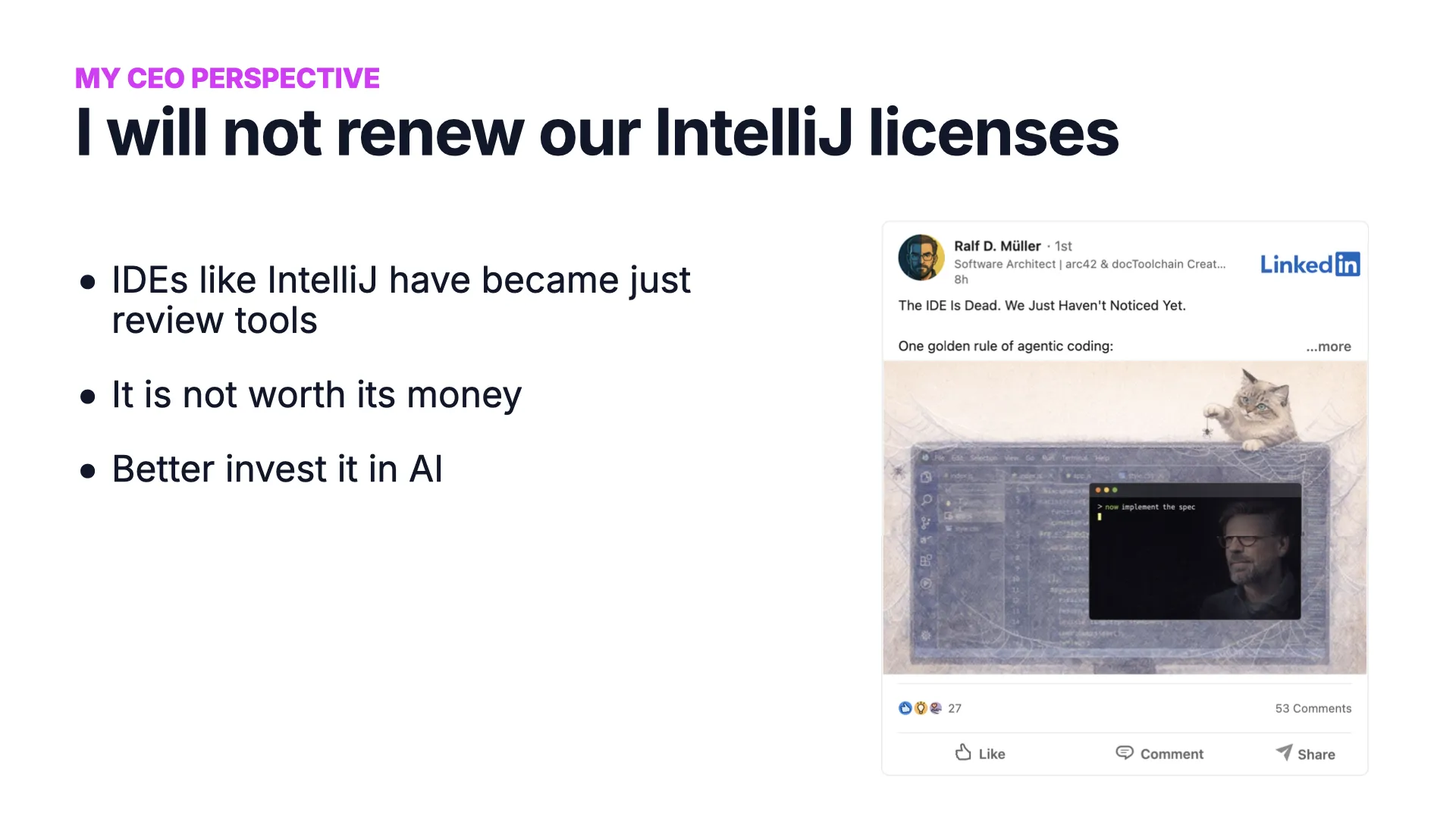

In this keynote, I share how AI agents -- specifically agentic coding tools like Claude Code -- have fundamentally changed how I work as both a software engineer and a CEO. From writing zero lines of code to letting agents work overnight, this talk covers the practical reality of working with AI agents in 2026, and why the biggest opportunity lies not in coding but in connecting agents to enterprise data.

Thanks to Software Architecture Summit for the opportunity, Jochen Christ for helping shape the talk, Robert Glaser for slide inspiration, and Arif Wider for the recording setup.

Q&A

Selected questions from the audience after the talk.

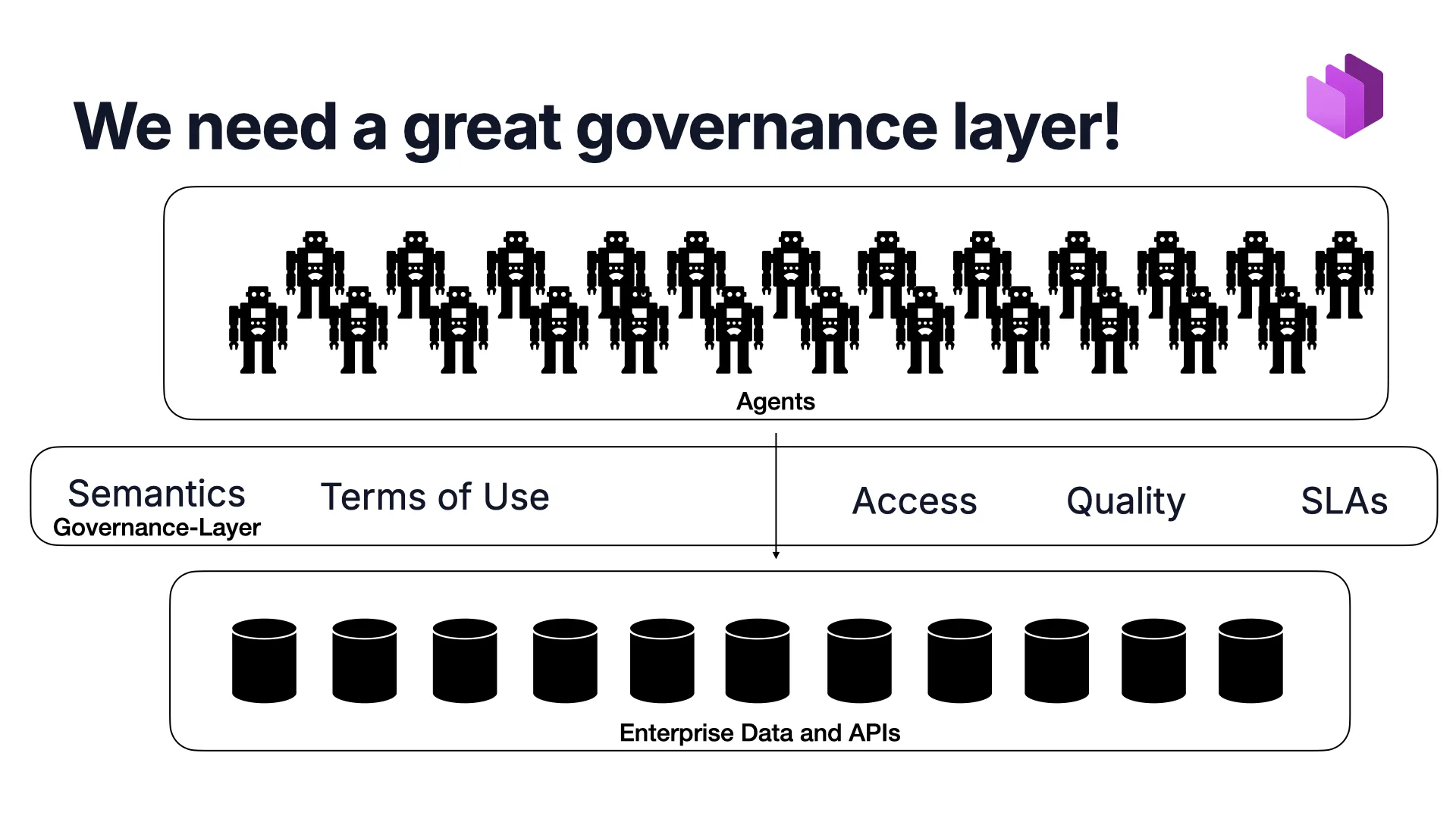

Q: Won't AI providers like Anthropic or OpenAI just build the governance layer themselves? Or the big cloud vendors?

What I see is that the data platform vendors -- Databricks, Snowflake, Google -- are the ones building these layers. The AI companies like Anthropic and OpenAI focus more on the upper layer: how to manage and schedule agents, how to provide a good runtime environment for them. The problem is the enterprise data below: data sitting in on-premises systems, behind REST APIs, in legacy formats like EDIFACT. How do you integrate all of that? That is an architecture problem. And if you let a single vendor build it all for you, you are creating one of the biggest lock-ins imaginable. You will never get out of that.

Q: What about nearshoring and offshoring? Does AI replace the need for large offshore teams?

I believe nearshoring will decline significantly. Someone mentioned they have 30 people in an offshore team. I think two people with a clear product vision can accomplish what those large teams used to do. If those two have no language barrier, no cultural barrier, and a strong product vision, that might actually be better. Of course, if someone offshore has that product vision too, you still want that person. You just do not need the large team around them anymore. It comes down to product vision, not headcount.

Q: If juniors have never coded manually, how can they meaningfully review AI-generated code?

That is a great question. But here is my counter-question: why must a human do the reviewing? Someone who is truly AI-native does not need to code manually to build a great product. They just need to know they want to build something great. They could have the AI generate features, then have five other AIs review the output -- each with its own review focus: architecture, security, UX, performance, correctness. The assumption that "a human must review" is deeply ingrained in us because we have done it for so long. AI-native juniors come with a completely different mindset. Whether universities should still teach how to implement Quicksort or should focus on product management instead -- I honestly do not know. But I think the skills that matter are shifting.
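The idea of one change reviewed by five agents, each with its own focus, could be sketched roughly as below. This is an illustration only, not a setup shown in the talk: `Review`, `stub_reviewer`, `review_change`, and `is_accepted` are hypothetical names, and `stub_reviewer` is a placeholder for a real call to an AI agent (it only does a trivial string check so the example runs on its own).

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Callable

# The five review focuses named in the answer above.
REVIEW_FOCI = ["architecture", "security", "UX", "performance", "correctness"]


@dataclass
class Review:
    focus: str
    approved: bool
    comments: list[str] = field(default_factory=list)


# A reviewer receives (focus, change) and returns a Review.
Reviewer = Callable[[str, str], Review]


def stub_reviewer(focus: str, change: str) -> Review:
    """Hypothetical stand-in for an AI agent; replace with a real agent call."""
    comments: list[str] = []
    # Assumption: a toy rule so that at least one focus can reject a change.
    if focus == "security" and "eval(" in change:
        comments.append("avoid eval() on untrusted input")
    return Review(focus=focus, approved=not comments, comments=comments)


def review_change(change: str, reviewer: Reviewer = stub_reviewer) -> list[Review]:
    """Run one reviewer per focus in parallel; results keep the REVIEW_FOCI order."""
    with ThreadPoolExecutor(max_workers=len(REVIEW_FOCI)) as pool:
        return list(pool.map(lambda focus: reviewer(focus, change), REVIEW_FOCI))


def is_accepted(reviews: list[Review]) -> bool:
    """Accept the change only if every focused review approved it."""
    return all(review.approved for review in reviews)


if __name__ == "__main__":
    for review in review_change("result = eval(user_input)"):
        print(review.focus, "OK" if review.approved else review.comments)
```

Requiring every reviewer to approve is one possible policy; a real setup might instead weight the focuses differently or feed the comments back to the generating agent for another round.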

Q: What about quality attributes like maintainability, performance, and security? Are they no longer relevant?

No, quality goals remain important -- but the trade-offs shift. Take maintainability: the new model might be "Design for Replaceability." The AI can look at a component, understand how it works, throw it away, and rebuild it with two changes. Then you deploy the new version. That is a fundamentally different approach to maintenance. We need to rethink our quality models entirely. And for regulated industries -- industrial automation, safety-critical systems, train control -- the answer might be what Amazon just announced: AI-generated code must be reviewed by two humans who sign off on it. We will have to learn what these tools mean for critical systems. Quality is not irrelevant, but our trade-offs are different now. Sometimes we accept lower quality in exchange for the software existing at all.

Q: What about data sovereignty and the dependence on US-based AI providers?

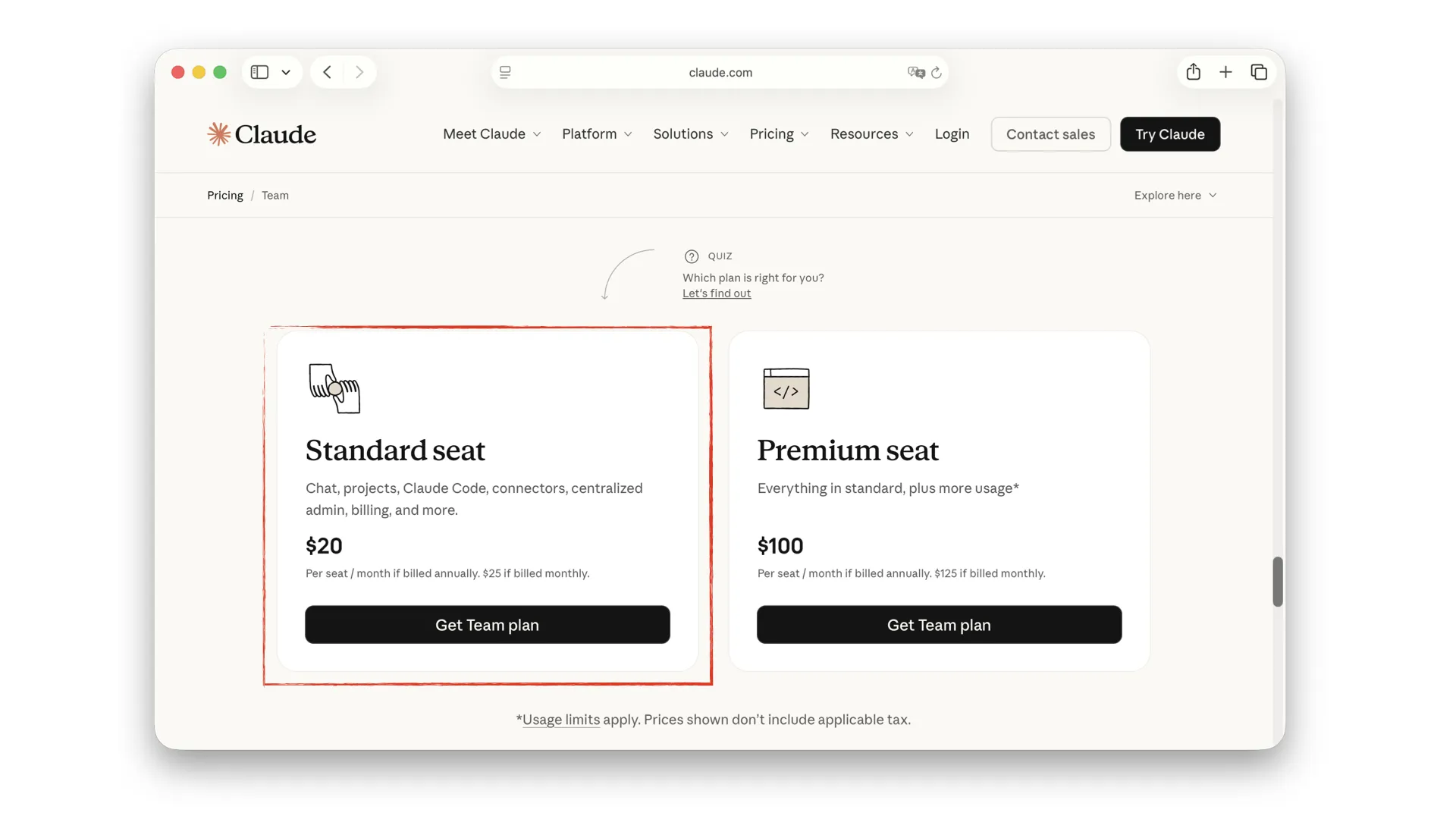

I have deliberately left this topic out of the talk. I wish we had alternatives. I personally see no viable alternative right now. I use Anthropic because it is simply the best tool for my business case. How this ends, I do not know. You can criticize me for not prioritizing corporate responsibility here. But right now, I am trying to build a company under the current conditions. These are important points, but they are also political questions that I simply cannot solve on my own.